Firing Up 75K VMs On OpenStack In An Afternoon

Running distributed applications on cloud computing capacity means, in theory, that IT shops never have to do capacity planning again. For large enough aggregations of lines of business inside a company, or of enterprises sharing capacity on a public cloud, the ups and downs across time zones and workloads should all balance out.

This is precisely what Microsoft executives recently told EnterpriseTech was one of the key benefits of hyperscale cloud computing, adding that very few companies in the public cloud arena could offer it. (Amazon Web Services, Google Compute Engine, and Microsoft Azure have massive scale for sure, and IBM SoftLayer, Rackspace Hosting, and a bunch of others are working on it.) Even with excess capacity, all cloud builders, whether an internal IT department or a public cloud provider, need to be able to bring more capacity online relatively quickly.

To give potential customers an idea of how quickly cloud capacity can be brought up on bare metal, commercial Linux distributor Canonical, which provides support for the Ubuntu Server variant of Linux, and AMD, which peddles the SeaMicro line of microservers, recently put the Ubuntu OpenStack variant of the popular open source cloud controller through its paces. The benchmark tests that the two companies ran, which were revealed at this week's OpenStack Summit in Atlanta, showed that a cluster of machines could be configured with a whopping 75,000 virtual machines in 6.5 hours using the combination of management tools from both vendors.

This sure beats trying to build and deploy images by hand.

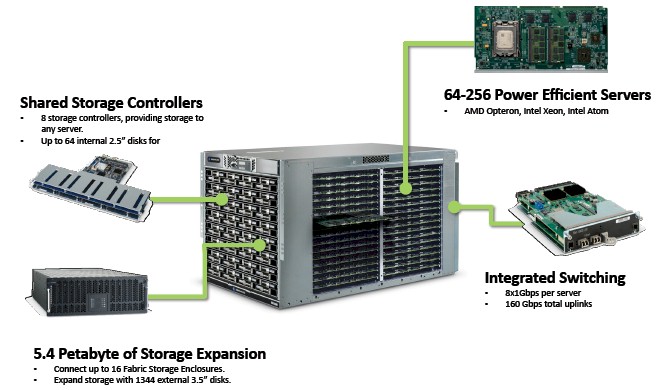

The tests were run on the latest SeaMicro SM15000 microservers from AMD, which were configured with a mix of Xeon E3 processors from Intel and custom Opteron processors from AMD. The SM15000 servers were launched back in September 2012, and the most current server nodes for the machine are based on Intel's "Haswell" Xeon E3-1200 v3 processors and on a custom Opteron 4300 chip with "Piledriver" cores that AMD has not made available to other customers. The SeaMicro processor cards use a set of dual PCI-Express mechanicals to link the nodes into the midplane of the chassis. A custom 3D torus interconnect, called the Freedom Fabric and sporting 1.28 Tb/sec of bandwidth, links the nodes to each other, to storage inside the chassis and in external disk enclosures hanging off it, and to Ethernet ports that reach the outside world to serve up applications. Here is what the machine looks like, if you are unfamiliar with the architecture:

The heart of the original SM10000 systems and their SM15000 follow-ons is a custom ASIC that virtualizes the network links for the server nodes as well as creating that 3D torus interconnect and doing load balancing across the nodes. With the SM15000 design, AMD brought huge blocks of storage under the control of the system. The SM15000 chassis has room for 64 disk drives – one for each physical server card – and up to sixteen external disk enclosures, each capable of holding 84 disk drives. That is a total of 1,344 external disks for a maximum capacity of 5.37 PB per single SM15000 enclosure.
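The storage figures can be checked with a little arithmetic. This is a minimal sketch that assumes the 5.37 PB maximum counts only the external drives and uses decimal units (1 PB = 1,000 TB); under those assumptions it implies drives of roughly 4 TB each, a drive size the figures above do not state directly.

```python
# Figures quoted for a single SM15000 system.
EXTERNAL_ENCLOSURES = 16   # maximum external disk enclosures per system
DISKS_PER_ENCLOSURE = 84   # drives per external enclosure
MAX_CAPACITY_PB = 5.37     # quoted maximum capacity

external_disks = EXTERNAL_ENCLOSURES * DISKS_PER_ENCLOSURE

# Assumption: capacity covers external drives only, in decimal units.
implied_tb_per_disk = MAX_CAPACITY_PB * 1000 / external_disks

print(external_disks)                 # 1344
print(round(implied_tb_per_disk, 2))  # 4.0
```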

For the OpenStack tests, AMD took nine enclosures, for a total of 576 nodes. The Xeon E3 chips have four cores per socket and the custom Opterons have eight cores per socket; AMD did not provide the specific core count used in the test, but it is safe to say that it was somewhere between 2,304 and 4,608 cores. These server nodes were equipped with Ubuntu Server 14.04 LTS, which was announced last month. Ubuntu Server has a physical server provisioning tool called Metal as a Service, or MaaS, which integrates with the RESTful APIs of the SeaMicro management interface to get Ubuntu Server automagically deployed on each of those server nodes. Once the nodes were running Ubuntu Server, which includes the latest "Icehouse" release of the OpenStack cloud controller that was also announced last month, the Juju software installation and configuration orchestration engine, also part of Ubuntu Server, was set loose to create VMs.
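The core-count bounds follow directly from the node count. This sketch assumes one processor socket per server card and that all 576 nodes use the same chip, which is how the 2,304 and 4,608 endpoints fall out; an actual mix of Xeon and Opteron cards would land somewhere in between.

```python
NODES_PER_ENCLOSURE = 64   # one node per physical server card
ENCLOSURES = 9
# Assumption: each node has a single socket.
CORES_PER_SOCKET = {"Xeon E3-1200 v3": 4, "custom Opteron 4300": 8}

nodes = ENCLOSURES * NODES_PER_ENCLOSURE
low = nodes * min(CORES_PER_SOCKET.values())   # every node a quad-core Xeon E3
high = nodes * max(CORES_PER_SOCKET.values())  # every node an eight-core Opteron

print(nodes, low, high)  # 576 2304 4608
```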

In the test, AMD and Canonical were able to get 75,000 virtual machines working on 320 nodes in the cluster of SM15000 servers in 6.5 hours. That was 1.5 hours quicker than earlier OpenStack tests, according to Canonical. The two companies kept pushing and were able to get 100,000 VMs running on 380 hosts in 11 hours. The system was able to configure the full 576 nodes in the SM15000 machines with a maximum of 168,000 VMs. AMD and Canonical did not say how long this took, but the pace clearly slowed as the operating systems, KVM hypervisors, and VMs were installed and managed: the first 75,000 VMs took 6.5 hours, while the next 25,000 took another 4.5 hours. (It is not clear what workloads, if any, were running on the VMs, by the way. Presumably they were not just static VMs sitting there doing nothing.) If the work scaled linearly from there, it would take about a day to get all 168,000 of those VMs running, with the cluster settling in at deploying roughly 5,000 VMs per hour after the initial burst.
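The extrapolation can be made explicit. This is a minimal sketch using only the two published milestones; "linear" here is taken to mean that the rate of the most recent stretch (75,000 to 100,000 VMs) holds constant all the way to 168,000, which is an assumption, not a measured result.

```python
# Published milestones: (VMs running, hosts, hours elapsed).
MILESTONES = [(75_000, 320, 6.5), (100_000, 380, 11.0)]
TARGET_VMS = 168_000  # maximum reached on all 576 nodes; time not published


def phase_rates(milestones):
    """Average VMs per hour for each stretch between milestones, starting from zero."""
    rates = []
    prev_vms, prev_hours = 0, 0.0
    for vms, _hosts, hours in milestones:
        rates.append((vms - prev_vms) / (hours - prev_hours))
        prev_vms, prev_hours = vms, hours
    return rates


def extrapolate_hours(milestones, target):
    """Hours to reach target if the last stretch's rate stays constant."""
    last_vms, _hosts, last_hours = milestones[-1]
    last_rate = phase_rates(milestones)[-1]
    return last_hours + (target - last_vms) / last_rate


print([round(r) for r in phase_rates(MILESTONES)])       # [11538, 5556]
print(round(extrapolate_hours(MILESTONES, TARGET_VMS), 1))  # 23.2
```

The roughly 23-hour result is what backs the "about a day" estimate; if the rate kept declining as it did between the two milestones, the total would run somewhat longer.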

Whatever the rate, the scale that AMD and Canonical demonstrated in their OpenStack deployment benchmark is a lot more capacity than most production OpenStack users have running in their datacenters, as you can see from our report this week on the feeds and speeds of the OpenStack installed base.

Among the organizations surveyed by the OpenStack Foundation, only about a third of the OpenStack clouds are in production (as distinct from being used for software development and testing or as a proof of concept), and of these, only a little more than 10 percent had 500 nodes or more in their clusters. However, about a fifth of the production OpenStack users had more than 1,000 cores in their clusters, putting them in the same rough range as the nine enclosures tested by AMD and Canonical.

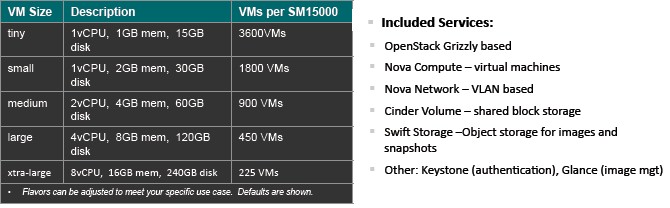

Last October, AMD put out a reference architecture explaining how OpenStack is deployed on top of the SeaMicro iron, and one of the big benefits is that you can deploy the controllers for the Nova compute, Swift object storage, and Cinder block storage services inside the same enclosures as the compute and storage nodes. Disks are not tied to any specific node but are linked to nodes virtually through the Freedom Fabric; in other microserver architectures, disks are sometimes physically tied to nodes, which can result in a poor ratio of disks to compute. Here are the recommended VM densities on the SM15000 from AMD:
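To illustrate why virtual disk mapping helps balance storage against compute, here is a toy model with hypothetical class, method, and node names; it is not AMD's management API, just a sketch of the idea that drives sit in a shared pool and are assigned to nodes on demand rather than being wired one-to-one.

```python
from dataclasses import dataclass, field


@dataclass
class DiskPool:
    """Hypothetical model: disks live in a shared pool and are mapped to nodes
    on demand, instead of each disk being physically tied to one node."""
    free_disks: list
    mapping: dict = field(default_factory=dict)

    def attach(self, node: str, count: int) -> list:
        """Map `count` free disks to `node`; fails if the pool is too small."""
        if count > len(self.free_disks):
            raise ValueError(f"only {len(self.free_disks)} disks free, {count} requested")
        granted = [self.free_disks.pop() for _ in range(count)]
        self.mapping.setdefault(node, []).extend(granted)
        return granted

    def detach(self, node: str) -> None:
        """Return all of a node's disks to the shared pool."""
        self.free_disks.extend(self.mapping.pop(node, []))


# Hypothetical usage: the 64 in-chassis drives shared unevenly between two nodes.
pool = DiskPool(free_disks=[f"disk{i}" for i in range(64)])
pool.attach("swift-storage-01", 20)  # storage-heavy node takes many drives
pool.attach("nova-compute-01", 1)    # compute node takes a single drive
print(len(pool.free_disks))          # 43
```

With fixed one-disk-per-node wiring, the storage node would be stuck with one drive no matter how much capacity it needed; the pool lets the ratio follow the workload.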

For nine enclosures to host 168,000 VMs, these must have been very skinny server slices indeed, something on the order of five times skinnier on CPU and memory than the Tiny instances referred to in the table above. But again, the test was not meant to gauge the performance of the VMs, but rather the speed at which OpenStack could be deployed on the SeaMicro systems. For the DevOps team on a private or public cloud, this matters as much as how well the cloud itself runs applications.
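A quick density calculation shows just how skinny those slices were. This sketch assumes the 168,000 VMs were spread evenly across all 576 nodes and reuses the 2,304 to 4,608 core range estimated earlier; neither the actual distribution nor the exact core count was published.

```python
TOTAL_VMS = 168_000
NODES = 576
CORE_RANGE = (2_304, 4_608)  # estimated bounds: all quad-core vs. all eight-core nodes

vms_per_node = TOTAL_VMS / NODES                          # assumes an even spread
vms_per_core = [TOTAL_VMS / cores for cores in CORE_RANGE]

print(round(vms_per_node))                  # 292
print([round(x, 1) for x in vms_per_core])  # [72.9, 36.5]
```

Even at the high end of the core estimate, that is more than 36 VMs per physical core, far beyond what any CPU-bound workload could use.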