Nvidia Taps Into Generative AI Fervor with Unveiling of AI Foundations Cloud Services

(Source: Nvidia)

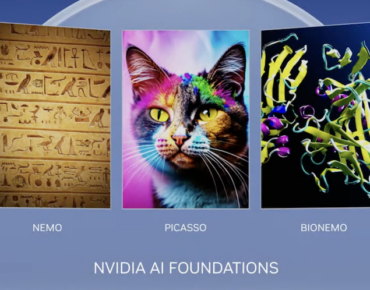

Nvidia is offering a new foundational platform for companies looking to leverage generative AI. The company introduced the new platform called Nvidia AI Foundations at its GTC Developer Conference.

Nvidia Founder and CEO Jensen Huang says we are at an iPhone moment with the mass adoption of generative AI, and the industry needs a foundry, along the lines of famed chip manufacturer TSMC, for the creation of custom generative AI models.

“Generative AI is driving the fast adoption of AI and reinventing countless industries,” said Huang. “Nvidia AI Foundations let enterprises customize foundation models with their own data to generate humanity’s most valuable resources – intelligence and creativity.”

Nvidia AI Foundations is a set of publicly hosted cloud services that helps enterprises create, customize, optimize and deploy custom models for generative AI applications. The services act as the aforementioned foundry for enabling enterprises to train these custom models with proprietary, domain-specific data.

AI Foundations spans three modalities. Manuvir Das, head of enterprise computing at Nvidia, said in a press briefing that the services share the same architecture and building blocks, but each is optimized for a different modality. Picasso is a brand-new service for visual content that generates images, videos, and 3D models from text prompts. The other two services, NeMo and BioNeMo, were announced previously. NeMo is a text-to-text service for creating and running large language models, and BioNeMo is a service for biological research tasks such as generating protein structures.

Nvidia says each of the cloud services includes six elements: pre-trained models, frameworks for data processing, vector databases and personalization, optimized inference engines, APIs, and support from Nvidia experts.

What’s Under the Hood

The AI Foundations platform leverages Nvidia AI Enterprise, the company’s AI suite, and is hosted on the Nvidia DGX Cloud, which the company touts as the fastest way to have your own DGX AI supercomputer by just opening a browser. Each instance has eight H100 or A100 80GB Tensor Core GPUs, for a total of 640GB of GPU memory per node, with multiple instances tightly networked together. DGX Cloud provides dedicated clusters that are available to rent on a monthly basis.
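The per-node memory figure follows directly from the GPU count and the per-GPU capacity. The short Python sketch below reproduces that arithmetic and extends it to several networked instances. The function names and the four-instance example are illustrative assumptions, not anything Nvidia has published.

```python
# Figures quoted in the article for a single DGX Cloud instance.
GPUS_PER_INSTANCE = 8      # eight H100 or A100 GPUs per instance
MEMORY_PER_GPU_GB = 80     # 80GB of memory per GPU


def instance_memory_gb(gpus: int = GPUS_PER_INSTANCE,
                       per_gpu_gb: int = MEMORY_PER_GPU_GB) -> int:
    """Total GPU memory on one instance, in GB."""
    return gpus * per_gpu_gb


def cluster_memory_gb(instances: int) -> int:
    """Aggregate GPU memory across several instances (hypothetical helper)."""
    if instances < 0:
        raise ValueError("instances must be non-negative")
    return instances * instance_memory_gb()


if __name__ == "__main__":
    print(instance_memory_gb())   # 8 * 80 = 640
    print(cluster_memory_gb(4))   # 4 * 640 = 2560
```

The aggregate figure is only a simple sum. How much of that memory a single training job can use depends on how the model is partitioned across GPUs, which the article does not describe.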

The DGX Cloud is currently available through Oracle Cloud Infrastructure (OCI). Nvidia says the OCI Supercluster provides a purpose-built RDMA network, bare-metal compute and high-performance local and block storage that can scale to superclusters of over 32,000 GPUs. Services on Microsoft Azure and Google Cloud Platform will be available in the future.

Picasso

AI imaging systems like OpenAI’s DALL-E and Stable Diffusion have proven to be extremely popular services. Nvidia is jumping into the trend with Picasso, a new service that allows users to create images, videos, and 3D models through cloud APIs. Picasso can train Nvidia Edify foundation models using proprietary data to build apps with natural text prompts that create visual content. Example use cases are product design, digital twins, storytelling and character creation.

Copyright and licensing have been issues with generative AI image platforms, as many have been trained on data from the open internet. For businesses concerned with this, Picasso users can access pre-trained Edify models with fully licensed data.

This is an example of new generative AI capabilities in the Adobe creative suite, powered by Nvidia's Picasso service. (Source: Nvidia)

This focus on responsible image sourcing can be seen in partnerships surrounding Picasso, including those with Shutterstock, Getty Images, and Adobe. Nvidia is working with Getty Images to train custom text-to-image and text-to-video models on Getty’s repository of responsibly sourced images. Getty Images will pay royalties to artists on revenues generated by these models.

Shutterstock is also working with Nvidia to build text-to-3D models using Shutterstock’s proprietary images. These models will soon be available in Shutterstock’s Creative Flow toolkit, along with its TurboSquid 3D modeling tool, which is planned for integration with Nvidia’s Omniverse industrial metaverse platform. Only fully licensed Shutterstock assets will be used for training, and Shutterstock says it will compensate artists via its Contributor Fund.

Adobe founded the Content Authenticity Initiative (CAI) to develop open industry standards for establishing attribution and Content Credentials, according to a statement from Nvidia. CAI adds content credentials to content at the point of capture to pinpoint when content was generated or modified with generative AI. Adobe and Nvidia are among 900 members of the CAI.

Using Picasso, Adobe is training generative AI models with a focus on deep integration into its suite of creative applications like Adobe Firefly, a new family of creative AI models embedded in applications like Photoshop and Illustrator. Currently in beta, Firefly generates images and text effects that are safe for commercial use.

“Adobe and NVIDIA have a long history of working closely together to advance the technology of creativity and marketing,” said Scott Belsky, Adobe’s chief strategy officer and EVP for design and emerging products. “We’re thrilled to partner with them on ways that generative AI can give our customers more creative options, speed their work, and help scale content production.”

NeMo

Unveiled at GTC last September, NeMo supports the entire lifecycle of creating a custom LLM, from gathering training data to model tuning, customization, and deployment. The suite of pre-trained models includes those in the GPT family, and the models range from 8 billion to 530 billion parameters.

The models will be updated with fresh training data, and thanks to information retrieval capabilities, businesses can use real-time proprietary data to enhance their LLMs and boost use cases like market intelligence, enterprise search, chatbots and customer service. One example of this is a model called Inform, which was purpose-built for extracting information from the proprietary databases and in-house systems of enterprises.

Today’s announcement also brings a slew of partnerships, including one with Morningstar, a provider of independent investment insights that is using NeMo to research advanced intelligence services.

“Large language models offer us the ability to collect insightful data from highly complex structured and unstructured content at a larger scale while prioritizing data quality and speed,” said Shariq Ahmad, head of data collection technology at Morningstar. “Our quality framework includes a human-in-the-loop process that feeds into model re-tuning to ensure that we produce increasingly high-quality content. Morningstar is using NeMo in its data collection research and development on how LLMs can scan and summarize information from sources such as financial documents to quickly extract market intelligence.”

BioNeMo

Generative AI is enabling biological research, especially in the area of protein structure prediction and design. BioNeMo is a service offering AI model training and inference to accelerate research and drug discovery. The service was also introduced at GTC last September. It enables researchers to fine-tune generative AI apps on proprietary data, as well as run AI model inference and protein structure visualization directly in a web browser or through APIs that can be integrated with existing applications.

“The transformative power of generative AI holds enormous promise for the life science and pharmaceutical industries,” said Kimberly Powell, vice president of healthcare at Nvidia, in a release. “Nvidia’s long collaboration with pioneers in the field has led to the development of BioNeMo Cloud Service, which is already serving as an AI drug discovery laboratory. It provides pre-trained models and allows customization of models with proprietary data that serve every stage of the drug-discovery pipeline, helping researchers identify the right target, design molecules, and proteins, and predict their interactions in the body to develop the best drug candidate.”

Previously announced models for BioNeMo include the MegaMolBART generative chemistry model, ESM1nv protein language model and OpenFold protein structure prediction model.

There are also six new open source models available in BioNeMo early access. AlphaFold2, developed by DeepMind, is a deep learning model that predicts the structure of a protein. DiffDock helps researchers learn how a drug molecule will bind with a target protein and predicts 3D orientation and docking interactions. ESMFold is a protein structure prediction model that uses Meta AI’s ESM2 protein language model to estimate the 3D structure of a protein based on a single amino acid sequence. ESM2 itself is available and can be used for inferring machine representations of proteins for tasks like protein structure prediction, property prediction, and molecular docking. MoFlow is a generative chemistry model for molecular optimization that creates molecules from scratch. Finally, ProtGPT-2 is a language model for generating novel protein sequences.

Among the early adopters of BioNeMo is biotech firm Amgen, which is using the service to advance its R&D.

“BioNeMo is dramatically accelerating our approach to biologics discovery,” said Peter Grandsard, executive director of biologics therapeutic discovery at the Center for Research Acceleration by Digital Innovation at Amgen. “With it, we can pre-train large language models for molecular biology on Amgen’s proprietary data, enabling us to explore and develop therapeutic proteins for the next generation of medicine that will help patients.”

Availability

The Picasso service is in private preview, and those interested can sign up to be notified when it is available. The NeMo service is currently in early access, as is BioNeMo, for which an application form is available.