Graphcore Expanding Its AI Compute Platform Sales with Its First Channel Partner Program

AI compute platform vendor Graphcore has launched its first formal global channel partner program to promote and boost sales of its AI processors and blade computing products.

The all-new Graphcore Elite Partner Program formalizes the company's earlier, less structured work with several distribution partners to promote its AI products, including its Intelligence Processing Unit (IPU).

The company’s first 16 channel partners are Dell Technologies, 2CRSi, Atos, Boston Limited, BSI, Digital China, Inspur, Lambda, Macnica/Cytech, Meadowgate Technologies, Megazone, OCF, Penguin Computing, Tech Data Europe, Tech Data US, and Wildflower International. The partners include technology distributors and resellers, and additional partners are expected to be named later.

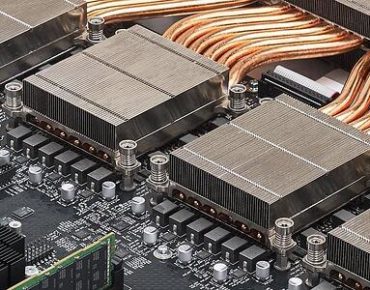

Graphcore's AI products include the IPU-Machine M2000, a 1U plug-and-play machine intelligence compute blade built for scale-out. It delivers one petaflops of machine intelligence compute and includes integrated networking optimized for AI scale-out. The IPU-Machine M2000 is powered by four Graphcore 7nm Colossus Mk2 GC200 IPU processors and runs on the company's Poplar software stack.

"This partner program validates Graphcore's belief that customers and researchers working on leading-edge AI projects require a processor built from the ground up specifically for AI workloads," Victoria Rege, the director of alliances and strategic partnerships for Graphcore, told EnterpriseAI. "With this network of some of the best-known channel partners, technology distributors, and resellers, Graphcore can fulfill this demand and help its customers achieve the next breakthroughs in machine intelligence."

The formal Elite Partner Program benefits customers because it demonstrates the depth of Graphcore's partner relationships and their commitment to providing high-quality support, training, and customer service for Graphcore's products, she said. "Behind the program, a lot of work has been done to develop partner communications channels, resources, dedicated support on the Graphcore side, and much more. This is the right point in Graphcore's development to offer a formal partner program. We are at a critical mass of customers and, crucially, of demand from potential customers."

The program significantly expands Graphcore's earlier distribution network and comes at a time when its products are maturing, said Rege. "Our Mk1 products have now been available for at least a year, so benchmarks and customer case studies are showing the real-world advantage that the IPU offers over other AI compute technology, which is driving demand. The announcement of Graphcore's Mk2 IPU-M2000 system has further increased global interest in the company's products."

Through the fledgling partner program, many customers will gain the reassurance of working with partners they already know and work with today, she added.

Graphcore will be shipping its Mk2 IPU-M2000 systems in Q4.

‘Another Concrete Step Forward’

Karl Freund, senior analyst for machine learning and HPC for analyst firm Moor Insights & Strategy, called Graphcore’s move to create a global partner program a good one. “It’s another concrete step forward by Graphcore to establish and grow its distribution channels for the IPU, especially for the new IPU-Machine,” said Freund. “The flexible deployment model of the Graphcore appliance will be attractive to sellers and buyers alike.”

Graphcore, which was founded in 2016, has raised more than $450 million in funding so far and is headquartered in Bristol, UK.

For scale-up AI compute, Graphcore’s IPU-POD64 comprises 64 IPU processors across 16 IPU-Machine M2000s, which can work in parallel or independently, serving multiple users and tasks. Larger, interconnected IPU-POD configurations of up to 64,000 IPU processors are capable of delivering up to 16 exaflops of compute for hyperscale users.
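The scaling figures quoted above are consistent with the per-blade number given earlier. The rough check below assumes that each GC200 IPU contributes an equal quarter of an M2000's one petaflops. That per-IPU figure is derived here and is not stated in the article.

```latex
\[
\frac{1\ \text{PFLOPS per M2000}}{4\ \text{IPUs per M2000}} = 250\ \text{TFLOPS per IPU}
\]
\[
\text{IPU-POD64:}\quad 16 \times 4 = 64\ \text{IPUs}, \qquad 64 \times 250\ \text{TFLOPS} = 16\ \text{PFLOPS}
\]
\[
\text{Largest IPU-POD:}\quad 64{,}000 \times 250\ \text{TFLOPS} = 16{,}000\ \text{PFLOPS} = 16\ \text{EFLOPS}
\]
```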