Fighting Financial Cybercrime: A Big Memory, Graph Analytics Strategy

Systems in the financial services industry generate multiple terabytes of data a day, a staggering volume to process in search of cyberthreats. Trovares, a high performance "property graph" analytics company with roots in supercomputing, has partnered with HPE and its Superdome Flex memory-driven servers on a cybersecurity capability the companies say “routinely” runs near-real-time workloads on 24TB-capacity systems, with scale-up potential to 96TB of memory.

Trovares’ xGT software “was designed to scale to very large data sets that other vendors cannot address,” said CEO James Rottsolk, a co-founder and CEO (1987-2006) of supercomputing pioneer Cray Inc. (recently acquired by HPE). While conventional graph analytics tools post benchmarks up to two times faster than conventional tools, Trovares claims xGT property graph analytics handles billions of records that would cause conventional graph tools to stall.

Financial cyber threats include network malware intrusions that reside in data “kind of quiescent,” Rottsolk said; only later do they begin their work of extracting financial data to be used by cybercrooks. But searching through the daily data deluge for such threats is beyond the capabilities of conventional compute systems, leaving analysts to process only samples of their data sets.

“The large institutions deal with immense amounts of data and it continues to grow,” he said. “The tools they’ve been using for analysis for things like malware and network intrusion detection… don’t scale to address all those terabytes that they see each day. Rather than taking a small slice of that data, which means they’re likely to miss problems that spread across all the data, we’re able to ingest all that data and very rapidly answer complex data searches.”

Seattle-based Trovares had been funded since 2012 by a research arm inside the U.S. Department of Defense. Six months ago, it announced venture funding of $2 million and plans to enter commercial markets. The company joined with HPE under the latter’s Partner Ready Program, and the two companies have been developing the xGT-Superdome Flex capability jointly.

“We all know about the data growth situation,” said Bret Gibbs, HPE mission critical systems product manager. “It’s an emerging market…, we’re looking at several use cases, one of which is where you have data sets that approach the petabyte range, and the ability to load as much of that data into memory and analyze it and get near real time insights, that exhausts the capabilities of today's systems. So as that data growth continues and these businesses look to gain new insights and time-to-insight is a key metric, we’ll continue to evaluate that capability.”

In the FSI cybersecurity workload scenario, Gibbs said log analysis of network traffic is “an area rife with possible cyber threat patterns,” one that today is manually handled by data scientists. “What we’re trying to do is shrink the time window from when the potential threat happens to when it's detected, reduce the case of threat detection scan where you’re parsing these logs…. There’s a high cost to not checking all of your data, and the amount of data that has to be parsed is really conducive to large, in-memory compute.”

HPE’s Superdome Flex server line utilizes technology from SGI, which HPE acquired in 2016. Gibbs said the servers are scale-up systems starting as small as four sockets with 768 gigabytes of memory and up to 32 sockets with memory capacity of 48TB. He said scaling up, rather than scaling out, “avoids some of the latency issues introduced in a traditional cluster system, where you’re doing hops in between the compute nodes to get to the memory.” Communication within racks of four-socket servers is handled by the NUMAlink interconnect, developed by SGI for its shared memory ccNUMA systems.

Gibbs said the development work with Trovares is part of HPE’s memory-driven computing strategy, in which memory, not processing, is at the center of the computing scheme. He said HPE is experimenting in its sandbox lab with connecting two racks of 32-socket Superdome Flex servers, for memory capacity of 96TB. “It’s not a production capability, we’re working with customers and our ISV partners on testing out the limits of scaling their applications to tap into this large memory and compute footprint, beyond the 32 sockets and beyond the 48TB that we have today.”

Rottsolk said he thinks the Trovares-HPE capability can scale virtually without limit. “We run routinely on 1152 cores on a Superdome Flex system that we use for a lot of benchmarking. We haven’t run on a 48TB system, but we don’t think it would be a problem at all. It’s highly parallel code that utilizes a number of supercomputing techniques, a lot of optimizations, only lightweight locking mechanisms and multithreading. We don’t see a problem of scaling to the larger systems HPE is talking about.”

He said xGT software is application agnostic and can run in a cloud or on prem. “We run on anything from your laptop on up, but given the fact that we like to take advantage of large memory in a system, HPE has some technology that we’ve found fits our product almost perfectly.”

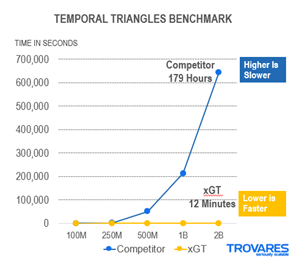

He said a property graph is an attributed, multi-relational graph in which data is organized as nodes, relationships and properties, and in which both the edges and vertices can have any number of key/value properties associated with them. The xGT platform is a highly parallel graph analytics tool designed to maximize utilization of all available processors. It uses a framework called “symmetric multiprocessor systems” to perform trillions of scans of in-memory graphs. That approach helps spot complex patterns, often in minutes, according to Trovares. The company offered the Temporal Triangles Benchmark results shown here but didn’t identify the competitor.
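To make the property graph idea concrete, here is a minimal Python sketch of vertices and edges that each carry key/value properties, plus a brute-force search for a time-ordered triangle in network flow records. It is an illustration only and is not xGT's API or algorithm. The `PropertyGraph` class, the `temporal_triangles` function, the `time` and `bytes` properties and the sample addresses are all hypothetical, and the triangle definition used here (three hops in strictly increasing time order that close within a fixed window) is an assumption, not the benchmark's official definition.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Any, Dict, List, Tuple


@dataclass
class PropertyGraph:
    """Toy in-memory property graph (hypothetical, not xGT's data model)."""
    # vertex id -> key/value properties
    vertices: Dict[str, Dict[str, Any]] = field(default_factory=dict)
    # source vertex id -> list of (target vertex id, edge properties)
    out_edges: Dict[str, List[Tuple[str, Dict[str, Any]]]] = field(
        default_factory=lambda: defaultdict(list)
    )

    def add_vertex(self, vid: str, **props: Any) -> None:
        self.vertices[vid] = props

    def add_edge(self, src: str, dst: str, **props: Any) -> None:
        self.out_edges[src].append((dst, props))


def temporal_triangles(g: PropertyGraph, window: int) -> List[Tuple[str, str, str]]:
    """Find cycles a->b->c->a whose edge times strictly increase and whose
    last edge occurs no more than `window` time units after the first.
    Assumed definition for illustration; brute force, not tuned for scale."""
    found = []
    for a in list(g.out_edges):
        for b, e1 in g.out_edges.get(a, []):
            for c, e2 in g.out_edges.get(b, []):
                if len({a, b, c}) < 3 or e2["time"] <= e1["time"]:
                    continue  # need three distinct vertices, later second hop
                for back, e3 in g.out_edges.get(c, []):
                    closes = back == a
                    if closes and e2["time"] < e3["time"] <= e1["time"] + window:
                        found.append((a, b, c))
    return found


# Hypothetical network-flow records: vertices are hosts, edges are connections.
g = PropertyGraph()
g.add_vertex("10.0.0.5", kind="workstation")
g.add_vertex("10.0.0.9", kind="server")
g.add_vertex("203.0.113.7", kind="external")
g.add_edge("10.0.0.5", "10.0.0.9", time=100, bytes=4200)
g.add_edge("10.0.0.9", "203.0.113.7", time=130, bytes=98000)
g.add_edge("10.0.0.9", "10.0.0.5", time=90, bytes=512)  # unrelated earlier flow
g.add_edge("203.0.113.7", "10.0.0.5", time=150, bytes=64)

triangles = temporal_triangles(g, window=60)
print(triangles)
```

With a 60-unit window the three flows at times 100, 130 and 150 form one match; shrinking the window to 40 excludes it. The nested loops are fine for a handful of edges but grow quickly with graph size, which hints at why this kind of pattern search over billions of records calls for parallel, in-memory tooling.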

“Graph analytics is growing as a tool in the market,” Rottsolk said. “Property graph is also growing, but the leading graph companies operate with smaller data sets and don’t scale up…. They can go up to a couple of hundred million edges, but they don’t get into the terabyte area easily.”