Attacking the AI Trust Gap: ‘FICO-like’ Risk Scoring for Machine Learning Models

source: CognitiveScale

Implementing machine learning is a minefield and a slog. Even after IT managers put in place the accelerated computing infrastructure AI requires, after data scientists and business managers agree on the analytics projects the organization needs, and after the data science team selects algorithms, builds models, prepares data, runs prototypes and makes everything operational, there's still a real possibility that business unit managers will reject ML recommendations, either for fear of bias in the model or simply because they don't understand how the system arrives at its decisions.

It’s the AI Trust Gap, and it’s a particularly difficult hurdle for companies without FAANG-class compute and data science resources. We’ve written about new attempts to close the trust gap, including management strategy recommendations (“How to Overcome the AI Trust Gap: A Strategy for Business Leaders”) and a product launch last month by IBM (“Explaining AI Decisions to Your Customers: IBM Toolkit for Algorithm Accountability”).

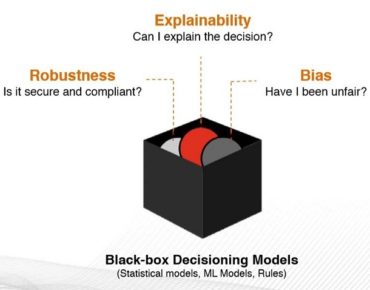

Now CognitiveScale has added Certifai to its Cortex line of enterprise AI software. According to the company, Certifai generates a “FICO-like” composite risk score based on the “AI Trust Index” that CognitiveScale developed with AI Global. The Austin-based company said it's the first AI vulnerability detection product that tests for multiple factors simultaneously: fairness, explainability, robustness, data rights and compliance.
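The article doesn't say how the five factor scores roll up into a single number. Purely as an illustration of what a composite, credit-score-style index can look like, the Python sketch below assumes each factor has already been scored on a 0 to 1 scale and combines them with a weighted mean scaled to 0 to 100. The factor keys, the weights and the weighted-mean rule are all hypothetical and are not CognitiveScale's published method.

```python
from typing import Dict

# Hypothetical factor keys mirroring the five areas Certifai is said to test.
FACTORS = ("fairness", "explainability", "robustness", "data_rights", "compliance")


def composite_trust_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-factor scores in [0, 1] into one 0-100 score via a weighted mean.

    Illustrative only: the weighting scheme is an assumption, not a vendor formula.
    Factors missing from `weights` get weight 0.
    """
    missing = [f for f in FACTORS if f not in scores]
    if missing:
        raise ValueError(f"missing factor scores: {missing}")
    for f in FACTORS:
        if not 0.0 <= scores[f] <= 1.0:
            raise ValueError(f"{f} score must be in [0, 1]")
    total_weight = sum(weights.get(f, 0.0) for f in FACTORS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(weights.get(f, 0.0) * scores[f] for f in FACTORS)
    # Scale to 0-100 and round to one decimal place for display on a dashboard.
    return round(100.0 * weighted / total_weight, 1)


if __name__ == "__main__":
    example_scores = {"fairness": 0.8, "explainability": 0.6, "robustness": 0.7,
                      "data_rights": 1.0, "compliance": 0.9}
    equal_weights = {f: 1.0 for f in FACTORS}
    print(composite_trust_score(example_scores, equal_weights))
```

A weighted mean keeps the composite easy to explain to non-specialists, but it also lets a strong score in one area mask a weak one; a real index might instead cap the total when any single factor falls below a threshold.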

Cortex Certifai “automatically detects and scores vulnerabilities in almost all black box models without requiring access to model internals,” the company said. The product is available as a stand-alone application and as a container-based Kubernetes application on major cloud platforms, and CognitiveScale has worked on it for two-plus years under a development project with the Canadian Government. According to Manoj Saxena, executive chairman of CognitiveScale, it's now being implemented by Jackson National Life Insurance, a subsidiary of Prudential plc, and by a “top five” global bank.

“Jackson’s vision is to transform customer and advisor engagement with technology through a combination of APIs, platforms and ecosystems,” said Dev Ganguly, CIO, Jackson National. “We are excited to be working with CognitiveScale to ensure our AI systems have the right governance and controls around explainability, bias and robustness.”

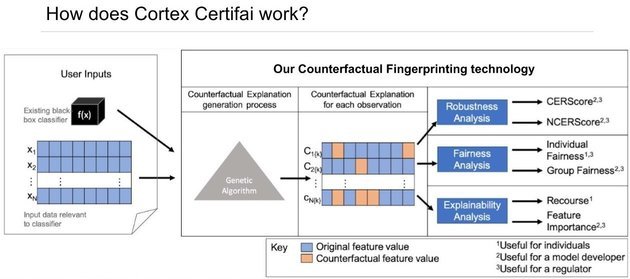

Drawing on a combination of data science techniques (a genetic algorithm and “counterfactual explanations” to test for “boundary conditions”), Certifai avoids the need to access the ML model internals, a sensitive issue for customers who regard their models as precious IP.

“We tell the customer: you don’t have to tell us how the model works, just give us a data set that goes into the model and the outcome from the model,” Saxena said, “and by running those data sets in slightly different forms hundreds of thousands of times, in some cases millions of times…, we start generating a ‘fingerprint’ of exactly where the boundary conditions are, where the model turns from being biased to unbiased or explainable to non-explainable.”
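To make the black-box idea concrete, here is a minimal Python sketch of a generic genetic-algorithm counterfactual search. It is not Certifai's implementation; the function names, parameters, bounds and toy classifier are hypothetical. It only calls the model's prediction function, never its internals, and evolves perturbed copies of an input until it finds the nearest point where the prediction flips. That point marks one of the “boundary conditions” Saxena describes, and its distance from the original input gives a rough sense of how robust that prediction is.

```python
import random
from typing import Callable, List, Optional, Sequence, Tuple

Vector = List[float]


def l2(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def genetic_counterfactual(
    predict: Callable[[Sequence[float]], int],  # black-box model: features -> label
    x0: Sequence[float],                         # input to explain
    bounds: Sequence[Tuple[float, float]],       # hypothetical per-feature valid range
    pop_size: int = 200,
    generations: int = 100,
    mutation_rate: float = 0.1,
    seed: int = 0,
) -> Optional[Tuple[Vector, float]]:
    """Return (closest input found whose label differs from predict(x0), its distance)."""
    rng = random.Random(seed)
    original = predict(x0)

    def flips(x: Sequence[float]) -> bool:
        # Constraint: a candidate only counts if it changes the model's decision.
        return predict(x) != original

    # Seed the population with random points that already cross the decision boundary.
    samples = ([rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size * 5))
    population = sorted((p for p in samples if flips(p)), key=lambda p: l2(p, x0))[:pop_size]
    if not population:
        return None  # no counterfactual found in the sampled region

    for _ in range(generations):
        # Selection: the half of the population closest to x0 becomes the parents.
        parents = population[: max(1, len(population) // 2)]
        children: List[Vector] = []
        for _ in range(pop_size):
            a, b = rng.choice(parents), rng.choice(parents)
            # Uniform crossover: each feature is taken from one parent at random.
            child = [ai if rng.random() < 0.5 else bi for ai, bi in zip(a, b)]
            # Mutation: add Gaussian noise to some features, clipped to their bounds.
            for i, (lo, hi) in enumerate(bounds):
                if rng.random() < mutation_rate:
                    child[i] = min(hi, max(lo, child[i] + rng.gauss(0.0, 0.1 * (hi - lo))))
            if flips(child):
                children.append(child)
        # Keep the closest valid candidates; parents are retained, so the best never gets worse.
        population = sorted(parents + children, key=lambda p: l2(p, x0))[:pop_size]

    best = population[0]
    return best, l2(best, x0)


if __name__ == "__main__":
    # Toy black box: approve (1) when the two features sum to more than 1.0.
    clf = lambda x: int(x[0] + x[1] > 1.0)
    result = genetic_counterfactual(clf, [0.2, 0.2], [(0.0, 1.0), (0.0, 1.0)])
    print(result)
```

For this toy classifier the true nearest boundary point is (0.5, 0.5), about 0.424 away from the input, so the search should land close to it. Running many such searches over many inputs, and comparing the typical counterfactual distances across groups, is one way a score could be built up without ever opening the model.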

He said the AI Trust Index score is delivered in dashboard form so that end users with varying technical backgrounds can access and drill into the results as needed. Certifai runs can take “six to eight hours or as many as tens of hours,” depending on the compute horsepower used. “Since it’s a Kubernetes application, I can run this in the cloud and connect it to GPUs or TPUs and reduce that time.”