Dell Z9500 Switch Pushes 40GbE Density Up, Costs Down

The increasing adoption of 10 Gb/sec Ethernet between servers and out to storage arrays is driving the need for more bandwidth higher up in the aggregation layer of the network. To many, 40 Gb/sec Ethernet switches are still too expensive, and all vendors are being pushed to deliver both 40 Gb/sec and 100 Gb/sec switches at something closer to the same price per unit of bandwidth as a 10 Gb/sec switch.

In a few weeks, Dell will start shipping its Z9500 distributed core switch, a kicker to the Z9000 box that the formerly independent Force10 Networks launched three years ago as its big splash for 40 Gb/sec Ethernet in the datacenter. The new switch has more ports, burns less power per port, and costs significantly less per port.

Arpit Joshipura, vice president of product management for Dell Networking, tells EnterpriseTech that ahead of the Z9000 launch three years ago, it cost on the order of $7,500 per port to buy a 40 Gb/sec switch. With the Z9000, which has 32 ports running at 40 Gb/sec in a 2U enclosure, the cost per port dropped to around $5,500 at list price, and the street price was probably on the order of $2,700 per port if customers brought multiple vendors in on the deal, according to Joshipura. The Z9000 switch has two Trident+ ASICs from Broadcom as its main switching brain, plus some FPGAs to do other jobs. It has 2.56 Tb/sec of switching bandwidth, and up to eight of these switches can be configured into a spine and fed out to many leaf switches in the tops of server and storage racks to support as many as 24,000 servers linked together at 10 Gb/sec with 3:1 oversubscription on the leaves. The Z9000 cost $175,000 at list price.
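The Z9000 figures are internally consistent, and a quick sketch makes the arithmetic explicit. It assumes that the 2.56 Tb/sec switching figure counts traffic in both directions (the usual spec-sheet convention) and that every port is priced at the roughly $5,500 list figure; the helper names are illustrative, not from any Dell tool.

```python
# Back-of-the-envelope check of the Z9000 figures quoted above.
# Assumption: "switching bandwidth" counts both directions (full duplex).

def switching_bandwidth_gbps(ports: int, port_speed_gbps: int) -> int:
    """Aggregate full-duplex switching capacity in Gb/sec."""
    return ports * port_speed_gbps * 2

def chassis_list_price(ports: int, price_per_port: float) -> float:
    """Total list price if every port carries the same per-port price."""
    return ports * price_per_port

z9000_bw = switching_bandwidth_gbps(ports=32, port_speed_gbps=40)
z9000_list = chassis_list_price(ports=32, price_per_port=5_500)

print(f"Z9000 switching bandwidth: {z9000_bw / 1000:.2f} Tb/sec")  # 2.56
print(f"Z9000 list price at $5,500/port: ${z9000_list:,.0f}")       # $176,000
```

The result, $176,000, sits close to the $175,000 list price quoted above, which suggests the per-port figure is roughly the chassis price divided by 32.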

The new Z9500 that starts shipping in April comes in a 3U enclosure and has 132 ports running at 40 Gb/sec, a feat made possible by switching to multiple Trident-II ASICs from Broadcom plus a number of FPGAs to accelerate certain functions that need to remain malleable. Joshipura doesn't want to say how many Broadcom ASICs are in the box, but he did say that the machine has 10.4 Tb/sec of switching bandwidth and a port-to-port latency of between 600 nanoseconds and 1.2 microseconds, and that is bound to catch some attention. So is the port density per unit of rack space, which is nearly three times that of the Z9000 it replaces. With splitter cables, the Z9500 can pack 528 ports running at 10 Gb/sec into that chassis. Another interesting new feature of the Z9500 is a pay-as-you-grow option that lets customers activate 36, 84, or 132 ports with a "golden screwdriver" software upgrade.
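The density claims can be checked the same way. This minimal sketch uses only the port counts and chassis heights given above, plus the assumption that each splitter cable breaks one 40 Gb/sec port into four 10 Gb/sec ports:

```python
# Port-density arithmetic for the Z9000 and Z9500, using the port counts
# and chassis heights from the article.

BREAKOUT_LANES = 4  # assumption: one 40 Gb/sec port splits into four 10 Gb/sec ports

def ports_per_rack_unit(ports: int, rack_units: int) -> float:
    return ports / rack_units

z9000_density = ports_per_rack_unit(32, 2)     # 16.0 ports per U
z9500_density = ports_per_rack_unit(132, 3)    # 44.0 ports per U
density_gain = z9500_density / z9000_density   # 2.75, i.e. "nearly three times"

# 10 Gb/sec ports available at each pay-as-you-grow tier via splitter cables.
breakout_by_tier = {active: active * BREAKOUT_LANES for active in (36, 84, 132)}

print(f"Density gain: {density_gain:.2f}x")
print(f"10 Gb/sec ports per tier: {breakout_by_tier}")
```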

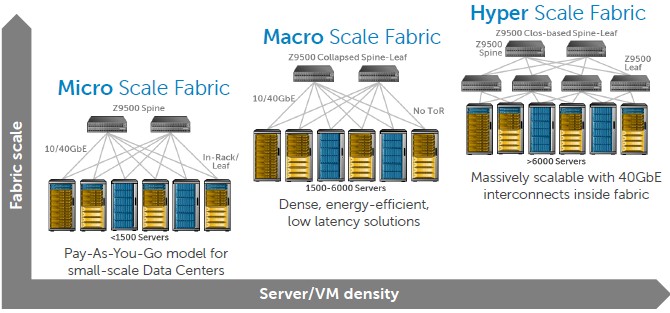

What Dell is really pitching with the Z9500 is the ability to start out small with a network and scale it up massively if need be. At what it calls the microscale fabric level, with fewer than 1,500 servers in the network, you have two Z9500s acting as a spine and you put leaf switches in the tops of racks. If you want to go to what Dell calls macroscale, which is somewhere between 1,500 and 6,000 servers, and assuming you have low-latency needs, you put multiple Z9500s in the network, using them as a collapsed leaf-spine network. You can use a pair of Z9500s as a spine and four other Z9500s as leaves in what is called a Clos-based leaf-spine network, and with a 3:1 oversubscription ratio, that gets you to above 6,000 servers.
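To see how a two-spine microscale fabric is sized, here is a simplified sketch of a two-tier leaf-spine calculation. The leaf configuration (48 server ports at 10 Gb/sec, two 40 Gb/sec uplinks to each spine) and the use of the 36-port base license on the spines are hypothetical choices for illustration, not Dell's reference design:

```python
# Sizing a two-tier leaf-spine (Clos) fabric under hypothetical design rules:
#   * every leaf connects to every spine with the same number of uplinks
#   * each spine's active ports therefore cap the number of leaves
from dataclasses import dataclass

@dataclass
class LeafSpineDesign:
    spines: int
    ports_per_spine: int        # active 40 Gb/sec ports on each spine
    uplinks_per_spine: int      # 40 Gb/sec links from each leaf to each spine
    server_ports_per_leaf: int  # server-facing ports on each leaf
    server_speed_gbps: int = 10
    uplink_speed_gbps: int = 40

    def max_leaves(self) -> int:
        return self.ports_per_spine // self.uplinks_per_spine

    def max_servers(self) -> int:
        return self.max_leaves() * self.server_ports_per_leaf

    def oversubscription(self) -> float:
        """Leaf downlink bandwidth divided by leaf uplink bandwidth."""
        down = self.server_ports_per_leaf * self.server_speed_gbps
        up = self.spines * self.uplinks_per_spine * self.uplink_speed_gbps
        return down / up

# Hypothetical: two spines on the 36-port base license, 48-port leaves.
design = LeafSpineDesign(spines=2, ports_per_spine=36,
                         uplinks_per_spine=2, server_ports_per_leaf=48)
print(design.max_leaves(), design.max_servers(), design.oversubscription())
```

Under these assumptions the fabric tops out at 18 leaves and 864 servers at 3:1, inside the microscale range; activating more spine ports raises the leaf count linearly without changing the oversubscription ratio.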

The Clos-based leaf-spine setup can, in fact, scale up to 100,000 servers in a single network, as Dell has done for existing customers (which it will not name, but they are cloud providers). The number of switches needed depends on the oversubscription ratio between the leaves and the spines. Depending on the customer, hyperscale networks typically have oversubscription ratios that range from 6:1 to 10:1, but Dell says it can get that down to 3:1 in a cost-effective manner.
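The link between oversubscription and switch count comes down to how many uplinks each leaf needs. This sketch assumes a hypothetical leaf with 48 server ports at 10 Gb/sec and 40 Gb/sec uplinks; it is not tied to any particular Dell leaf switch:

```python
import math

def uplinks_needed(server_ports: int, server_speed_gbps: int,
                   ratio: float, uplink_speed_gbps: int = 40) -> int:
    """Whole uplinks a leaf needs to stay at or below `ratio`:1."""
    downlink_gbps = server_ports * server_speed_gbps
    required_uplink_gbps = downlink_gbps / ratio
    # Round up: a leaf cannot use a fraction of a port.
    return math.ceil(required_uplink_gbps / uplink_speed_gbps)

for ratio in (3, 6, 10):
    print(f"{ratio}:1 -> {uplinks_needed(48, 10, ratio)} x 40 Gb/sec uplinks")
```

For this leaf, 3:1 takes four uplinks while 6:1 takes two, and at 10:1 rounding up to whole ports still requires two. Every extra uplink consumes a spine port, which is why a lower ratio means more spine capacity, and more switches, for the same server count.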

"The reason is that a lot of the traffic on the network is east-west and it is not user traffic, meaning you don’t have TCP retransmits that cause the packets to be lost due to blocking," says Joshipura. "This is just server-generated traffic like Memcached, Hadoop, or virtual desktop infrastructure. The virtualized fabric is expecting the Layer 3 fabric to be connected anyway, and there is a lot of pressure to reduce the oversubscription. It depends on how much excess capacity you want in the network for rack growth."

The Z9500 will be priced at the market level, which is around $2,000 per 40 Gb/sec port at list price for the hardware. In a base configuration with Active Fabric Controller software included, that comes to $136,000. (This is presumably for a Z9500 with 36 ports activated.) That is list price, of course, and it is not uncommon to see a 50 percent discount in competitive deals. A 10 Gb/sec port has a list price of between $1,000 and $1,200 today, and on the street it goes for about $500 per port in a bare-bones chassis. The point is that Dell is offering four times the bandwidth at twice the price per port, and that is a reasonable deal. Given this, in a lot of cases it will make sense to buy the 40 Gb/sec switches now and use splitter cables if you really only need 10 Gb/sec ports right now. At a discounted street price of around $1,000 per 40 Gb/sec port, you get each 10 Gb/sec port for around $250 this way.
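The pricing argument reduces to a few lines of arithmetic. The constants are the list price, discount, and street prices cited in the paragraph above; the 50 percent discount is the article's example of a competitive deal, not a fixed Dell price:

```python
LIST_PER_40G_PORT = 2_000   # Z9500 list price per 40 Gb/sec port
DISCOUNT = 0.50             # competitive-deal discount cited in the article
STREET_PER_10G_PORT = 500   # street price of a bare-bones 10 Gb/sec port
BREAKOUT_LANES = 4          # one 40 Gb/sec port splits into four 10 Gb/sec ports

street_per_40g = LIST_PER_40G_PORT * (1 - DISCOUNT)     # 1000.0
per_10g_via_breakout = street_per_40g / BREAKOUT_LANES  # 250.0

def dollars_per_gbps(price: float, speed_gbps: int) -> float:
    return price / speed_gbps

print(dollars_per_gbps(street_per_40g, 40))       # 25.0
print(dollars_per_gbps(STREET_PER_10G_PORT, 10))  # 50.0
```

On these numbers, a discounted 40 Gb/sec port costs about half as much per Gb/sec as a bare-bones 10 Gb/sec port, and each split-out 10 Gb/sec port costs half as much as a native one.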

On the software front, Dell is kicking out the first release of its Active Fabric Controller software. This software, which runs as an appliance on an x86 server, has been created to interface with the OpenStack cloud controller and its "Neutron" virtual networking plug-in. Active Fabric Controller discovers the network elements and their topology and can do zero-touch deployment, finding all of the Layer 4 through 7 services on the network. It is aimed less at network admins than at cloud admins who want to virtualize networks and expose services over the fabric to systems and applications. Active Fabric Controller 1.0 will also be available in April; pricing had not been set as EnterpriseTech went to press.

"We are seeing three stacks emerging: The Microsoft stack, the VMware stack, and the OpenStack stack," says Joshipura, and he was careful to draw a distinction between Active Fabric Manager and Active Fabric Controller. Dell announced integration with VMware's ESXi hypervisor, vCenter management console, and NSX virtual networking last summer with the launch of Active Fabric Manager, which is a tool for network admins to automate network deployments and manage physical and virtual networks. Dell is working with Microsoft to extend Active Fabric Manager to Windows Server, the Hyper-V hypervisor, and the System Center console. It is not clear if Dell will do an Active Fabric Controller for VMware or Microsoft environments.