Facebook Pushes Future of Efficient Datacenters and Apps

When the data center is literally the engine for your business, as it is with Facebook, Google, Yahoo, Amazon, and a slew of hyperscale data center operators, you have to do everything you can to try to make the data center and the applications it supports more efficient. Every penny saved is another penny that drops to the bottom line.

Facebook is a relatively young company, and given that it has experienced exponential growth over the past decade to reach its 1.15 billion users worldwide, it has had to take a much more proactive approach than the typical large enterprise. That said, Facebook has many lessons to teach the IT departments of the world, and it has decided, unlike Google and Amazon, to share many of the ideas behind its software and hardware engineering to help others become more efficient.

The Open Compute Project, founded three years ago to open source its server, storage, and data center designs, is one example of The Social Network's openness. So is a recent report issued through the Internet.org consortium, which Facebook started up with Ericsson, MediaTek, Nokia, Opera Software, Qualcomm, and Samsung back in August. All of these companies – and many others to be sure – want to bring access to the Internet to the 5 billion people who do not have that access today. And, as the Internet.org report makes very clear, that cannot happen with the current state of data centers, networks, and end user devices because of power and cost constraints.

"While the current global cost of delivering data is on the order of 100 times too expensive for this to be economically feasible, we believe that with an organized effort, it is reasonable to expect the overall efficiency of delivering data to increase by 100x in the next 5–10 years," the report, which was written by Facebook, Ericsson, and Qualcomm, states hopefully.

Facebook has hope because of the pretty impressive progress it has made in a few short years in making its data centers cost less money (in terms of infrastructure costs as well as operational costs) and its applications run more efficiently. This being EnterpriseTech, the advances in server, storage, and data center design are front and center, but as Facebook correctly points out, the hard work that it has done in creating software infrastructure to boost the speed of the PHP software that is the front end for its social application, as well as the major tweaks it has made to Hadoop and other parts of its back-end stack, is equally important. While it is important to be optimistic, it is also necessary to admit that Facebook has perhaps found the low-hanging fruit, and getting a 100x increase in the efficiency of delivering data over networks out to end user devices is going to take an enormous amount of engineering and talent.

This is precisely why Facebook created the Open Compute Project and is a member of the Internet.org consortium. It knows that it cannot do this alone, any more than any one vendor is going to be able to get an exaflops supercomputer together that can fit in a 25 megawatt power envelope. In fact, the same approaches to get to exascale may be needed for Facebook to reach its goals. Or, even more ironically, the things that Facebook and other hyperscale Web application operators come up with to scale to billions of users may be the foundation of technologies that get commercialized as exascale supercomputers.

Stranger things have happened in the IT sector.

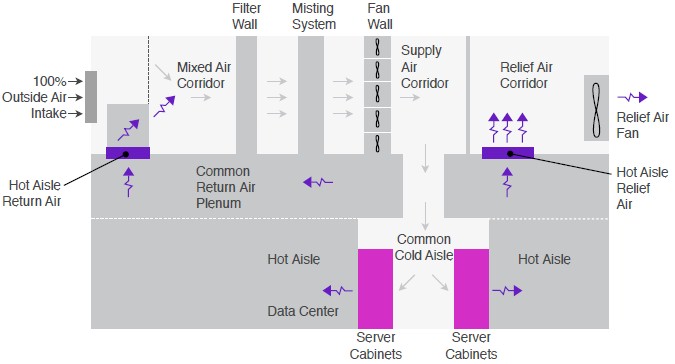

Facebook is quite proud of its three data centers, which have delivered increasing efficiency at both the server and the data center level. Its first data center, in Prineville, Oregon, opened in April 2011 and was 38 percent more energy efficient than the co-location facilities Facebook had been operating in before the homegrown data center opened. The data center was also 24 percent less expensive. Facebook has another data center in Forest City, North Carolina, and its latest is in Lulea, Sweden. All three centers have a power usage effectiveness (PUE) – the ratio of the total energy brought into the data center to the energy used to power the servers, switches, and storage – on the order of 1.04 to 1.09. These centers use outside air to cool the IT equipment instead of the chillers and water towers used in conventional data centers, which are sealed up tighter than a drum. And by the way, the typical data center has a PUE of around 1.9, which means it takes nearly twice as much power to run it as it does to do the IT work. Facebook designs its machines to have components that are rated to run at higher temperature and humidity, and when the outside air is too warm to cool the gear on its own, the data center can use misters or other wetted media to cool off the air through evaporation before it is pumped down to the equipment racks.

The data center design allows the Prineville center to only need evaporative cooling about 6 percent of the time in a year, and because the Lulea center is near the Arctic Circle, it only needs it about 3 percent of the time.
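To put the PUE figures above in concrete terms, here is a minimal sketch of the arithmetic. The function names and the 1,000 kWh IT load are illustrative assumptions, not figures from the Internet.org report; only the PUE values of 1.9, 1.04, and 1.09 come from the text.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(pue_value: float, it_equipment_kwh: float) -> float:
    """Energy spent on everything except IT gear (cooling, power conversion, lighting)."""
    return (pue_value - 1.0) * it_equipment_kwh


# Hypothetical IT load, chosen only to make the comparison easy to read.
IT_LOAD_KWH = 1000.0

for label, value in [("typical data center", 1.9),
                     ("Facebook, low end", 1.04),
                     ("Facebook, high end", 1.09)]:
    extra = overhead_kwh(value, IT_LOAD_KWH)
    print(f"{label}: PUE {value:.2f}, about {extra:.0f} kWh overhead per {IT_LOAD_KWH:.0f} kWh of IT work")
```

Under these assumptions, a facility with a PUE of 1.9 spends roughly 900 kWh on overhead for every 1,000 kWh of IT work, while a facility in the 1.04 to 1.09 range spends roughly 40 to 90 kWh.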

Things like making servers taller than standard rack units and then cramming three in side-by-side-by-side, and taking all of the metal and plastic off the front and back to improve airflow, also cut down on energy requirements and improve cooling. A fan in an Open Compute design by Facebook is bigger, which means it can rotate more slowly to move the same amount of air, and that in turn means it burns less juice. A fan on a Facebook server burns from 2 to 4 percent of the total power of the system, compared to 10 to 20 percent for a commercial server.
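The reason a bigger, slower fan burns less power can be sketched with the idealized fan affinity laws, which say that for geometrically similar fans airflow scales with speed times diameter cubed, and power scales with speed cubed times diameter to the fifth. This is a textbook approximation and not something taken from Facebook's designs; the function name and the diameter ratios below are hypothetical, and real server fans will deviate from it.

```python
def relative_fan_power(diameter_ratio: float) -> float:
    """Power of a larger fan relative to a smaller one moving the same airflow.

    Idealized fan affinity laws (geometrically similar fans, same air density):
        airflow ~ speed * diameter**3
        power   ~ speed**3 * diameter**5
    """
    if diameter_ratio <= 0:
        raise ValueError("diameter ratio must be positive")
    # To hold airflow constant, the larger fan spins slower by diameter_ratio**3.
    speed_ratio = 1.0 / diameter_ratio**3
    return speed_ratio**3 * diameter_ratio**5


# Hypothetical diameter ratios; the article does not give actual fan sizes.
for ratio in (1.0, 1.5, 2.0):
    print(f"fan {ratio:.1f}x the diameter: {relative_fan_power(ratio):.3f}x the power at equal airflow")
```

In this idealized model the power at equal airflow falls with the fourth power of the diameter ratio, which is at least consistent in direction with the gap between 2 to 4 percent and 10 to 20 percent of system power.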

The lesson is, it all adds up.

And that is why Facebook, through Open Compute, is now involved in building its own switches and software-defined networking stack as well as making improvements to the OpenStack cloud controller and its related Swift storage software, the Linux operating system, the Hadoop Distributed File System, and rack designs that seek to break systems and racks apart and connect them through silicon photonics. The idea of that last bit is to move towards a world where compute, memory, storage, and I/O are broken up instead of all welded to the same motherboard, thereby making each component independently upgradeable. Facebook also has a hand, through its Group Hug chassis, in creating a new standard for microservers based on X86 and ARM systems-on-chip.

While your resident EnterpriseTech motorhead loves iron, Facebook was very keen to make sure everyone knows that software has to be made more efficient as well, so that it takes less hardware to execute it, and that data has to be stored, encoded, and passed around in more efficient ways, too. Everything is bound up together.

To make the point, the Internet.org report shows the evolution of Facebook's Web application, which was originally coded in PHP, an interpreted language that is both popular for Web apps and relatively easy to learn. What works on a single application server running at Harvard University doesn't work when you have millions, then hundreds of millions, and then over a billion users. So in 2010, Facebook wrote a tool called HipHop, which converts PHP to highly optimized C++ and which allowed a server to process 50 percent more work than a server running a regular PHP engine. But HipHop did static compilation, which made tuning it difficult and time consuming. And so the software engineers went back to the drawing board and a year later created the HipHop Virtual Machine, which dynamically translates raw PHP code down to machine code, and this yielded a 500 percent increase in performance over raw PHP.
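The effect of those speedups on server counts is simple arithmetic, sketched below. The request load and per-server throughput are made-up numbers for illustration only, and the sketch reads "50 percent more work" as a 1.5x multiplier and a "500 percent increase" as 6x; if the report meant five times the throughput, the last factor would be 5.0 instead.

```python
import math


def servers_needed(requests_per_sec: float,
                   baseline_rps_per_server: float,
                   speedup: float) -> int:
    """Servers required to carry a load, given a throughput multiplier over plain PHP."""
    return math.ceil(requests_per_sec / (baseline_rps_per_server * speedup))


# Hypothetical workload; neither figure comes from the report.
LOAD_RPS = 600_000
BASELINE_RPS_PER_SERVER = 100

# Multipliers over a regular PHP engine, as interpreted from the article.
engines = {
    "regular PHP engine": 1.0,
    "HipHop (PHP to C++)": 1.5,     # "50 percent more work"
    "HipHop Virtual Machine": 6.0,  # "500 percent increase", read as 6x
}

for name, speedup in engines.items():
    count = servers_needed(LOAD_RPS, BASELINE_RPS_PER_SERVER, speedup)
    print(f"{name}: {count} servers")
```

With these assumed inputs the same load drops from 6,000 servers to 4,000 and then to 1,000, which is why runtime efficiency matters as much as the iron it runs on.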

Only large enterprises like Facebook that make tens of billions of dollars of revenue from their hard and soft wares can afford to do such development. The good news is that Facebook is making this investment, and that the Open Compute designs and the HipHop tools have been open sourced. So has the Cassandra NoSQL data store that Facebook created, along with a number of other tools that have significantly improved the performance of Hadoop and its MapReduce algorithm.

Facebook is not sharing just to get good public relations. It is sharing because it needs its peers and enterprises like yours to help push the power envelope down on data centers, servers, and devices.