Databricks’ $1.3B MosaicML Buyout: A Strategic Bet on Generative AI

Databricks is the latest company to place a large bet – to the tune of $1.3 billion – on generative AI. On the first day of its sold-out Data + AI Summit, the company announced a definitive agreement for the acquisition of MosaicML, a generative AI startup and OpenAI competitor.

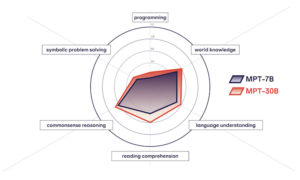

MosaicML is the creator of MPT-7B, an open source, Apache 2.0-licensed foundation model that the company claims has been downloaded 3.3 million times since its release in May. A more advanced model called MPT-30B debuted just last week. The company says MPT-30B is equal in quality to OpenAI’s GPT-3 but has a smaller footprint – the model has only 30 billion parameters versus GPT-3’s 175 billion. In addition to the base models, the company offers several variants focused on specific use cases, including models fine-tuned for chatbots, short-form instruction, and story writing.
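To give a rough sense of what that difference in parameter count means for footprint, here is a back-of-envelope sketch. It assumes weights are stored at 16-bit precision (2 bytes per parameter) and counts weight storage only, not activations or optimizer state. The function name and the precision choice are illustrative assumptions, not figures from MosaicML or OpenAI.

```python
# Back-of-envelope estimate of weight storage only.
# Assumption: 16-bit weights (fp16/bf16), i.e. 2 bytes per parameter.
BYTES_PER_PARAM_16BIT = 2


def weight_footprint_gb(num_params: int,
                        bytes_per_param: int = BYTES_PER_PARAM_16BIT) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Parameter counts as reported in the article.
mpt_30b_gb = weight_footprint_gb(30_000_000_000)
gpt3_gb = weight_footprint_gb(175_000_000_000)
ratio = gpt3_gb / mpt_30b_gb

print(f"MPT-30B: ~{mpt_30b_gb:.0f} GB, GPT-3: ~{gpt3_gb:.0f} GB, "
      f"ratio ~{ratio:.1f}x")
```

Under these assumptions, the weights alone come to roughly 60 GB for MPT-30B and 350 GB for GPT-3, a gap of nearly six times. Real deployments also need memory for activations and caching, so actual requirements will be higher.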

Databricks says the entire MosaicML team, including its research team of ML and neural networks specialists, will join Databricks at the close of this transaction. The MosaicML platform will be scaled and integrated into the Databricks Lakehouse Platform over time, Databricks noted in a release.

Earlier this year, Nvidia CEO Jensen Huang called generative AI the “iPhone moment of AI,” and many organizations are weighing how best to leverage the technology while retaining control of their data. Enterprise use cases for generative AI often require high levels of accuracy due to regulatory requirements, something foundation models alone cannot guarantee. For many businesses, the goal is to build proprietary models using company data while maintaining control, security, and ownership of that data.

MosaicML recently compared its latest foundation models in six core capabilities, finding that MPT-30B significantly improves over MPT-7B in every respect. (Source: MosaicML)

MosaicML Co-founder and CEO Naveen Rao said in a statement that MosaicML was launched to solve the difficult engineering and research problems necessary to make large-scale training more accessible to everyone, an initiative made all the more critical with the recent generative AI wave.

“At MosaicML, we believe in a world where everyone is empowered to build and train their own models, imbued with their own opinions and viewpoints – and joining forces with Databricks will help us make that belief a reality,” said Rao.

MosaicML’s customers include the nonprofit research institute Allen Institute for AI and Generally Intelligent, a developer of general-purpose AI agents. San Francisco-based IDE Replit and healthcare chatbot developer Hippocratic AI are also customers of the platform.

Databricks says MosaicML’s generative AI capabilities combined with the Databricks Lakehouse Platform will provide a robust and flexible platform geared toward large enterprises with a broad range of use cases. Tamping down the high costs of training AI models is another factor: “According to MosaicML, automatic optimization of model training provides 2x-7x faster training compared to standard approaches. Combined with near linear scaling of resources, multi-billion-parameter models can be trained in hours, not days. With Databricks and MosaicML, training and using LLMs will cost thousands of dollars, not millions,” the company said in a statement.

“Every organization should be able to benefit from the AI revolution with more control over how their data is used. Databricks and MosaicML have an incredible opportunity to democratize AI and make the Lakehouse the best place to build generative AI and LLMs,” said Ali Ghodsi, co-founder and CEO of Databricks. “Databricks and MosaicML’s shared vision, rooted in transparency and a history of open source contributions, will deliver value to our customers as they navigate the biggest computing revolution of our time.”

This article originally appeared on sister site Datanami.