Kneron Unveils Its First RISC-V SoC Built for Autonomous, Assisted Driving

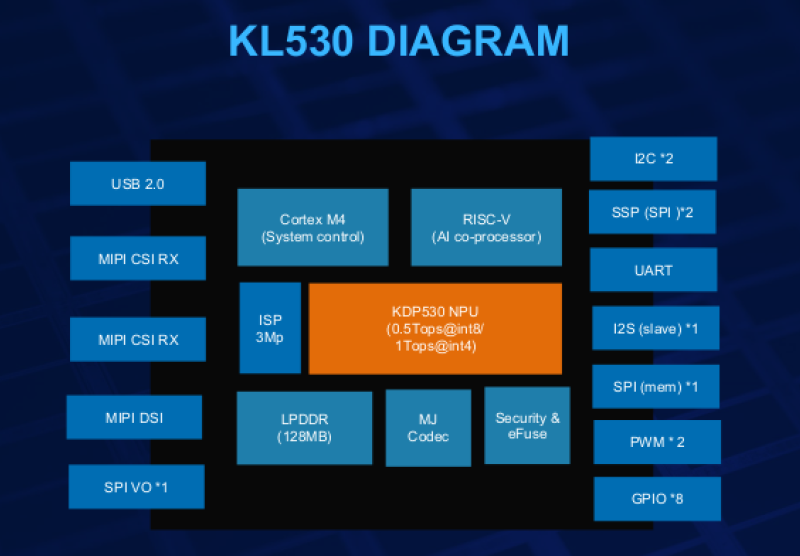

AI chip vendor Kneron has announced its most advanced chip so far, the Kneron KL530. It is the company’s first product to include an image signal processor and to use the RISC-V instruction set, additions intended to ready it for powering L1 and L2 autonomous driving applications in vehicles.

The latest Kneron SoC also supports Vision Transformer AI models, which the company says are up to 30 percent more accurate than traditional convolutional neural network (CNN) models on continuous frames. According to Kneron, Vision Transformer models are necessary to advance beyond L2 autonomous driving because they draw inferences holistically, from the input as a whole, rather than only from the key distinguishing features that CNNs rely on. The company said that can significantly improve safety for autonomous vehicles and their applications through highly accurate hazard detection.
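To make the “holistic versus key features” distinction concrete, the toy Python sketch below contrasts a single 3-tap convolution, whose outputs see only neighboring inputs, with single-head self-attention, the core operation in Vision Transformers, where every output mixes all inputs. It is a generic illustration under simplifying assumptions (no learned query/key/value projections, one head, one layer, 1-D data) and not Kneron’s implementation; real CNNs widen their view by stacking layers, and real Vision Transformers operate on image patches.

```python
import math


def conv1d_3tap(x, w=(0.25, 0.5, 0.25)):
    """3-tap 1-D convolution with zero padding.

    Output i is computed only from x[i-1], x[i] and x[i+1]: a purely local view.
    """
    n = len(x)
    out = []
    for i in range(n):
        acc = 0.0
        for k, wk in enumerate(w):
            j = i + k - 1  # neighbor index: i-1, i, i+1
            if 0 <= j < n:
                acc += wk * x[j]
        out.append(acc)
    return out


def self_attention(tokens):
    """Single-head scaled dot-product self-attention.

    Simplifying assumption: queries, keys and values are the tokens themselves
    (no learned projections). Every output is a softmax-weighted mix of ALL inputs.
    """
    d = len(tokens[0])
    outputs = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        m = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        outputs.append([sum(w * v[c] for w, v in zip(weights, tokens)) for c in range(d)])
    return outputs


# Perturb only the FIRST input and check whether the LAST output changes.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
x_perturbed = [10.0] + x[1:]
conv_last_changed = conv1d_3tap(x)[-1] != conv1d_3tap(x_perturbed)[-1]

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5], [-1.0, 0.5], [0.2, 0.3]]
tokens_perturbed = [[2.0, 0.0]] + tokens[1:]
attn_last_changed = self_attention(tokens)[-1] != self_attention(tokens_perturbed)[-1]

print("conv output[-1] affected by input[0]:", conv_last_changed)
print("attention output[-1] affected by input[0]:", attn_last_changed)
```

Perturbing the first input leaves the convolution’s last output untouched but shifts the attention output, because the softmax gives every input a nonzero weight.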

The KL530, which Kneron unveiled on Wednesday, Nov. 3, is the company’s first chip built on the open source RISC-V instruction set, and it improves on the energy efficiency of its predecessor, the KL520, according to the company. The KL530 delivers up to 2x more TOPS (trillions of operations per second) per watt than the KL520 and up to 10 times the KL520’s performance on key AI models such as MobileNet and ResNet-50. The KL520 was unveiled in 2019.

The KL530 includes an ARM Cortex-M4 CPU running at 400 MHz for system control and delivers 0.5 TOPS of peak throughput in 8-bit mode. Its maximum power consumption is under 500 milliwatts.
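As a rough back-of-the-envelope check, the two figures above imply a floor on the chip’s efficiency. The sketch assumes, which the article does not state explicitly, that the 0.5 TOPS peak is reached within the sub-500 mW power envelope; the variable names are illustrative only.

```python
def tops_per_watt(tops, watts):
    """Efficiency: trillions of operations per second delivered per watt."""
    return tops / watts


peak_tops = 0.5    # peak 8-bit throughput quoted for the KL530
max_power_w = 0.5  # "under 500 milliwatts", used here as an upper bound

# Dividing by an upper bound on power gives a lower bound on efficiency.
min_efficiency = tops_per_watt(peak_tops, max_power_w)

# 1 W / 1 TOPS = (1 J/s) / (1e12 op/s) = 1e-12 J/op = 1 pJ/op,
# so watts per TOPS is numerically picojoules per operation.
max_pj_per_op = max_power_w / peak_tops

print(f"Efficiency at peak: at least {min_efficiency:.1f} TOPS/W")
print(f"Energy per operation: at most {max_pj_per_op:.1f} pJ")
```

Because the power figure is a ceiling rather than a measured draw, both results are bounds, not measured values.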

According to Kneron, the addition of INT4 data support reduced processing time by 66 percent and doubled video frame rates. The company also cut boot times by 33 percent and increased the number of objects that can be detected in the same cycle from three to eight.
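Kneron does not describe its INT4 format in detail. As a generic illustration only, the sketch below quantizes floating-point weights to signed 4-bit integers and packs two of them into each byte, which halves storage and memory traffic relative to INT8; moving less data is one common reason lower-precision inference runs faster. The symmetric max-abs scaling and the low-nibble-first packing are assumptions, not Kneron’s scheme.

```python
def quantize_int4(values, scale):
    """Symmetric quantization to signed 4-bit integers in [-8, 7].

    Python's round() rounds halves to even; results are clamped to the INT4 range.
    """
    return [max(-8, min(7, round(v / scale))) for v in values]


def pack_int4(q):
    """Pack pairs of signed 4-bit values into single bytes (low nibble first)."""
    if len(q) % 2:
        q = q + [0]  # pad to an even count
    packed = bytearray()
    for lo, hi in zip(q[0::2], q[1::2]):
        # & 0x0F keeps the low 4 bits of the two's-complement representation
        packed.append((lo & 0x0F) | ((hi & 0x0F) << 4))
    return bytes(packed)


def unpack_int4(packed, count):
    """Inverse of pack_int4: recover `count` signed 4-bit values."""
    out = []
    for byte in packed:
        for nibble in (byte & 0x0F, byte >> 4):
            out.append(nibble - 16 if nibble >= 8 else nibble)  # sign-extend 4 bits
    return out[:count]


weights = [0.12, -0.40, 0.33, 0.05, -0.27, 0.71, -0.66, 0.0]
scale = max(abs(w) for w in weights) / 7  # map the largest magnitude to +/-7

q = quantize_int4(weights, scale)
packed = pack_int4(q)

print("INT4 codes:", q)
print("bytes as INT8:", len(q), "| bytes as packed INT4:", len(packed))
print("round trip ok:", unpack_int4(packed, len(q)) == q)
```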

The KL530 is Kneron’s first certified automobile-grade chip and a stepping stone for the company to move more deeply into the automobile market, Albert Liu, the company’s founder and CEO, told EnterpriseAI.

Aimed at advanced driver-assistance systems (ADAS) and autonomous driving, the KL530 follows Kneron’s KL520 and KL720 chips. What sets the KL530 apart from the earlier chips is its construction to automobile-grade reliability requirements, along with its new RISC-V instruction set and other new features, said Liu.

Some early customers are sampling the KL530, said Liu, but the chip is not yet in production.

“Design-in and design-win always take around six to eight months to become products on the market,” a timeline that is normal across the industry, said Liu.

The new image signal processor (ISP) is the second major processor on the SoC. It enables up to 1080p image processing in high dynamic range (HDR), as well as AI tuning that optimizes captured images for machine learning applications. The chip also includes 3D recognition, which enables blind spot detection, object and hazard recognition and classification, and distance measurement.

The KL530 will be released in two versions: an aftermarket version that will let L0 vehicles gain L1 or L2 autonomous driving capabilities, and a second version, designed to guarantee auto-grade qualification, that will be built directly into vehicles.

The KL530 will also be used for products within the Artificial Intelligence of Things (AIoT) through Kneron’s Kneo private and secure AI edge networks.

The KL530 chips will be used in several new electric vehicles announced in mid-October by Foxconn, according to Kneron.

Kneron has raised more than $100 million in funding from investors including Horizons Ventures, Alibaba, Qualcomm and Sequoia.

Karl Freund, the founder and principal analyst with Cambrian AI Research, told EnterpriseAI that Kneron still has work to do to prove its new chips.

“Like all startups, it sounds good, but the devil is in the details, which I have yet to see,” said Freund. “Also, INT4 is a twinkle in AI’s eye [but] people are still moving to INT8, and some skepticism about accuracy slows sales as all customers will need to verify accuracy on their models.”
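Freund’s accuracy concern can be illustrated with a small experiment. The sketch below quantizes the same synthetic weights (random Gaussian values, an assumed stand-in for a real layer) to 8 and 4 bits with a generic symmetric scheme and compares the reconstruction error. This is only a proxy: verifying accuracy in practice means running the quantized model on the customer’s own validation data, the step Freund expects every customer to take.

```python
import random


def quantize_dequantize(values, bits):
    """Symmetric per-tensor quantization to signed `bits`-bit integers, then back to floats."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:
        return list(values)  # all-zero input: nothing to quantize
    return [max(-qmax - 1, min(qmax, round(v / scale))) * scale for v in values]


def mean_abs_error(a, b):
    """Average absolute difference between two equal-length sequences."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


random.seed(0)
# Synthetic stand-in for one layer's weights (an assumption, not real model data).
weights = [random.gauss(0.0, 0.1) for _ in range(10_000)]

err8 = mean_abs_error(weights, quantize_dequantize(weights, 8))
err4 = mean_abs_error(weights, quantize_dequantize(weights, 4))

print(f"INT8 mean abs error: {err8:.6f}")
print(f"INT4 mean abs error: {err4:.6f}")
print(f"INT4 / INT8 error ratio: {err4 / err8:.1f}")
```

For the same value range, the 4-bit step is about 18 times coarser than the 8-bit step (±7 levels versus ±127), so the rounding error grows accordingly; whether a particular model tolerates that loss is what each customer would have to check.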

In addition, there is already competition in the marketplace for the latest Kneron chips, he said. “I would point out that Nvidia already combines CPU, DSP, Deep Learning Accelerators (DLA), and GPU on the Orin Drive platform for Level 2 and 3.”

Another analyst, Dan Olds, the chief research officer for Intersect360 Research, said the new chip looks to be much more powerful than the KL520.

“At up to 10x greater performance in AI tasks versus their previous generation and using roughly half the power, that is some improvement,” said Olds. “At the same time, they have nearly tripled – from three to eight – the number of items that can be identified in the same cycle. This is a huge advance for autonomous vehicle AI, and it is also great news for the RISC-V folks. This open source instruction set has generated a lot of buzz in processor circles and it is great to see it being used in new products like the KL530.”

Based in San Diego and founded in 2015, Kneron specializes in AI SoC chipsets, on-device AI algorithms and neural processing units (NPUs). Its AI SoCs are used in applications such as supporting high-definition video and natural language processing and improving facial and audio recognition. They can also help accelerate the neural network models used in visual and audio recognition applications. The startup’s AI algorithms target machine learning applications that run with limited memory, including facial and gesture recognition.

Kneron’s NPU technology targets edge applications with complex computational requirements while keeping power consumption low. The goal is to balance power consumption against memory and storage requirements without sacrificing performance at the network edge.

The company also promotes its AI approach as “reconfigurable,” allowing AI-based edge devices to switch, for example, from audio to visual recognition tasks in real time. That capability rests on compatibility with widely used AI frameworks such as TensorFlow and PyTorch, as well as with convolutional neural network models like ResNet.