Google Leverages Container Expertise On Its Cloud

Google may not be the first of the major public cloud operators to support Docker containers, but it aims to provide the best support for the popular container format. Docker, a lightweight virtualization technology that leverages many innovations that are inspired by Google itself, has taken Linux systems by storm and is coming to Windows in the future. It will not be long before Docker becomes the standard for packaging up modern applications, and that means the container is the new battlefield on the cloud.

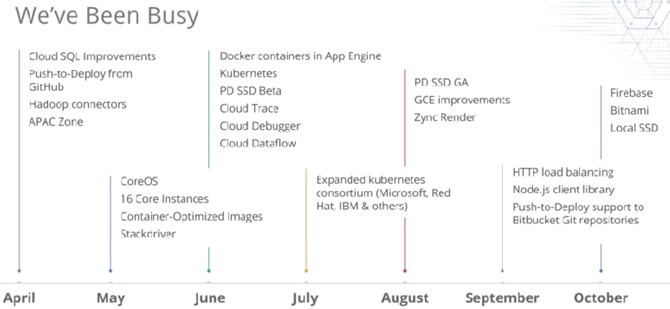

This was certainly the message from the search engine giant as it hosted its second Google Cloud Platform conference in San Francisco. Like rivals Amazon Web Services and Microsoft Azure, Google treats its infrastructure and platform cloud services as a single application platform and believes that it warrants the same kind of focused attention that was previously given to physical systems or significant components such as databases or Java. In fact, one of the key themes at the latest live event is that the boundaries between infrastructure and platform clouds are blurring.

Brian Stevens, the long-time chief technology officer at commercial Linux distributor Red Hat, took the job as vice president of product management for cloud platforms at Google eight weeks ago, and this was his public debut at a major Google event.

Contain Your Enthusiasm

Stevens reminded everyone that container technology was nothing new to Google, and that in fact Google contributed its control groups, or cgroups, code to the upstream Linux kernel back in 2008. Google had some rudimentary application containment in its own Linux infrastructure since 2004, and with cgroups it could allocate resources for single processes running atop Linux and provide quality of service, checkpointing, and chargeback mechanisms for those processes as they ran in containers. As EnterpriseTech has previously detailed, Google runs all of its software infrastructure inside of containers to provide resource isolation, and on Google Compute Engine, its infrastructure cloud, the KVM hypervisor runs on top of that and allows for Linux and Windows instances to run inside of this container. The idea is to control resources through cgroups and provide that extra layer of security and control with KVM on the shared cloud to keep workloads isolated. Google doesn't say how many containers it is running in its global infrastructure, but it does say that it fires up 2 billion containers a day, a number that Stevens reiterated.
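To make the cgroups idea concrete, here is a minimal Python sketch of how a resource cap on a single process is expressed. It is not Google's tooling. It assumes the cgroup v2 file layout found on current Linux kernels (`memory.max`, `cpu.max`, `cgroup.procs` under `/sys/fs/cgroup`), which postdates the code Google contributed in 2008, and the group name `demo` and process ID are made up for illustration. The function only computes what would be written and does not touch the system.

```python
from pathlib import PurePosixPath

# Conventional cgroup v2 mount point on current Linux systems (an assumption here).
CGROUP_ROOT = PurePosixPath("/sys/fs/cgroup")


def cgroup_limit_writes(group, pid, memory_bytes, cpu_fraction, period_us=100_000):
    """Return {control file: value} that would cap one process.

    cpu_fraction is the share of one CPU the group may use (0.5 = half a core).
    Nothing is written to disk; the caller decides whether to apply the values.
    """
    base = CGROUP_ROOT / group
    quota_us = int(cpu_fraction * period_us)
    return {
        str(base / "memory.max"): str(memory_bytes),        # hard memory ceiling
        str(base / "cpu.max"): f"{quota_us} {period_us}",   # CPU quota per period
        str(base / "cgroup.procs"): str(pid),               # move the process into the group
    }


if __name__ == "__main__":
    # Hypothetical process 1234, capped at 512 MB of memory and half a core.
    for path, value in cgroup_limit_writes("demo", 1234, 512 * 1024**2, 0.5).items():
        print(f"{path} <- {value}")
```

Applying these values on a real machine requires root privileges and an existing cgroup directory, which is why the sketch stops short of writing them.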

Like the advent of X86 systems and Linux in the late 1990s, server virtualization in the early 2000s, and cloud in the late 2000s, containers represent another paradigm shift for datacenters, and one that will separate the new generations of clouds and their applications from the current style.

"All too often, great technology projects are fun, but the best part about a technology project is when you can put it in the hands of users and make some kind of profound impact on what they are able to do," said Stevens. "Business to business technologies can focus on technology for technology's sake, but the thing that consumer companies have really figured out is how to focus on the user. And at Google, I think we have the best of both worlds: great technology and a focus on the user."

With the first generation of cloud platforms, Stevens correctly observed that companies are still doing the same thing as they were with physical platforms, and that is starting with a server and loading it up with a software stack. Instead of physical machines, we have virtual ones, but the processes to set infrastructure up are essentially the same even if the resulting virtual infrastructure does provide benefits in terms of higher server and network utilization, greater compute density, lower costs, more flexibility, and some disaster recovery.

"I think that the generation one of the public cloud is fantastic, but it has largely been about moving a virtual machine into somebody else's datacenter," Stevens continued. "Today's cloud is largely the externalization of the existing IT department and on premise infrastructure and you are still dealing largely with the same set of primitives and asking the same set of questions: what size server do you need, how much memory should I have, how fast should it be, how much disk space. That said, the first generation of cloud does offer some benefits – you can provision a system with a set of APIs instead of a purchase order." Even with the benefits of elasticity and multitenancy, which drive down costs, the model is still the same. "We want to be able to change what our users are able to do, not just where they are doing it."

Containers, as Google has learned from first-hand experience, are the key technology that will move the cloud ahead, and it is something that Google arguably has more experience with than any other organization in the world – and certainly at hyperscale. That container could be LXC Linux containers, the combination of cgroups and namespaces that Google uses internally, or Docker, which Stevens said was "quickly becoming the de facto representation of what a container file looks like." That does not necessarily mean that Google will use Docker containers internally, but it does mean that it will be enthusiastic about Docker on the Compute Engine infrastructure cloud and, as it turns out, on the App Engine platform cloud, too.

Google took its smarts from its Borg and Omega internal management systems and created the Kubernetes project back in June, and in July got some big backers to endorse it as a method of managing collections of Docker containers, including Microsoft, IBM, Red Hat, Docker, Mesosphere, SaltStack, CoreOS, and VMware. Why would Google assemble such a presumably contentious team of IT vendors to get behind Kubernetes? Because Amazon Web Services has supported Docker containers on its EC2 infrastructure atop its homegrown Xen hypervisor and under the direction of its Elastic Beanstalk management system since April. Google would rather have the industry united against AWS and supporting an open standard rather than have to adopt AWS methods in its Cloud Platform. Ditto for Microsoft Azure, and while we are at it, Rackspace (which supports Docker containers in a fashion) and IBM SoftLayer (which does as well).

Kubernetes, which in Greek means pilot, is used to manage Docker containers on Compute Engine and the Managed VMs – a kind of hybrid container – in App Engine. The containers are podded up and managed as collections, much as we presume Google does internally to mix workloads across its 1 million+ server infrastructure. And as Greg DeMichillie, director of product management for Cloud Platform, put it: The datacenter is not a collection of computers, but rather the datacenter is the computer, and clusters running containers are the finest grain anyone should have to deal with. Kubernetes orchestrates containers and allows users to define a logical cluster and then it takes care of scheduling applications on particular machines based on a quality of service. It is, in essence, a job scheduler for containers that is aware of the dependencies between containers.
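The scheduling idea described above can be illustrated with a toy placement routine in Python. This is not the Kubernetes scheduler or its API. It is a first-fit sketch under simple assumptions: each pod is a hypothetical (name, CPU cores, memory in GB) tuple that is placed as a unit, and each machine advertises free CPU and memory.

```python
from dataclasses import dataclass, field


@dataclass
class Machine:
    name: str
    free_cpu: float
    free_mem_gb: float
    pods: list = field(default_factory=list)


def schedule(pods, machines):
    """Place each pod (name, cpu, mem_gb) on the first machine with room.

    Returns {pod name: machine name}, with None for pods that fit nowhere.
    """
    placement = {}
    for name, cpu, mem in pods:
        # First-fit: take the first machine that can hold the whole pod.
        target = next(
            (m for m in machines if m.free_cpu >= cpu and m.free_mem_gb >= mem),
            None,
        )
        if target is not None:
            target.free_cpu -= cpu
            target.free_mem_gb -= mem
            target.pods.append(name)
        placement[name] = target.name if target else None
    return placement


if __name__ == "__main__":
    cluster = [Machine("m1", 2, 4), Machine("m2", 4, 8)]
    work = [("web", 1.5, 2), ("db", 2, 6), ("cache", 1, 1), ("batch", 8, 1)]
    print(schedule(work, cluster))
```

The user only describes the cluster and the resource needs of each pod; which physical or virtual machine ends up hosting it is the scheduler's problem. A production scheduler would also weigh quality of service and dependencies between containers, which this sketch leaves out.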

Google Container Engine, which is abbreviated GKE just to keep it distinct from the infrastructure cloud at Google, is the commercial version of Google Compute Engine with Docker containers and the Kubernetes management service layered on top of it. Container Engine is in alpha testing now, and the service rides on Compute Engine so the underlying KVM virtual machines (which again are running atop cgroup containers) are all automagically provisioned and managed. Google has not revealed pricing, but you can sign up for a trial account to play with it. DeMichillie did not say when Container Engine would be generally available.

The Managed VMs service for App Engine is moving from alpha to beta and now has auto-scaling support as well as support for running Docker containers alongside App Engine runtimes. Managed VMs is a hybrid approach that runs App Engine applications atop Compute Engine VMs that users are allowed to configure and that Google manages on their behalf. The Managed VMs offer more CPU and memory capacity than standard App Engine slices, says Google, and can run Java, Python, and Go runtime environments as well as ones that customers can create themselves to, for instance, run C or C++ applications or to add third-party frameworks or runtimes.

DeMichillie said that Compute Engine was getting a bunch of new features as well as price cuts. Local SSD support is now going to be an option for Compute Engine, designed specifically for NoSQL data stores, databases, and other workloads that want the high I/O operations per second of flash devices but which cannot make do with the 10,000 to 20,000 IOPS delivered by the flash-based network SSD units that Google offers on its cloud. The Local SSD option will be available on any Compute Engine machine type, so you don't have to pick a particular kind of machine. Capacity will range from one to four 375 GB flash partitions, and with four partitions you can get 680,000 random read IOPS and 360,000 random write IOPS. This is well above what other public cloud providers are doing, says DeMichillie, and he called the cost of 21.8 cents per GB per month a "very attractive" price.
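For a sense of what that price means in practice, the arithmetic can be sketched in a few lines of Python. The partition size and per-GB price are the figures quoted above; the function name is ours, and we assume that billing is simply capacity times the monthly rate, which Google did not spell out.

```python
PARTITION_GB = 375          # size of one Local SSD partition, per Google
PRICE_PER_GB_MONTH = 0.218  # dollars per GB per month, per Google


def local_ssd_monthly_cost(partitions):
    """Return (capacity in GB, monthly cost in dollars) for 1 to 4 partitions."""
    if not 1 <= partitions <= 4:
        raise ValueError("Local SSD is offered as one to four partitions")
    capacity_gb = partitions * PARTITION_GB
    # Assumed billing model: capacity multiplied by the flat monthly rate.
    return capacity_gb, round(capacity_gb * PRICE_PER_GB_MONTH, 2)


if __name__ == "__main__":
    for n in range(1, 5):
        gb, dollars = local_ssd_monthly_cost(n)
        print(f"{n} partition(s): {gb} GB, ${dollars:.2f} per month")
```

Under that assumption, a fully loaded configuration of four partitions comes to 1,500 GB and $327 per month.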

Compute Engine now also adds support for the Ubuntu Server variant of Linux from Canonical, the last of the major Linuxes not supported on Google's cloud, joining Red Hat Enterprise Linux, openSUSE (but not SUSE Linux Enterprise Server), CentOS, CoreOS, Debian, and SE Linux. FreeBSD and Windows Server are also supported as operating systems atop Compute Engine's KVM slices. Ubuntu 14.10, 14.04 LTS, and 12.04 LTS are all supported.

Compute Engine's autoscaling feature is also moving from alpha into beta testing, and as the name suggests will allow users to set performance parameters or other metrics to automatically scale the number of VMs running an application up and down as the workload fluctuates throughout the day.
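Google did not detail the autoscaler's policy, but a common approach is to hold a target utilization. The Python sketch below shows that generic rule, not Compute Engine's actual algorithm. The function name, the use of CPU utilization as the metric, and the minimum and maximum bounds are all assumptions for illustration.

```python
def desired_vm_count(current_vms, observed_pct, target_pct, min_vms=1, max_vms=10):
    """Return how many VMs keep average utilization near the target.

    observed_pct and target_pct are whole-number percentages, for example
    90 and 60. Integer math avoids floating-point rounding surprises.
    """
    # Ceiling division: total load (VMs x utilization) spread over the target.
    needed = -(-current_vms * observed_pct // target_pct)
    # Clamp to the bounds the user configured.
    return max(min_vms, min(max_vms, needed))


if __name__ == "__main__":
    # Four VMs running at 90 percent with a 60 percent target: scale up.
    print(desired_vm_count(4, 90, 60))
    # The same four VMs at 30 percent: scale down.
    print(desired_vm_count(4, 30, 60))
```

The bounds matter as much as the rule itself: the minimum keeps an application available when traffic drops to nothing, and the maximum caps the bill when load spikes.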

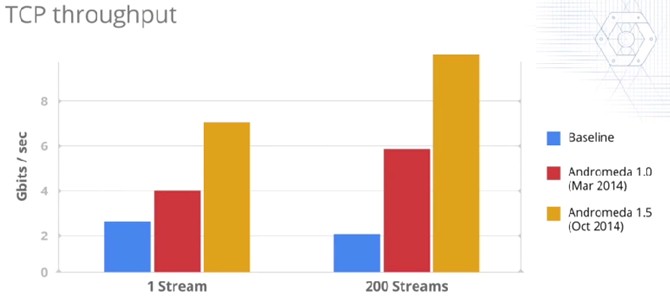

On the networking front, Google says that it has boosted the performance of its internal "Andromeda" software-defined network, boosting its TCP throughput significantly, as you can see below:

DeMichillie said that Google was also cutting pricing for pumping data out of its datacenters into its points of presence in the Asia/Pacific region by 47 percent, making it cheaper to serve customers in that important part of the globe. (Google already cut prices on Compute Engine VMs by 10 percent a month ago, and today cut prices on BigQuery storage by 23 percent, on persistent disk snapshots by 79 percent, on persistent disk SSDs by 48 percent, and on large Cloud SQL instances by 25 percent.)

Other networking enhancements include three new ways for customers to link into the Cloud Platform from the outside, collectively known as Google Cloud Interconnect. The VPN Connectivity service uses IPsec authentication and encryption to create a secure tunnel over the Internet between the corporate datacenter's network and Google's network. It will be in alpha testing in a few weeks and be generally available in the first quarter of 2015.

For customers who want a dedicated, permanent connection, Google will offer Carrier Connect through a set of partners including Equinix, IX Reach, Level 3, TATA Communications, Telx, Verizon, and Zayo. For the large customers who operate their own network (as Google does), Google will offer Direct Peering, coming in through over 70 points of presence in 33 countries without having to go through any intermediaries. DeMichillie said that AWS had only 13 such points and Azure only 11, for comparison.