How “MLonCode” Could Disrupt Software Development

The recent acquisitions of Tableau by Salesforce for $15.7 billion and Looker by Google for $2.6 billion reinforce the notion that data is the new printing press, coal and oil enabling the next wave of data science and AI innovations.

Just as businesses audit their financial statements, processes and assembly lines, it is equally critical to do the same with software portfolio, development processes and engineering talents. Large companies have to manage several billions of lines of code, along with many applications, engineers and IT guidelines that are constantly changing. The volume and complexity of these data sources represent a fascinating challenge for data scientists and machine learning engineers.

By applying ML to source code (MLonCode) and other engineering artifacts (tickets, CI logs, binaries, Docker images, etc.), organizations have an opportunity to improve software quality, security and development speed. Many approaches to MLonCode problems run aground on the so-called Naturalness Hypothesis (Hindle et.al.):

“Programming languages, in theory, are complex, flexible and powerful, but the programs that real people actually write are mostly simple and rather repetitive, and thus they have usefully predictable statistical properties that can be captured in statistical language models and leveraged for software engineering tasks.”

This statement justifies the usefulness of Big Code: the more source code that’s analyzed, the stronger the statistical properties emphasized and the better the achieved metrics of a trained ML model. The underlying relations are the same as in, for example, state-of-the-art natural language processing (NLP) models: XLNet, ULMFiT, etc. Likewise, universal MLonCode models can be trained and leveraged in downstream tasks.

Let’s take a closer at some enterprise use cases for MLonCode.

Expertise Assessment

Expertise is defined by the level of knowledge and experience in specific technology areas, such as security, quality and others. By using modern ML models and language analysis techniques, such as topic modeling, dependency analysis, code contribution and ownership, companies can quickly identify individual developers' expertise within large engineering organizations. As a result, software development projects, code reviews or technical decisions can quickly be assigned to the right people based on data rather than subjective feelings.

Collaboration Assessment

As major consumers and producers of open source software, many large companies are embracing a new set of engineering practices and culture based on collaboration. Using the same methodology to develop proprietary products internally is a popular engineering discipline called Inner Source. Although important, measuring effective collaborations at scale is not an easy task.

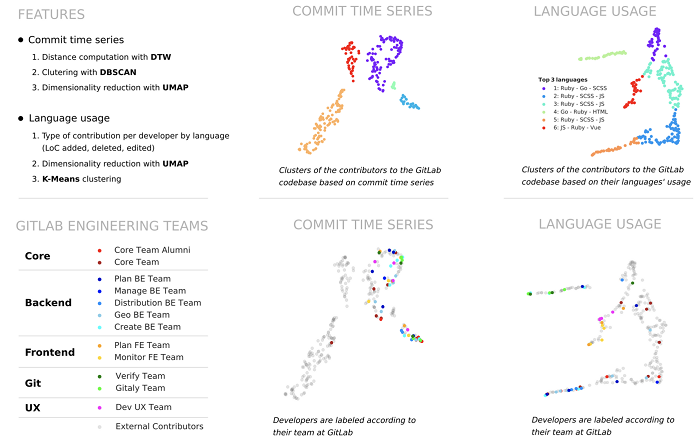

To overcome this challenge, ML researchers have looked at the topological structure of commit activity, usage of programming languages and topics of source code identifiers, along with modern clustering techniques for visualization. All the details are included in this research paper based on the gitlab-org open source projects (figure below).

Similar & Duplicate Code Analysis

Similar & Duplicate Code Analysis

Code clones can be measured at the file, function and snippet levels. According to the conventional classification, they spread over four types:

- Type-1 (textual similarity): Identical code fragments, except for differences in white-space, layout, and comments;

- Type-2 (lexical, or token-based, similarity): Identical code fragments, except for differences in identifier names and literal values;

- Type-3 (syntactic similarity): Syntactically similar code fragments that differ at the statement level. The fragments have statements added, modified and/or removed with respect to each other.

- Type-4 (semantic similarity): Code fragments that are semantically similar in terms of what they do, but possibly different in how they do it. This type of clones may have little or no lexical or syntactic similarity between them.

Each subsequent clone type is harder to detect than each previous one. It is exceptionally hard to detect type-4 clones because, while sharing the same intent, such code units are written independently of each other and require deep, human-like comprehension. Luckily, the Naturalness Hypothesis described previously continues to hold, and the program semantics are often captured by the natural language elements in code (how developers choose to name entities, for example), and its structure.

As more large businesses become tech companies buried in billions of lines of code -- and as thousands of applications, engineers and IT guidelines constantly change -- organizations have a unique opportunity to improve software quality, security and development speed. MLonCode gives these organizations a unique opportunity to streamline code and processes, better understand the organization’s strengths and weaknesses, and substantially reduce inefficiencies.

Eiso Kant, co-founder and CEO of source{d}, is a self-taught developer with a passion for neural networks and functional programming.