Tesla Reveals More Design Details of Its Modular ‘Dojo’ Supercomputer & D1 Chip

Two months ago, Tesla revealed a massive GPU cluster that it said was “roughly the number five supercomputer in the world,” and which was just a precursor to Tesla’s real supercomputing moonshot: the long-rumored, little-detailed Dojo system. “We’ve been scaling our neural network training compute dramatically over the last few years,” said Milan Kovac, Tesla’s director of autopilot engineering. “Today, we’re barely shy of ten thousand GPUs. … But that’s not enough.”

Enter Dojo, the design for which was revealed during the event, along with the design for its constituent D1 chip.

Dreaming of Dojo

“There’s an insatiable demand for speed, as well as capacity, for neural network training,” said Ganesh Venkataramanan, Tesla’s senior director for autopilot hardware. To that end, a few years ago, Tesla CEO and co-founder Elon Musk asked Venkataramanan’s team to build the company a “super-fast” training computer, aiming to achieve the best AI training performance, enable larger and more complex neural net models and achieve both power efficiency and cost efficiency.

“For Dojo, we envisioned a large compute plane filled with very robust compute elements, packed with a large pool of memory, and interconnected with very high-bandwidth and low-latency fabric,” Venkataramanan said. “We wanted to attack this all the way – top to bottom of the stack – and remove all the bottlenecks at any of these levels.”

The D1 chip & training tile

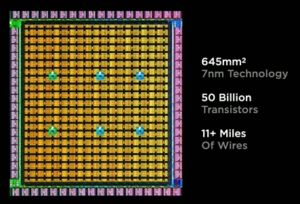

The aforementioned precursor cluster relied predominantly on Nvidia’s A100 GPUs for acceleration. Not so for Dojo, which will consist almost entirely of Tesla’s decidedly unique D1 chip. D1, Venkataramanan said, supported FP32, BFP16 (aka bfloat16 or brain floating point) and a new format called CFP8 (“configurable FP8”). Optimized for machine learning workloads, D1 (which consists of 354 “training nodes”) is manufactured using a 7nm process and, at just 645 square millimeters, contains 50 billion transistors. “There is no dark silicon, there is no legacy support,” Venkataramanan said of the chip, designed completely by Tesla’s internal engineers. “This is a pure machine learning machine. … This chip [has] GPU-level compute with CPU-level flexibility.”

Tesla put a strong emphasis on modularity across the hardware. D1 is equipped with 4TBps off-chip bandwidth on each of its lateral edges – all four equipped with connectors – allowing it to connect to and scale with other D1 chips without sacrificing speed.

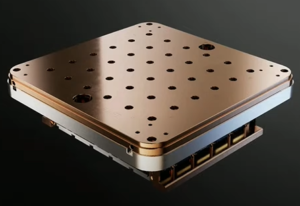

The next step up is Tesla’s “training tile,” a wedge less than a cubic foot in size that contains 25 of the D1 chips. The training tile operates with similar modularity to the chip itself: power and cooling are conducted through the top of the tile, allowing its four lateral edges to be outfitted with high-output connectors designed for maximum bandwidth (a total of 36TB/s of off-tile bandwidth).Tesla put a strong emphasis on modularity across the hardware. D1 is equipped with 4TBps off-chip bandwidth on each of its lateral edges – all four equipped with connectors – allowing it to connect to and scale with other D1 chips without sacrificing speed.

Dojo

“By now, you must have realized our modularity story is pretty strong,” Venkataramanan said. “We just put together some tiles. We just tile together tiles!” And, indeed, it’s modularity all the way down: 354 training nodes make a D1 chip; 25 D1s in a training tile; six training tiles (2×3) in a “training matrix,” which constitutes a tray; two trays in a cabinet; and ten cabinets in what Venkataramanan calls the ExaPOD, a massive machine learning machine with uniform bandwidth. (How many ExaPODs Dojo will contain is unclear.)

With each D1 chip providing 22.6 teraflops of FP32 performance, each training tile will provide 565 teraflops and each cabinet (containing 12 tiles) will provide 6.78 petaflops – meaning that one ExaPOD alone will deliver a maximum theoretical performance of 67.8 FP32 petaflops. (Tesla preferred to offer performance in BFP16 and CFP8, and by those metrics, an ExaPOD will deliver 1.1 exaflops – hence the name.) All of this performance, Venkataramanan said, will be made accessible through a high-performing compiler, which operates automatically without human involvement and requires minimal effort to initiate on the researchers’ part.

“This is what [Dojo] will be,” Venkataramanan said. “It will be the fastest AI training computer.”

Dojo, however, hasn’t arrived just yet – in fact, Venkataramanan said that the first functional training tile had only arrived the previous week. Next up, he said, they were building the cabinets (“pretty soon”).

“And,” he continued, “we’re not done.” Tesla, Venkataramanan said, had a “whole next-generation plan already,” with their eyes set on the next tenfold increase in performance.

This story was originally posted on sister website HPCwire.