AI Edge Chip Vendor Blaize Gets $71M in Series D Funding to Expand Its Edge, Automotive Products

Seven months ago, AI edge chip vendor Blaize launched a new no-code AI software application to make it easier for customers to build applications for its AI chip-equipped PCIe cards, modules and devices.

Now, the company has announced the receipt of an additional $71 million in Series D funding from investors including Franklin Templeton, Temasek and DENSO to continue its product development, engineering and sales efforts.

The new funding brings the company’s total investment pot to $155 million, Dinakar Munagala, the CEO and co-founder of Blaize, told EnterpriseAI.

“The world of AI is moving from AI 1.0 to AI 2.0,” said Munagala, describing a shift from retrofitting AI onto existing processes to offering new platforms and products that make the work easier and better.

“It is just like the whole leap from MS-DOS to Microsoft Windows – that kind of leap is what we are making happen here,” he said. “The [new] money that we raised is actually funding this development and our roadmap of products. We do have products out in the market right now, as of Q4 2020, and this [new money] is to accelerate our growth.”

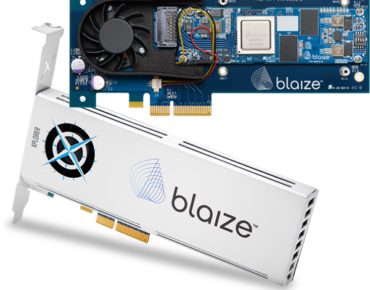

Presently, Blaize offers its initial AI embedded and accelerator products, including the Blaize Pathfinder and Xplorer hardware platforms and the no-code Blaize AI Software Suite, which debuted last December.

The Pathfinder and Xplorer devices are built on the Blaize Graph Streaming Processor (GSP) architecture, which includes 16 GSP cores and 16TOPS of AI inference performance. The devices use seven watts of power and deliver up to 60x better system-level efficiency compared to GPUs or CPUs for edge AI applications, according to Blaize. The Blaize GSP is 100 percent programmable and features advanced capabilities including multi-threading and streaming.

The new funding will support these products and the development of future products for automotive, smart retail, smart city and industrial markets.

AI is still too hard to use for enterprises and their employees, said Munagala.

“There are no easy to program tools – it's really difficult,” he said. “You set up a Linux server farm … and then build an AI solution,” a process that demands considerable skill from AI data scientists or machine learning engineers who can make sense of it all and create something of value, he added.

“What our company's vision and mission is, where we are headed with this whole AI 2.0 approach, is that people really need efficient hardware, and very synergistic software and tools,” said Munagala. That’s where the Blaize AI Software Suite comes in: it allows non-technical workers within enterprises to build the applications they need to run on Blaize’s hardware products.

“He or she may not be a data scientist or a machine learning engineer, but as long as they know how to use a computer, they are able to build and deploy an AI solution,” he said.

Blaize’s Target Markets

The company, which has about 320 employees, is headquartered in California and has its automotive AI team in the U.K. and its chip engineering and software teams in India.

The company, which was founded in 2010, has been working with automotive OEMs and tier-one parts suppliers for several years to integrate its chips and cards for automotive uses, said Munagala. Those partners include automotive parts company DENSO; others have not yet been publicly named, he said.

The Blaize chips, modules and cards are built on the company’s custom chip architecture to deliver energy-efficient, cost-efficient, low-latency devices, said Richard Terrill, vice president of strategic business development at Blaize.

“We span the full spectrum and cover the edge to enterprise, what we call the computing continuum,” said Terrill. “We enable our customers to compute where they want to, where it is being defined by their business conditions, privacy, latency, cost, reliability and physical security.”

The current Blaize chips are 14nm process Samsung-built chips, said Terrill. “We have worked with both TSMC and Samsung. TSMC did our initial test chip and Samsung is making our production chips.”

Analysts on Blaize

Alexander Harrowell, senior analyst for advanced computing and AI with Omdia Research, told EnterpriseAI that the company’s latest funding news is evidence of increasing interest in graph-based, dataflow, and Coarse-Grained Reconfigurable Architecture (CGRA) processors as an alternative to GPUs for AI workloads.

“The Series D [round] is a further demonstration of this interest and the return of major VC funds to investing in hardware startups, something they avoided for many years,” said Harrowell. “This is being driven by the rocketing size of the models, and the consequent growth in the price, power and area demands of state-of-the-art GPUs – problems that bite especially fiercely in edge, mobile, embedded and IoT applications.”

According to Omdia forecasts in its latest “AI Chipsets for the Edge” report and database, the share of AI hardware revenue accounted for by GPUs at the edge will peak very soon, as commoditization begins to set in and new chip architectures begin to compete, he said.

“Blaize’s technology falls into this broad category – the processor consists of a matrix of logic units and SRAM, although unlike most of the CGRAs, each unit has the full instruction set, and a hardware scheduler allocates elements of the neural network to the units as data comes in,” said Harrowell. “Another important distinction between Blaize and other systems is that they have optimized it for streaming rather than batch-wise inference, something that is valuable for control systems, automotive, and similar use cases although it has costs in classic big data machine learning contexts.”

Another analyst, Karl Freund, the founder and principal analyst for Cambrian AI Research, said he was pleasantly surprised by Blaize’s latest funding news.

“Blaize had done an excellent job of early customer nurturing, but sort of went dark for the last year,” he said. “Rumors abounded that their tech just did not cut it, but this raise breathes new life into what was a vibrant story. AI at the edge will become a crowded space, where customers will have many alternatives and can demand lower prices. Deep customer engagement such as what we had seen might help companies such as Blaize retain clients at higher margins.”

Blaize Product Details

The Blaize Pathfinder P1600 embedded System on Module (SOM) device brings programmability and efficiency benefits from the GSP to embedded edge AI applications deployed at the sensor edge or at the network edge, according to the company. The P1600 does not need a host processor; users can plug it in and use it immediately.

Two different PCIe-based Blaize Xplorer Accelerator devices are built to accelerate AI applications at the edge of the enterprise via an unused PCIe slot in a host server or appliance. The X1600E is a small form factor accelerator platform for small and power-constrained environments such as convenience stores or industrial sites. It can be added to accelerate AI apps in industrial PCs or as a rack of cards in a small 1U server.

The more powerful X1600P is a standard PCIe-based accelerator in a half-height, half-width form factor. The X1600P can replace a power-hungry desktop GPU in edge servers and provide anywhere from 16 to 64TOPS of AI inference performance with low power requirements.

The Blaize AI Software Suite includes two components – the Picasso software development kit (SDK) and AI Studio, a 100 percent code-free visual interface. In addition to being usable by non-developers, the suite also offers tools for traditional software developers. The suite is built on open standards. Both components also use Blaize Netdeploy, which uses edge-aware algorithms to provide improved accuracy and performance for edge deployments.

Customer samples of both product lines are available now with full production expected starting in Q4 2020, according to the company. The Blaize Xplorer X1600E is available for $299 in volume quantity, the Pathfinder P1600 SOM is available in industrial grade for $399 in volume quantity, and the Blaize Xplorer X1600P is available for $999 in volume quantity.