Nvidia Probes Accelerators, Photons, GPU Scaling

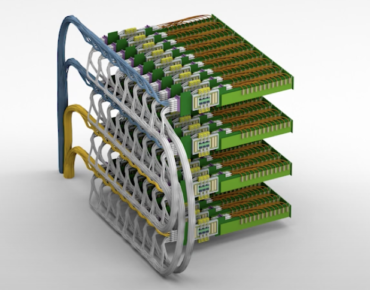

Source: Nvidia

Nvidia spotlighted an AI inference accelerator, emerging optical interconnects and a new programming framework designed to scale GPU performance during this week’s GTC China virtual event.

In a keynote, Bill Dally, Nvidia’s chief scientist, said the MAGNet inference accelerator achieved 100 tera-operations per watt in a simulation. Nvidia is also working with university researchers on GPU optical interconnects as NVLink and current interconnect schemes hit their peak. Meanwhile, a programming framework dubbed Legate allows code written for a single GPU to scale up to HPC capacity.

Dally noted the trend toward dedicated AI accelerators, which rests on the assumption that such chips are more efficient than general-purpose GPUs. Nvidia counters that whatever efficiency accelerators gain by trimming overhead relative to payload computation is outweighed by GPU programmability, which allows platform developers to keep pace with rapid advances in neural networks.

To exploit new models and training methods, “you need a machine which is very programmable,” argued Dally. The GPU maker points to a more than 300-fold gain in single-chip inference performance since 2012, the eight-year span from its Kepler platform to its latest Ampere A100 GPU. That works out to inference performance roughly doubling every year.
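The doubling figure follows from compound growth: if a 300-fold gain accrued over eight years, the average yearly factor g satisfies g^8 = 300. A minimal check in Python, using only the figures quoted above:

```python
# Figures quoted in the article: a >300x single-chip inference gain over eight years (2012 to 2020).
total_gain = 300
years = 8

# Compound annual growth: annual_factor ** years == total_gain.
annual_factor = total_gain ** (1 / years)
print(f"average gain per year: {annual_factor:.2f}x")  # about 2.04x, i.e. roughly doubling
```

Because 2^8 = 256, any total gain a little above 256 over eight years corresponds to slightly better than yearly doubling.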

Nvidia attributes the improvements to advances in the tensor cores used for AI-oriented matrix calculations, along with better circuit design and chip architectures. Although the A100 is among the largest 7-nanometer devices, with 54 billion transistors, “very little of [the inference gain] is due to process technology,” Dally said. “Most of this is from better architecture.”

“Hardware by itself doesn’t solve the world’s computing problems,” Dally continued. “It takes software to focus this tremendous computing power on the demanding problems, so we’ve put a tremendous amount of effort into a software suite to do this.”

Nvidia is attempting to exploit those architectural advantages with new software packages. Among them is MAGNet, which orchestrates the flow of information through devices to reduce energy-hogging data movement.

In developing a series of prototype chips for inference applications, Nvidia researchers learned that much of the power consumed by inference chips goes toward moving data rather than toward “payload” calculations.

That finding spurred development of MAGNet. The software “allows us to explore different organizations of deep learning accelerators and different data flows, different schedules for moving data from different parts of memory to different processing elements to carry out the computation,” Dally said.

MAGNet was used to simulate deep learning accelerators that feed data from local memory. Those simulations revealed that inference calculations accounted for only about one-third of power consumption; data movement accounted for most of the rest.

Applying those findings, Nvidia researchers added a storage layer that raised the share of energy going to “payload” computations to 60 percent. “We are now achieving 100 tera-ops per watt for inference,” Dally claimed, adding that the techniques will be incorporated into future versions of Nvidia’s deep learning accelerators.
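Why a storage layer shifts energy toward payload can be shown with a simple, generic data-reuse model. This is a hypothetical sketch, not MAGNet or Nvidia’s design: the per-operation energies and the reuse factor are made-up values, chosen only so that the baseline matches the one-third payload share reported above and the buffered case lands near 60 percent. The last lines convert the quoted 100 tera-ops per watt into energy per operation.

```python
# Hypothetical per-MAC energy costs in arbitrary units; none of these values come from Nvidia.
E_MAC = 1.0    # the "payload": one multiply-accumulate
E_FAR = 2.0    # fetching one MAC's operands from distant memory
E_NEAR = 0.4   # reading one MAC's operands from a small local buffer
REUSE = 8      # times each word fetched from far memory is reused from the buffer


def payload_share(data_energy_per_mac: float) -> float:
    """Fraction of total energy spent on arithmetic rather than data movement."""
    return E_MAC / (E_MAC + data_energy_per_mac)


# Without local storage, every MAC pays the full far-memory cost.
baseline = payload_share(E_FAR)
# With a storage layer, each far fetch is amortized over REUSE MACs,
# but every MAC still pays for a cheaper local read.
buffered = payload_share(E_FAR / REUSE + E_NEAR)
print(f"payload share without local storage: {baseline:.0%}")  # 33%
print(f"payload share with local storage:    {buffered:.0%}")  # 61%

# 100 tera-ops per watt means 1e14 ops per joule, i.e. 1e-14 J (10 femtojoules) per op.
joules_per_op = 1 / 100e12
print(f"energy per op at 100 TOPS/W: {joules_per_op * 1e15:.0f} fJ")
```

The lever in this model is the reuse factor. The more often a word fetched from far memory is reused from nearby storage, the smaller the data-movement term becomes relative to the fixed arithmetic cost.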

In the near term, Nvidia’s Mellanox unit is expected to double the bandwidth of the company’s NVLink and NVSwitch GPU interconnects. “Beyond that, the waters are very murky,” the Nvidia research chief acknowledged.

Working with researchers at Columbia University, the graphics vendor is exploring core telecommunications technologies such as dense wavelength division multiplexing (DWDM), a fiber-optic transmission technique that combines signals carried on different wavelengths into a single fiber.

Nvidia predicts a photonic interconnect could help break networking bottlenecks by delivering multiple terabits per second over links that fit along the edge of a chip, a 10-fold density increase over current interconnects.
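DWDM’s appeal is that bandwidth multiplies. Each wavelength in a fiber acts as an independent channel, so aggregate link capacity is the number of fibers times the wavelengths per fiber times the per-wavelength data rate. The sketch below uses purely hypothetical parameters, not figures from Nvidia or Columbia, to show how a handful of fibers can reach the multi-terabit range.

```python
def dwdm_link_tbps(fibers: int, wavelengths_per_fiber: int, gbps_per_wavelength: float) -> float:
    """Aggregate link bandwidth in Tb/s; each wavelength is treated as an independent channel."""
    return fibers * wavelengths_per_fiber * gbps_per_wavelength / 1000


# Hypothetical parameters, chosen only for illustration.
total = dwdm_link_tbps(fibers=4, wavelengths_per_fiber=32, gbps_per_wavelength=25)
print(f"aggregate bandwidth: {total:.1f} Tb/s")  # 4 * 32 * 25 Gb/s = 3.2 Tb/s
```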

Separately, researchers are focusing on scaling GPUs to address more AI and inference applications. The Legate package lets programmers run, for example, a Python program on platforms ranging from the Jetson Nano to Nvidia’s deep learning GPU clusters.

Once a Python program is modified to import Legate, the library is loaded and the remaining programming steps are automated. Nvidia claimed that, compared with an alternative task scheduler, Legate maintained performance while managing large GPU clusters. According to Dally, “Legate is able to do a better job of parallelizing the task and keeping all the GPUs busy.”
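The import-only change described above might look roughly like this. It is a minimal sketch that assumes Legate’s NumPy-compatible layer is installed under one of the module names it has been published as (`legate.numpy` in early releases, `cunumeric` later). Check these names against your installed version. The Jacobi solver and the problem size are hypothetical illustrations, not Nvidia’s benchmark. If neither Legate module is present, the same code runs on stock NumPy in a single process.

```python
# Module names below are assumptions about how Legate's NumPy layer is packaged.
try:
    import cunumeric as np            # later name of Legate's NumPy-compatible library
except ImportError:
    try:
        import legate.numpy as np     # name used by early Legate releases
    except ImportError:
        import numpy as np            # fallback: identical code on stock NumPy


def jacobi(a, b, iterations=50):
    """Hypothetical example workload: Jacobi iteration for a @ x = b."""
    d = np.diag(a)                    # diagonal entries of a
    r = a - np.diag(d)                # off-diagonal remainder
    x = np.zeros_like(b)
    for _ in range(iterations):
        x = (b - r.dot(x)) / d        # whole-array operations a distributed backend can partition
    return x


n = 100
# Adding n to the diagonal makes the matrix strictly diagonally dominant, so Jacobi converges.
a = np.random.rand(n, n) + n * np.eye(n)
b = np.random.rand(n)
x = jacobi(a, b)
print("converged:", bool(np.allclose(a.dot(x), b, atol=1e-6)))
```

The design point is that the solver contains no GPU- or cluster-specific code. Only the import line decides whether the array operations run on one CPU or are handed to Legate for parallel execution.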


George Leopold has written about science and technology for more than 30 years, focusing on electronics and aerospace technology. He previously served as executive editor of Electronic Engineering Times. Leopold is the author of "Calculated Risk: The Supersonic Life and Times of Gus Grissom" (Purdue University Press, 2016).