AWS Delivering Deeper Machine Learning Tools and Services to Customers

AWS re:Invent 2020 – Simplifying the use of machine learning and deep learning processes for enterprise, manufacturing and industrial customers is the goal of a series of new ML tools and services unveiled this week by Amazon Web Services at the company’s annual re:Invent tech conference.

The new tools include Amazon Neptune ML, which gives application developers access to ML that is purpose-built for graph data; Amazon Redshift ML, which enables algorithms to run on Amazon Redshift data without manual selection, building and training of ML models; and Amazon Lookout for Metrics, which uses ML to detect anomalies in metrics to protect the health of businesses.

Also unveiled were additional details about nine new ML capabilities within Amazon SageMaker, which were first discussed last week during the first week of AWS re:Invent 2020. The event continues to be held virtually due to the ongoing COVID-19 pandemic.

“Machine learning is one of the most disruptive technologies we will encounter in our generation,” Swami Sivasubramanian, the vice president of AI for AWS, said in the company’s first-ever ML keynote at the event on Tuesday (Dec. 8). “More than 100,000 customers use AWS for machine learning today, from creating a more personalized customer experience to developing personalized pharmaceuticals. These tools are no longer a niche investment. Our customers are applying machine learning to the core of their businesses.”

Many large enterprises are already using ML from AWS, said Sivasubramanian, including Domino's Pizza, which is using ML for predictive ordering; pharmaceutical company Roche, which is using Amazon SageMaker to accelerate the delivery of treatments and tailor medical experiences; and Kabbage, an American Express company, which is using ML for loan application processes.

“As you can see, our customers are innovating in virtually every industry,” he said.

That’s Where New Tools Come In

With each new idea for using ML in existing business processes, customers begin to see new needs for the technology, said Sivasubramanian. Those requests have been driving some of the new innovations and ML tools being developed by AWS, he said.

That’s how Amazon Neptune ML was inspired, based on the existing Amazon Neptune managed graph database service, he said.

Graph databases are often used to store complex relationships between data in graph models, and customers told AWS that they wanted to apply machine learning to applications that use graph data to build better recommendation engines and generate more accurate predictions for fraud detection, according to Sivasubramanian. But those customers lacked the time and skills to make that happen.

In response, AWS unveiled Amazon Neptune ML, which is built to enable easy, fast and accurate predictions for graph applications, he said. “Neptune ML does the hard work for you by selecting the graph data needed for training, automatically choosing the best ML model for selected data, exposing ML capabilities via simple graph queries and providing templates to allow developers to customize ML models for advanced scenarios. And with machine learning algorithms that are purpose-built for graph data, using SageMaker and the Deep Graph Library, developers can improve prediction accuracy by over 50% compared to that of traditional ML techniques.”

Amazon Neptune ML is now generally available.

Amazon Redshift ML

AWS already has ML tools for customers who want to use pre-trained models with Amazon's Aurora relational database and Amazon Athena interactive query services, said Sivasubramanian, but some customers don’t want to select training models at all. That’s when AWS engineers began thinking about existing Amazon Redshift customers who are already processing exabytes of data to power analytics workloads, he said.

“And customers want their analysts to leverage machine learning with that data in Redshift without having to deal with having the skills or the time to use machine learning,” said Sivasubramanian. “So, we asked ourselves, how can we make this easy for our Redshift customers?”

That’s where the new Amazon Redshift ML comes in, as well as the integration of Amazon SageMaker Autopilot into Amazon Redshift, to make it easy for data warehouse users to apply machine learning on that data, he said. It is in a preview version for now.

“Now, in addition to making ML more accessible to data analysts, it turns out that combining machine learning with certain types of data models can also lead to better predictions,” he said.

Amazon Lookout for Metrics

Another area where customers have been asking AWS for help with using ML is in anomaly detection, said Sivasubramanian. “It turns out that machine learning is really good at identifying subtle signals against a lot of noisy data. And that is data across a broad spectrum of industries, where machine learning can be applied to help understand and catch anomalies before it's too late.”

Organizations of all sizes use data to monitor trends and changes in their business metrics, from dips in product sales to sudden increases in qualified sales leads, he said. But traditional methods for detecting these anomalies, such as fixed thresholds, are error-prone and can lead to false alarms, missed anomalies and results that are not always actionable, he added.
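To illustrate why fixed thresholds struggle, here is a minimal Python sketch. It is not how Amazon Lookout for Metrics works internally, which the article does not describe. The data, the function names and the rolling z-score rule are all assumptions chosen for illustration. On a metric with a steady upward trend, a static band raises false alarms once normal values outgrow it, and it misses a sharp dip that still falls inside the band.

```python
from statistics import mean, stdev


def fixed_threshold_alerts(values, low, high):
    """Flag indices whose value falls outside a static [low, high] band."""
    return [i for i, v in enumerate(values) if v < low or v > high]


def rolling_zscore_alerts(values, window=7, z_limit=3.0):
    """Flag indices that deviate strongly from the preceding `window` points."""
    alerts = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history gives no scale to compare against; skip it.
            continue
        if abs(values[i] - mu) / sigma > z_limit:
            alerts.append(i)
    return alerts


# Hypothetical daily sales: a steady upward trend with small alternating noise.
daily_sales = [100 + 3 * i + (2 if i % 2 == 0 else -2) for i in range(30)]
daily_sales[25] = 120  # a sudden dip on a day that would normally be about 173

fixed_alerts = fixed_threshold_alerts(daily_sales, low=80, high=150)
rolling_alerts = rolling_zscore_alerts(daily_sales)

print("fixed threshold:", fixed_alerts)
print("rolling z-score:", rolling_alerts)
```

In this made-up series the static band flags every ordinary day after the trend crosses 150 and never flags the dip at index 25, because 120 is still inside the band. The rolling comparison flags only that dip. A simple z-score has its own weaknesses, such as seasonality and inflated variance right after an outlier, which is part of the case for learned detectors.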

To solve this problem for customers, AWS has unveiled Amazon Lookout for Metrics, which uses machine learning to detect anomalies in virtually any time series-driven business and operational metrics, such as revenue performance, purchase transactions, and customer acquisition and retention rates, said Sivasubramanian. It is also in a preview version for now.

“Lookout for Metrics detects unexpected changes in your metrics with high accuracy by applying the right algorithm to the right data,” he said. Using 25 built-in connectors for data and alerts, it identifies anomalies and also helps find their root causes so customers can take quick action to remediate issues or react to opportunities.

Other Improvements in ML – Faster Training Models

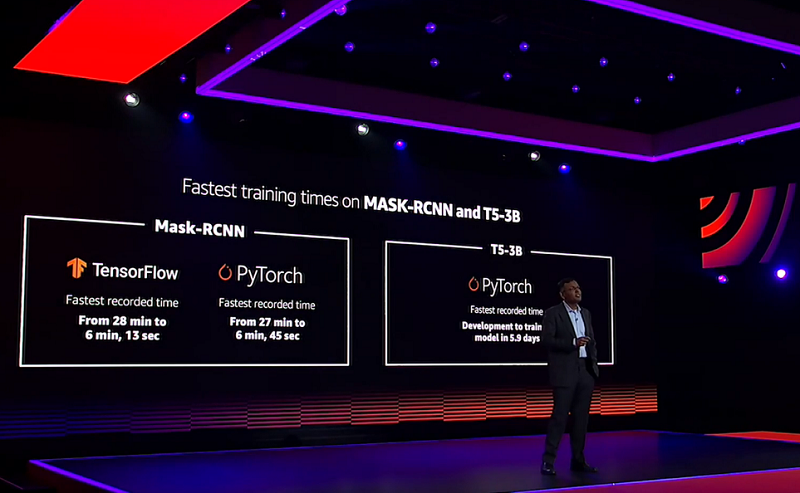

AWS customers also tell the company that they need more than just the best hardware to train large models, Sivasubramanian said. One example is in the area of ML training models. Two popular deep learning training models, Mask R-CNN and T5, are commonly used for distributed training, but there can be challenges, he added.

“To speed up training times for models with large training data sets, like Mask R-CNN, you can split your data sets across multiple GPUs, commonly known as data parallelism,” said Sivasubramanian. “When training large models like T5, which are too big for even the biggest, most powerful GPUs, you can split the model across multiple GPUs, commonly known as model parallelism. But doing this is difficult and requires a high level of expertise and experimentation, which can take weeks even for expert practitioners.”
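The idea behind data parallelism can be sketched in a few lines of plain Python. This is a toy illustration only and does not use the Amazon SageMaker libraries or any GPU. The one-parameter linear model, the simulated "workers" and all function names are assumptions made for the example. Each worker holds a full copy of the model, computes a gradient on its own shard of the data, and the gradients are then averaged before a single shared update.

```python
def gradient(w, xs, ys):
    """Gradient with respect to w of the mean squared error of the model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def shard(items, n_workers):
    """Split a list into n_workers equal contiguous shards.

    Assumes len(items) is divisible by n_workers.
    """
    size = len(items) // n_workers
    return [items[k * size:(k + 1) * size] for k in range(n_workers)]


def data_parallel_step(w, xs, ys, n_workers, lr):
    """One training step with the batch split across simulated workers."""
    # Every worker has the same weight w but sees only its own shard.
    local_grads = [
        gradient(w, shard_x, shard_y)
        for shard_x, shard_y in zip(shard(xs, n_workers), shard(ys, n_workers))
    ]
    # Averaging the local gradients plays the role of an all-reduce.
    avg_grad = sum(local_grads) / n_workers
    return w - lr * avg_grad


# Hypothetical training data generated from y = 3 * x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [3.0 * x for x in xs]

# With equal-sized shards, the averaged gradient matches the full-batch gradient.
single_grad = gradient(0.0, xs, ys)
parallel_grad = sum(
    gradient(0.0, sx, sy) for sx, sy in zip(shard(xs, 4), shard(ys, 4))
) / 4

w = 0.0
for _ in range(50):
    w = data_parallel_step(w, xs, ys, n_workers=4, lr=0.01)

print("full-batch gradient:", single_grad)
print("averaged shard gradient:", parallel_grad)
print("learned weight:", w)
```

Because the shards are equal in size, the averaged gradient matches the full-batch gradient, so the split changes where the work happens rather than what is learned. Model parallelism, by contrast, places different parts of the model itself on different devices and is not shown here.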

To handle this task for customers, AWS added new capabilities to Amazon SageMaker that, with only a few lines of additional code in PyTorch and TensorFlow training scripts, automatically apply data parallelism or model parallelism, allowing customers to train their models faster, said Sivasubramanian.

“Data parallelism with Amazon SageMaker allows you to train 40% faster,” he said. “Model parallelism, which used to take dedicated research labs weeks of effort and hand-tuning training code, now takes only a few hours.”

Also announced were further details on the nine new Amazon SageMaker tools first mentioned last week at AWS re:Invent 2020.

All the new AWS products are aimed at improving the capabilities that developers have in their hands to further drive ML services inside their companies, said Sivasubramanian.

“Deep learning is still the domain of expert practitioners, and is simply too hard for most people to do,” he said. “For any technology to have significant impact, builders need to be given the shortest possible path to success. Having the tools for your builders to be able to satisfy and explore their ideas quickly, without barriers, is a significant accelerator.”

On Monday (Dec. 7) at the re:Invent 2020 event, AWS announced that it is moving to expand industrial AI use with five new industry-focused AI tools that can watch over manufacturing plants 24/7 to detect problems on assembly lines and other systems, while also predicting needed maintenance tasks. The new AI tools are machine learning services that can help industrial and manufacturing customers bring machine intelligence into their production processes for improvements in operational efficiency, quality control, security, and workplace safety, according to AWS. Using machine learning, sensor analysis, and computer vision capabilities, the tools aim to make it easier for manufacturing and industrial operations to address common technical challenges by using cloud-to-edge industrial machine learning services.