AI Struggles to Outperform Diagnosticians

Among the most promising medical applications for artificial intelligence is image recognition as a diagnostic tool. AI algorithms are approaching the capabilities of radiologists, and groups like the American College of Radiology have formed data science units designed to train, validate and deploy algorithms in clinical settings.

The gold standard for medical diagnostics, however, is developing AI algorithms that can outperform human radiologists. “The level of accuracy of an algorithm is its number one selling point,” concludes IDTechEx.

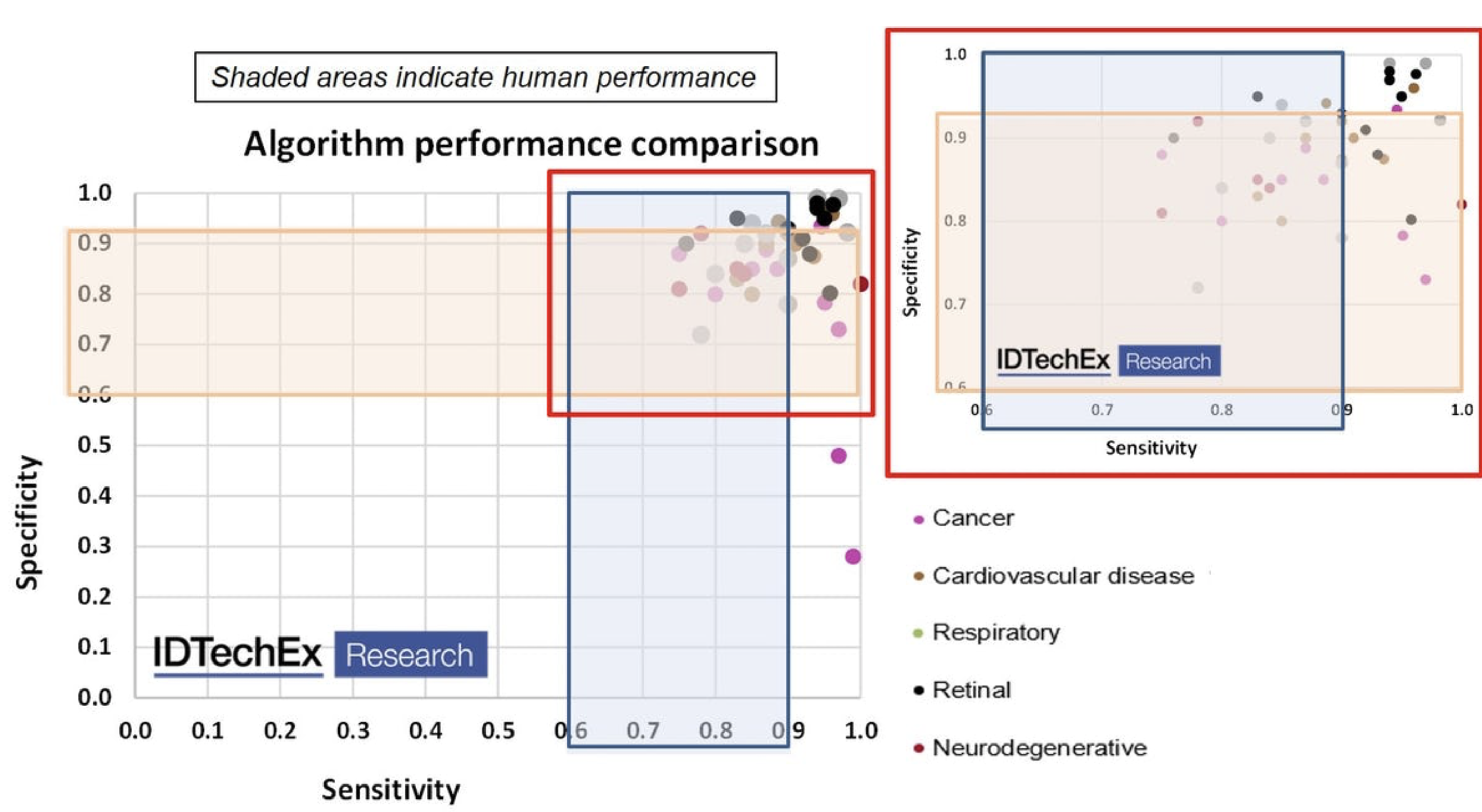

The market tracker gathered performance metrics for more than 35 AI algorithms, finding that most are equal to human image scanners for detecting diseases. But good enough isn’t good enough for clinical use, the analyst added.

“Ultimately, AI must be more reliable and more accurate than even the most highly trained experts in order to gain credibility as a decision support tool.”

The survey of algorithms used for image recognition in medical diagnostics found that most were in the range of human performance for sensitivity, the ability to detect positive cases. Specificity, the ability to correctly rule out negative cases, also was in the range of human radiologists. A few algorithms demonstrated “super-human sensitivity and specificity,” IDTechEx reported this week.
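For readers unfamiliar with the two metrics, the short Python sketch below shows how sensitivity and specificity are typically computed from a set of binary diagnoses. It is an illustration only: the function name and the sample labels are made up for this example and do not come from the IDTechEx survey.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, where 1 = disease present."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # diseased, flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # diseased, missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # healthy, cleared
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # healthy, flagged

    # Sensitivity: share of actual positive cases that were detected.
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    # Specificity: share of actual negative cases that were correctly cleared.
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Hypothetical ground truth and algorithm output for ten scans.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]

sens, spec = sensitivity_specificity(y_true, y_pred)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In this made-up sample the algorithm misses one of four diseased scans and wrongly flags one of six healthy ones, so both figures fall below 1.0.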

“AI has the potential to outperform humans at detecting disease from medical images, but most AI companies have not yet been able to achieve this,” the market analyst added.

Groups like the American College of Radiology’s Data Science Institute have formed AI labs to encourage clinicians to use patient data in training AI algorithms for specific medical imaging applications. Thus far, those efforts confirm the IDTechEx findings: “Many of the algorithms developed thus far have proven brittle in actual clinical use when tried at different institutions,” the radiology group recently reported.

Hence, the college is working with AI vendors, most notably graphics processing specialist Nvidia (NASDAQ: NVDA), to develop new AI-based tools for medical imaging. Nvidia announced in December 2019 it was expanding its Clara AI platform aimed at radiologists to include data protections that would allow clinicians to collaborate while ensuring the privacy of patient data.

Those initiatives meet a pressing need, IDTechEx said. Boosting the performance of medical imaging algorithms requires diverse training sets that would broaden radiology applications. Meanwhile, diagnostic specificity would be improved by adding more “negative cases” within training data, the analyst added.

Data quality also remains an issue in developing deep learning algorithms for radiology. Hence, there is a push for using higher-resolution imagery to boost algorithmic performance.

All three improvements (more diverse training sets, more negative cases and higher-resolution imagery) require scale, which observers said would provide access to more data and algorithmic improvements that would eventually outperform human radiologists. In particular, objective deep-learning algorithms could help overcome human “confirmation bias” when scanning imagery, experts note.

Still, the authors of the medical AI survey say current algorithm development has mostly stalled. “The days of leaps in performance of image recognition are over, barring radical innovation in algorithm techniques,” they note.

“Gains in precision, recall and other metrics will henceforth be incremental,” the authors added. The emphasis has shifted to “showing that the AI is applicable to as wide a population set—gender, age, ethnicity, tissue density, etc.—as possible.”
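One way to picture the shift the authors describe is to report precision and recall separately for each patient subgroup rather than as a single overall figure. The Python sketch below assumes a simple record format of (subgroup, true label, predicted label); the function name, subgroup names and data are hypothetical and are not drawn from the survey.

```python
from collections import defaultdict


def precision_recall_by_group(records):
    """Compute (precision, recall) per subgroup.

    records: iterable of (group, y_true, y_pred), with 1 = disease present.
    A metric is None when its denominator is zero for that subgroup.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for group, t, p in records:
        c = counts[group]  # ensures every subgroup appears in the result
        if p == 1 and t == 1:
            c["tp"] += 1
        elif p == 1 and t == 0:
            c["fp"] += 1
        elif p == 0 and t == 1:
            c["fn"] += 1

    results = {}
    for group, c in counts.items():
        predicted_pos = c["tp"] + c["fp"]
        actual_pos = c["tp"] + c["fn"]
        precision = c["tp"] / predicted_pos if predicted_pos else None
        recall = c["tp"] / actual_pos if actual_pos else None
        results[group] = (precision, recall)
    return results


# Hypothetical scans split by tissue density.
records = [
    ("dense", 1, 1), ("dense", 1, 0), ("dense", 0, 1), ("dense", 0, 0),
    ("non_dense", 1, 1), ("non_dense", 1, 1), ("non_dense", 0, 0), ("non_dense", 0, 0),
]

by_group = precision_recall_by_group(records)
for group, (precision, recall) in by_group.items():
    print(group, precision, recall)
```

In this invented sample the algorithm scores perfectly on the non-dense subgroup but only 0.5 on both metrics for dense tissue, the kind of gap that a single aggregate number would hide.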


George Leopold has written about science and technology for more than 30 years, focusing on electronics and aerospace technology. He previously served as executive editor of Electronic Engineering Times. Leopold is the author of "Calculated Risk: The Supersonic Life and Times of Gus Grissom" (Purdue University Press, 2016).