Photonics Processor Aimed at AI Inference

Source: Lightmatter

Innovation in silicon photonics is accelerating as demand grows for faster, lower-power chip interconnects for traditionally power-hungry applications like AI inferencing.

With that in mind, scientists at Massachusetts Institute of Technology launched a startup in 2017 called Lightmatter Inc. to develop silicon photonic processors. Another goal was leveraging optical computing to “decouple” AI processing from Moore’s law scaling that, according to the company founders, literally produces more heat than light.

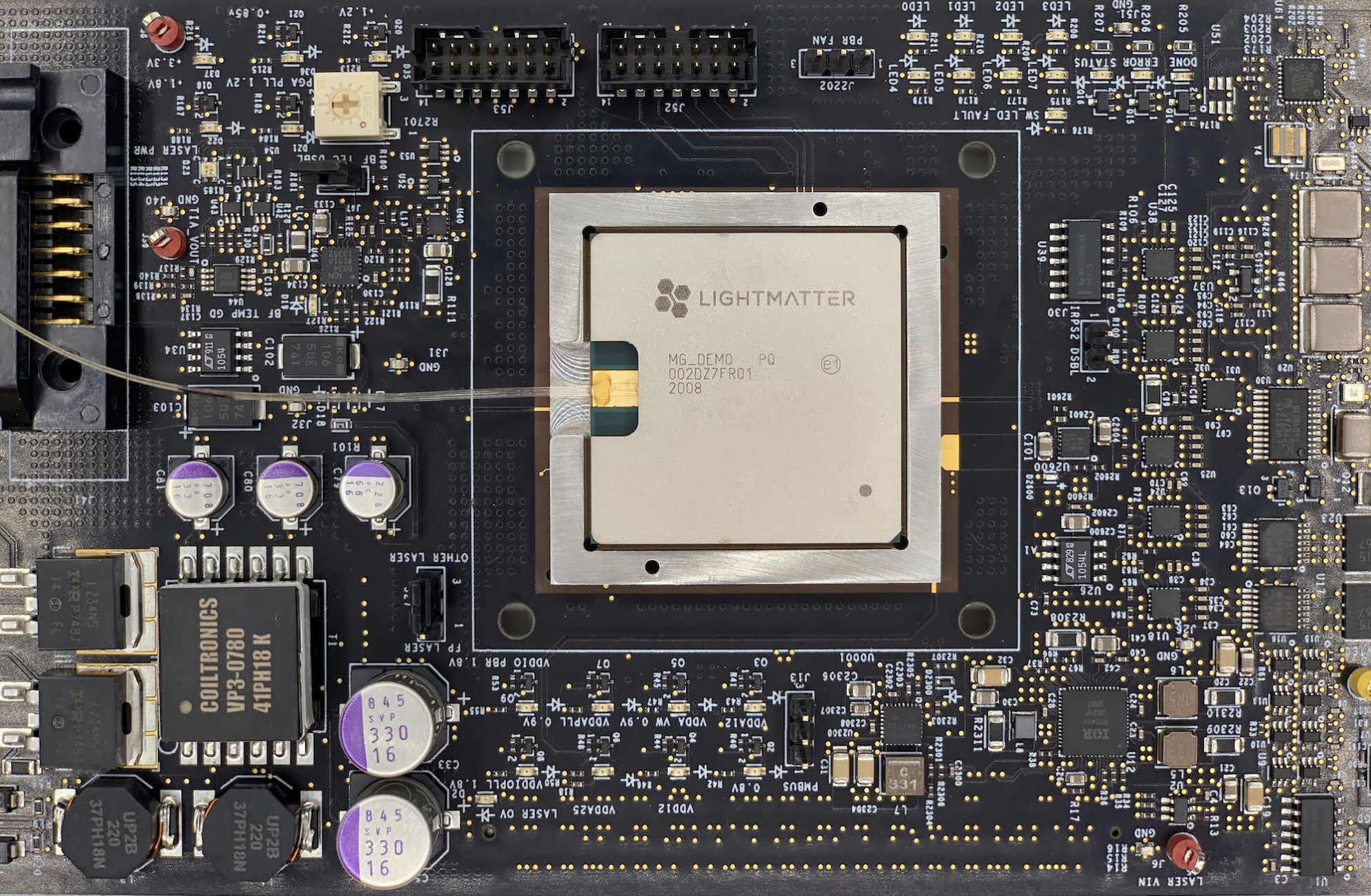

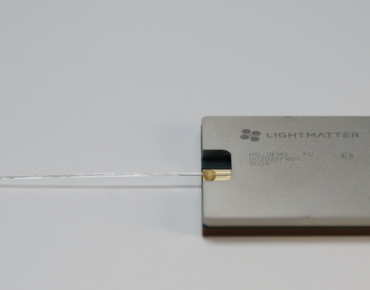

Lightmatter announced an AI photonic “test” chip during this week’s Hot Chips conference, positioned as an AI inference accelerator that uses light to process and transport data. The 3D module incorporates 12-nm and 90-nm ASICs, the latter supporting photonics processing steps such as laser monitoring and light distribution.

The design also incorporates a device called a Mach-Zehnder interferometer, which is used to encode data with light by converting an electrical signal into a change in the “brightness” of light traveling between input and output waveguides. The architecture is akin to different colored beams passing through a prism, allowing processors to calculate on different wavelengths of light simultaneously.
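To make the encoding step concrete, here is a minimal sketch of how an interferometer can turn a number into brightness. It assumes an ideal, lossless, balanced Mach-Zehnder interferometer, for which the fraction of input power reaching the output is cos²(Δφ/2), where Δφ is the phase difference applied between the two arms. The function names, the one-value-per-wavelength model and the sample values are illustrative assumptions, not details of Lightmatter's design.

```python
import math


def mzi_transmission(phase_shift_rad: float) -> float:
    """Fraction of input power at the output of an ideal, lossless,
    balanced Mach-Zehnder interferometer (textbook model)."""
    return math.cos(phase_shift_rad / 2.0) ** 2


def phase_for_value(value: float) -> float:
    """Phase shift that encodes a value in [0, 1] as output brightness.

    Hypothetical helper: inverts the cos^2 response above.
    """
    if not 0.0 <= value <= 1.0:
        raise ValueError("value must lie in [0, 1]")
    return 2.0 * math.acos(math.sqrt(value))


def encode(values, input_power=1.0):
    """Encode one value per wavelength channel.

    Assumption: channels do not interact, so each wavelength is
    modeled as an independent entry in a list.
    """
    return [input_power * mzi_transmission(phase_for_value(v)) for v in values]


if __name__ == "__main__":
    # Four hypothetical wavelength channels carrying four values at once.
    channel_values = [0.0, 0.25, 0.5, 1.0]
    for value, power in zip(channel_values, encode(channel_values)):
        print(f"value={value:.2f} -> output power={power:.4f}")
```

In this simplified model a zero phase shift passes all of the light and a phase shift of π blocks it, so intermediate values map to intermediate brightness. Real devices add loss, noise and finite extinction, which the sketch ignores.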

The upshot is that less energy is required to move data, providing a power-efficient alternative to traditional processing and interconnects used for AI inference workloads. Those traditional methods are forecast to account for more than 8 percent of global energy usage by 2030, according to Energy Department projections cited by the photonics startup.

Along with harnessing the speed of light, the chip developer is pitching its photonics chip as an energy-saving alternative to CPUs, GPUs and ever-more datacenters, which the company characterizes as a “dead end path along the road of computational progress.”

Lightmatter claims its photonics processor can do for free what would otherwise require tens of watts for “passive data transport” using CPUs or GPUs. “Lightmatter’s optical processors are dramatically faster and more energy efficient than traditional processors,” said Nicholas Harris, Lightmatter’s founder and CEO.

Moreover, the three-year-old chip startup notes that the growth rate for AI workloads, measured in petaflops per day, has already exceeded Moore’s law scaling by a factor of five. The alternative to photonics processing would be “cover[ing] the planet with datacenters to power AI,” company executives said.

Boston-based Lightmatter said its design uses a 3D-stacked chip package containing more than 1 billion FinFET transistors along with photonic arithmetic units and data converters. The photonic processor runs PyTorch, TensorFlow and other standard machine learning frameworks to generate AI algorithms.

Demand for silicon photonics technology is forecast to grow, with some regions expanding at a 25-percent annual clip as optical transmission technologies also make their way into datacenters and sensor deployments. Industry tracker Global Market Insights predicts the silicon photonics sector also will be driven by 5G wireless deployments that will require higher network bandwidth.

Along with startups like Lightmatter, other silicon photonics producers include Broadcom, Cisco Systems, GlobalFoundries, Hamamatsu Photonics, IBM, Intel Corp., Juniper Networks, NeoPhotonics Corp. and STMicroelectronics.

Other photonics startups have taken different approaches. Ayar Labs introduced optical chiplet technology at last year’s Hot Chips that combines optics with Intel Stratix 10 FPGAs on the same chip using standard CMOS fabrication.

Intel (NASDAQ: INTC) demonstrated a 400-gigabit Ethernet photonics transceiver last year. Other optical devices in development have approached 800 Gb/s throughput.

Lightmatter said it expects to release a production version of its AI photonic processor by fall 2021.

George Leopold has written about science and technology for more than 30 years, focusing on electronics and aerospace technology. He previously served as executive editor of Electronic Engineering Times. Leopold is the author of "Calculated Risk: The Supersonic Life and Times of Gus Grissom" (Purdue University Press, 2016).