HPE Pins AI Strategy on Pay-as-You-Go Consumption Model

HPE, like all the system vendors, has big plans to sell massive amounts of compute capability to companies to run machine learning and deep learning workloads that help transform their businesses. But the tech firm is taking a slightly different tack toward AI than its brethren, one that revolves almost entirely around the service delivery model.

“In the next three years, HPE will be a consumption-driven company and everything we deliver to you will be available as a service,” HPE CEO Antonio Neri said during a keynote this week at HPE Discover. “More and more of you tell me that you don’t want to spend resources managing your infrastructure, whether on the edge, in the cloud, or in the data center, whether it’s cloud-native or legacy environments. You just want to focus on your application data and your business outcomes.”

Everything for HPE means everything, apparently. Even a massive HPE Apollo server loaded to the brim with RAM and GPU cards, the sort of machine designed to train massive deep learning models from petabytes of source material, will be sold to customers as a service. From enterprise-class storage and composable infrastructure to Cray HPC hardware and 5G edge solutions, every single thing that HPE sells will be available through the pay-as-you-go consumption model.

Call it the service-ization of HPE.

Everything that HPE makes — even servers and storage — will be sold through the as-a-service delivery method by 2022, says CEO Antonio Neri at HPE Discover.

Clearly, HPE’s pivot to services is designed to blunt the ongoing advance of public clouds. Amazon Web Services, the biggest of the clouds, is growing at close to a 50% annual rate at the moment as it attracts thousands of customers, adding billions to its bottom line through a veritable smorgasbord of enterprise IT and AI offerings. Microsoft Azure and Google Cloud, although smaller than AWS in terms of revenue and customers, are growing even faster.

HPE is gambling that IT buyers will tire of public clouds when they discover that putting all their data and application eggs in one cloud basket restricts their ability to move agilely with respect to their digital transformation strategies. “We know the world is hybrid and the best experience will win,” Neri said. “You want choice and flexibility to place your data in the place that makes the most sense. Our competitors want to lock you into one flavor of cloud…They are all what I call walled gardens, each one very unique.”

A key cog in HPE’s hybrid wheel of data-driven digital transformation will be BlueData, the Silicon Valley big data startup that it acquired last year. BlueData develops software that essentially containerizes big data environments. Once an application is exposed as a Docker container managed via Kubernetes, BlueData’s software allows customers to move it wherever they want, whether that’s on-premises infrastructure, the public cloud, or a combination of the two.

“We enable hybrid,” Jason Schroedl, vice president of marketing for BlueData, told EnterpriseAI's sister site Datanami at HPE Discover this week. “BlueData will run on any infrastructure: any third-party hardware, storage or servers, any public cloud.”

BlueData started out life helping companies be more agile with their Hadoop investments. When Spark and Kafka became popular ways of harnessing big data, the company was ready with support for those frameworks. Now the company is seeing more interest in creating agile deployment scenarios around machine learning and deep learning frameworks, such as H2O.ai, TensorFlow, PyTorch, and MXNet.

“That’s one of things we address is distributed TensorFlow. Distributed TensorFlow is more challenging,” Schroedl said. “It’s easy enough for one data scientist to deploy TensorFlow on Kubernetes or containers running on a workstation under the desk. But when you get into a larger scale distributed environment, that’s where we can really help.”

While BlueData will support containerized deployment of big data and AI frameworks on any servers or clouds, the organization is pursuing a “better together” strategy with HPE, which bought BlueData in November. That includes putting together reference frameworks atop HPE servers that can address common server requirements.

“We’re going to market with the server team at HPE in a joint offering with BlueData running on GPU-enabled Apollo servers, the Apollo 6500, along with GPU-enabled ProLiant DL servers,” Schroedl said. “So that customer can take either their investment with GPU-enabled HPE servers…and be able to make those available to multiple different data science teams and allocate right-size GPU resources in containers, for those different projects.”
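For readers wondering what "right-size GPU resources in containers" can look like in practice, here is a minimal sketch of the generic Kubernetes mechanism, not BlueData's own software or API. It builds Pod manifests that cap each team's container at a fixed number of GPUs using the `nvidia.com/gpu` resource name exposed by Nvidia's Kubernetes device plugin. The team names, image URL, GPU counts, and the `gpu_pod_manifest` helper are all hypothetical and chosen only for illustration.

```python
import json


def gpu_pod_manifest(team: str, image: str, gpus: int) -> dict:
    """Build a Kubernetes Pod manifest that requests a fixed number of GPUs.

    Hypothetical helper for illustration; it only assembles a dictionary
    and does not talk to a cluster.
    """
    if gpus < 1:
        raise ValueError("request at least one GPU")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": f"{team}-training", "labels": {"team": team}},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "trainer",
                    "image": image,
                    # GPUs are requested under "limits"; "nvidia.com/gpu" is
                    # the resource name used by Nvidia's device plugin.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Hypothetical teams and GPU allocations (assumptions, not from the article).
team_gpus = {"fraud": 1, "vision": 4}
manifests = [
    gpu_pod_manifest(team, "example.com/ml/tensorflow:latest", count)
    for team, count in team_gpus.items()
]

# Print the first manifest as JSON, which kubectl also accepts.
print(json.dumps(manifests[0], indent=2))
```

Each team gets its own container with its own GPU ceiling, so a shared pool of GPU-enabled servers can be divided among projects without one team's job crowding out another's.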

The company also has a partnership with H2O.ai to streamline the delivery of the proprietary Driverless AI offering on containers. HPE also works with Dataiku and other data science platform vendors to ensure their software can be managed via BlueData to streamline usage and deployment for HPE customers. (HPE actively avoids being a software company, but BlueData’s infrastructure-enablement play for big data and AI workloads evidently was too good to pass up.)

BlueData will be called on to help HPE customers stay on top of the constantly shifting sands of the enterprise AI market. “We can easily containerize any of these new tools that are available and continue to build out the app store of container images,” Schroedl said. “We’ll be working with partners like Nvidia, which has the NGC container registry. We’ll make that available within our app store.”

Later this year, BlueData will become available in GreenLake, which is HPE’s offering for pay-as-you-go consumption of compute. That will elevate the company’s strategy for giving customers access to AI and big data number-crunching as a service. This is part and parcel of HPE’s strategy to make AI a service-enabled offering, said Patrick Osborne, vice president and general manager of HPE’s big data business.

“What we’re trying to do from the vision perspective in the big data analytics group,” Osborne told Datanami, “is we want to provide an experience for the customer that’s very similar to what they get from top of cloud, like PaaS [platform as a service] in a consumption layer, so they have the tools, they have the services they need, and they can spin those up, whether it’s on prem or off prem.”

While HPE is allergic to software, it’s very fond of solutions that bring everything together for customers (who presumably are willing to pay a premium for having somebody else do the hard work of stitching everything together). This is evident in its GPU-as-a-service offering, which it unveiled last month, as well as the “everything is services” mantra that was repeated continuously at HPE Discover this week.

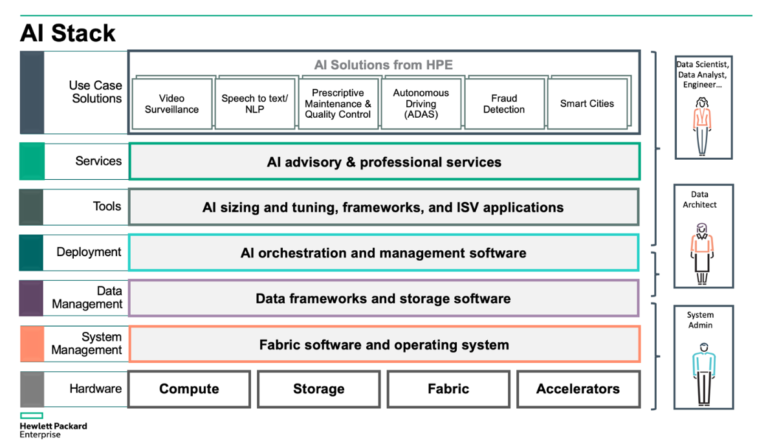

Eventually, the company plans to offer a variety of tailored AI solutions to customers, presumably with help from business partners. Instead of combining the hardware, software, and human know-how to develop one-off prescriptive maintenance, fraud detection, smart cities, and video surveillance solutions, HPE customers will be able to get access to the solution in a pay-by-the-sip consumption model.

“I want to be able to provide infrastructure, software, and services in a consumption model so people can do analytics as a service, or AI as a service, or GPU as a service, within their organization,” Osborne said. “I want to give them the fundamental building blocks to be able to do that for their data scientists and engineers. That’s what some of these big customers [like GlaxoSmithKline, GM Financial, and Barclays] are doing with BlueData.”

As the world’s businesses shift from a data-accumulation mindset into a data-exploitation phase, those who can innovate the quickest have an advantage. HPE clearly hopes to be an independent supplier of end-to-end big data and AI solutions that companies turn to when they’re ready to take their big data and AI strategies to the next level. The company hopes to make this as easy to consume as possible with GreenLake and pay-as-you-go payment models, with professional services from HPE Pointnext filling in the gaps that inevitably arise.

“Enterprises who are able to instantly extract outcomes from all their data will be simply unstoppable,” HPE CEO Neri said. “That’s why our strategy is to accelerate your enterprise with data centric and cloud-enabled solutions that are optimized and delivered as a service so you can unlock the value from all your data, wherever it may live.”

This article first appeared on sister site Datanami.

Alex Woodie has written about IT as a technology journalist for more than a decade. He brings extensive experience from the IBM midrange marketplace, including topics such as servers, ERP applications, programming, databases, security, high availability, storage, business intelligence, cloud, and mobile enablement. He resides in the San Diego area.