Alexa Gets a Filter

Amazon’s ubiquitous voice-controlled Alexa home assistant has been re-trained by researchers using Nvidia GPUs designed for AI training and inference. The overhaul resulted in what company and university researchers report is a statistically significant improvement in Alexa’s speech recognition model.

More significantly for consumers, a new approach that helps the device ignore speech not intended as a request promises to keep Alexa in standby mode so that it does not “wake up” accidentally.

In the category of unintended consequences, Alexa can be activated by, for example, a visit from a friend named “Alexa.” Researchers from Amazon (NASDAQ: AMZN) and Johns Hopkins University’s Center for Language and Speech Processing sought a way for Alexa to ignore background noise and “interfering speech” that could mistakenly rouse the home assistant to action.

Their model, dubbed “End-to-End Anchored Speech Recognition,” or ASR, focuses on the “wake-up” word in an audio stream, which the researchers refer to as the “anchored segment.”

Using Nvidia’s (NASDAQ: NVDA) V100 GPUs and a speech recognition tool kit based on TensorFlow, they trained their algorithm using 1,200 hours of English-language data from Amazon Echo, the smart speaker that connects with Alexa. The resulting neural network was trained to recognize the wake word “Alexa” and to ignore “interfering” speech or background noise.

The researchers proposed two models for their anchored speech recognition framework, focusing either on speech directed specifically at Alexa or entirely on interfering speech. The two types, or frames, were not mixed.

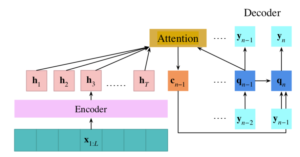

In the researchers’ ASR system, both speech types were processed using a pair of “attention-based” encoder-decoder models (see diagram). The attention mechanism provided a means of decoding only desired speech within an audio stream.

The result was a relative reduction of 15 percent in the word error rate using Alexa live data with background noise such as interfering speech. The researchers also reported little or no degradation of so-called “clean” speech during the filtering process.
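For readers unfamiliar with the metric, the sketch below shows how a word error rate and a relative reduction are commonly computed. It is an illustration only: the function names and the sample figures (a baseline of 20 percent falling to 17 percent) are assumptions chosen to make the arithmetic visible, not numbers or code from the study.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction by which the improved error rate is lower than the baseline."""
    return (baseline - improved) / baseline


# Hypothetical transcript pair: one dropped word out of four reference words.
sample_wer = word_error_rate("alexa play some music", "alexa play music")

# Hypothetical error rates, chosen only to illustrate a 15 percent relative drop.
sample_gain = relative_reduction(0.20, 0.17)
```

A relative reduction is measured against the baseline error rate, so a 15 percent relative improvement is smaller in absolute terms than 15 percentage points.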

“The challenge of this task is to learn a speaker representation from a short segment corresponding to the anchor word,” the investigators said. “We implemented our technique using two different neural-network architectures. Both were variations of a sequence-to-sequence encoder-decoder network with an attention mechanism” that accounts for speaker information and the state of the decoder, they added.
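The idea of an attention mechanism that accounts for both speaker information and decoder state can be pictured with a toy example. The sketch below is not the researchers' architecture: it assumes, purely for illustration, that the speaker representation is the average of the encoder frames covering the anchor word, and that each frame is scored by adding its similarity to the decoder state and to that average. All names (`anchored_attention`, `anchor_len`, and so on) are hypothetical.

```python
import math


def mean_vector(frames):
    """Average a list of equal-length feature vectors."""
    dim = len(frames[0])
    return [sum(frame[d] for frame in frames) / len(frames) for d in range(dim)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def anchored_attention(frames, anchor_len, decoder_state):
    """Weight frames by similarity to the decoder state and to the anchor speaker.

    Assumption: the first `anchor_len` frames cover the wake word, and their
    mean serves as a crude speaker representation.
    """
    anchor = mean_vector(frames[:anchor_len])
    scores = [dot(decoder_state, frame) + dot(anchor, frame) for frame in frames]
    weights = softmax(scores)
    dim = len(frames[0])
    context = [sum(w * frame[d] for w, frame in zip(weights, frames))
               for d in range(dim)]
    return weights, context


# Toy input: the first two frames are the wake word, the third resembles the
# same speaker, and the fourth resembles a different (interfering) speaker.
toy_frames = [[1.0, 0.0], [1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
toy_weights, toy_context = anchored_attention(toy_frames, 2, [0.0, 0.0])
```

In this toy setup the frame that resembles the anchor speaker receives a larger attention weight than the frame that does not, which is the intuition behind decoding only the desired speech.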

George Leopold has written about science and technology for more than 30 years, focusing on electronics and aerospace technology. He previously served as executive editor of Electronic Engineering Times. Leopold is the author of "Calculated Risk: The Supersonic Life and Times of Gus Grissom" (Purdue University Press, 2016).