The Adaptive FPGA Platform that Might Carry AI – and Everything Else – Forward

Xilinx's Victor Peng at Hot Chips

Change is a constant, the pace of change accelerates, adapt to change or wither....

Right, sorry, these are givens. But still, these days, it bears repeating because creative destruction and innovation adoption in high-end data center computing seem to be happening in real time, as though we can see historical progression roll by before our eyes, like a time-lapse video showing 24 hours of weather in 15 seconds.

Not three years have passed since the SC15 conference, but two memories stand out that highlight how much the industry has changed in that 33-month span.

One is of a senior Intel executive delivering what can only be called (we hate to be cruel, but…) a cringe-making keynote declaring Moore’s Law to be alive, kicking and charging ahead.

Yeah, you go, Moore’s Law!

The other: in an interview with a senior manager of a leading server vendor, the refusal to countenance the possibility of chips other than Intel’s in the company’s data center servers. Queries about straying off the Intel ecosystem were met with a shake of the head even before the question could be completed.

Talk to the hand, pal. Intel all the way.

Today, all of that seems to be from an earlier, simpler age. Today, it’s all about creating a composable, mix-and-match infrastructure (see Dell EMC’s new servers; see Gen-Z and OpenCAPI and other new fabric consortia). Vendors are working feverishly to allow IT managers to pick and choose among a range of disaggregated technologies and architectures needed for a given problem that, increasingly, involves some amount of machine learning and deep neural networks.

This is the vision behind Xilinx’s Everest adaptive compute acceleration platform (ACAP), announced earlier this year and for which the company rolled out a bevy of technology hype lingo, calling it a revolutionary new product category, a breakthrough, a technology disruption. Marketing hype, yes, but we also admit that Everest – assuming, of course, that it works – has a shot at effecting profound change in data center computing, starting with the hyperscalers. Sorry to gush, but conceptually, there’s no denying ACAP is worthy of our sitting up and taking notice.

One industry watcher who’s impressed is Patrick Moorhead, founder, Moor Insights & Strategy, who (at the time of the announcement) said “this is what the future of computing looks like.”

“We are talking about the ability to do genomic sequencing in a matter of a couple of minutes, versus a couple of days,” he said. “We are talking about data centers being able to program their servers to change workloads depending upon compute demands, like video transcoding, during the day and then image recognition at night. This is significant.”

Xilinx, of course, is the FPGA market leader with about a 60 percent share, with Intel Altera-based FPGAs garnering most of the rest. But Everest ACAP goes beyond FPGAs. Combined with the emergence of domain-specific architectures (DSAs), it’s an integrated, multi-core, heterogeneous compute platform-on-a-chip that can be changed dynamically, during operation, at the hardware level.

At the Hot Chips conference in Cupertino last week, Victor Peng, Xilinx CEO and president for six months after 10 years at the company and a 40-year industry veteran, expanded on the ACAP idea under the rubric of “Adaptable Intelligence,” placing it into historical perspective and discussing workload use cases for which ACAP is suited.

In a general sense, ACAP is built for intelligent applications driven by increasingly powerful machine learning algorithms that continually get better at their assigned tasks and are freely distributed throughout the infrastructure. And while AI (broadly defined) is still a toddler, the ACAP vision is to provide a platform upon which AI can evolve to higher levels of maturity.

“We’ve been talking for decades about how the world is going to become more connected, about the idea of pervasive intelligence,” Peng said, “and the exciting thing is it’s really happening. Intelligence in the cloud, at the edge and at the end points.”

In the context of autonomous vehicles, he said, the car is at the edge. For certain computing needs it can communicate with the cloud and upload data for longer-term storage for AI training and other purposes, while the car’s various sensors, cameras, LIDAR and RADAR capabilities, and so forth, are the end points. AI drives the car, and most of the processing has to be done in real time, so the car becomes, as widely noted, a data center on wheels.

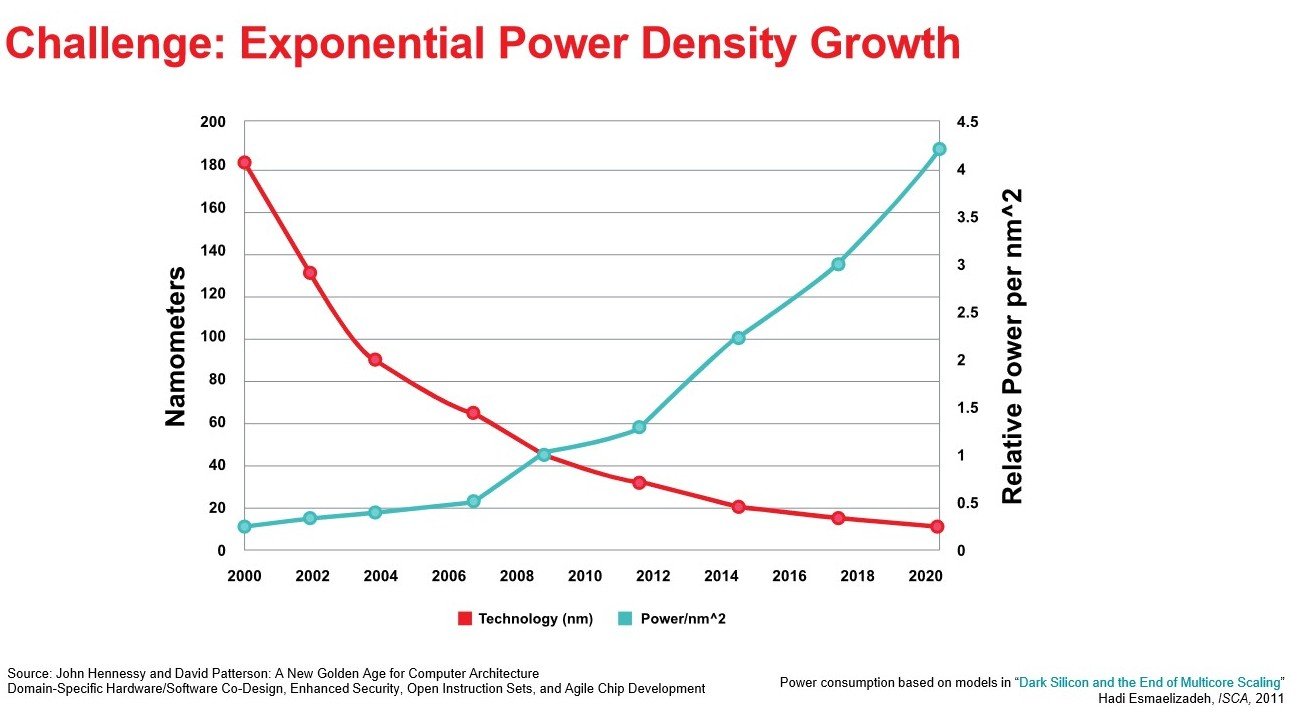

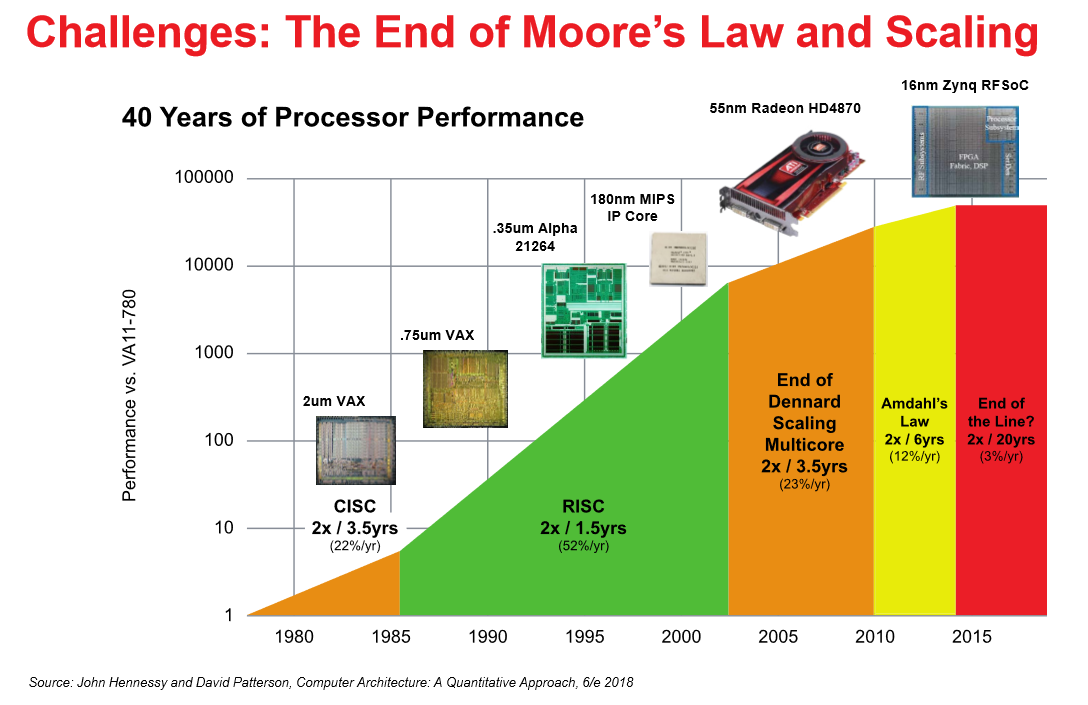

Self-driving cars are part of the larger picture in which data and compute demand is exploding at the same time that Moore’s Law is “running out of gas,” Peng said. Hyperscale data centers are in hyper-growth mode and are projected to house more than half of all data center servers by 2021, according to the Cisco Global Cloud Index. At the same time, demand for machine learning and deep learning compute will grow apace, putting increasing pressure on what Peng calls the “power density problem,” which he said is increasing exponentially.

Power density in processing, he said, “across everything, from cloud to the edge to the end point truly is what’s limiting real application performance. And really that’s been true from when I was … at DEC (Digital Equipment Corporation, in the 1980s) building machines that were in data center environments, and it’s true for the FPGAs that we do today. It’s the only solution now, if you integrate this together. Demand for computing is greater than it’s ever been. Moore’s Law scaling is not working, you’re faced with this exponential curve in power density, which is limiting your systems performance, so architecture is really your main lever.”

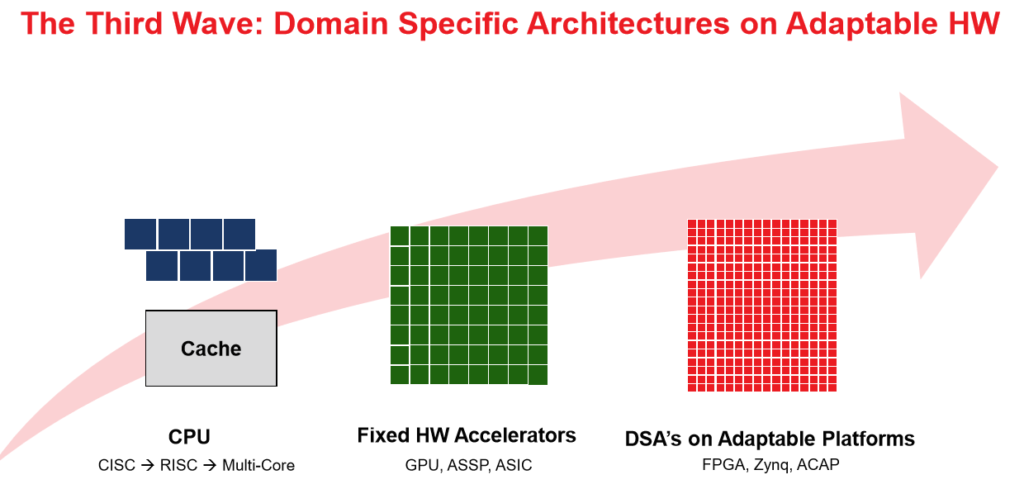

The era of CPU dominance began to erode around 2010, Peng said, “when we started moving toward heterogeneous systems where the computing was split between the general purpose processor, and you began to see some fixed hardware accelerators – GPUs or other kinds of ASPs (application specific processors), like an MPU, and certainly there’s been a resurgence of ASICs, particularly around ML accelerators.

“We’re still in this sort of realm because as we know, for quite a while there’s been very little start-up activity, but one of the reasons there’s new investment in silicon is because they’re architecting new approaches to deal specifically with new problems – mainly, with machine learning.”

In a general sense, Peng said the right approach is the development of a physical hardware platform – the ACAP – that is dynamically reconfigurable and adaptable, according to workload, and composed of “domain specific architectures” (DSAs), multi-core SoCs integrated with a programmable fabric, along with other processing resources.

“The thing about this adaptability is it allows you to instantiate many DSAs in the form of an overlay architecture, so you can cover a wide range of applications, as well as accelerate the whole application and not just one portion of it, such as the ML aspect,” Peng said.

The ACAP, he said, is suited to the emerging era of pervasive intelligence in which there will be tens of billions of computing and sensor devices, all of them generating data – everything from IoT devices to sensors in cars, in streets, in factories, along with smart phones, PCs and so on.

“So not only will this be an enormous number of devices, which are all connected for machine intelligence, but they will be deployed across the planet,” he said, “so when you have that you just cannot have that be fixed (i.e., inflexible) because you can’t predict what all the needs will be as you deploy this, and you don’t want to have to deliver new capability to that infrastructure by changing the physical devices.”

At its core, according to Xilinx, the ACAP has a new generation of FPGA fabric with distributed memory and hardware-programmable DSP blocks, a multicore SoC, and software programmable, yet hardware adaptable, compute engines, all connected through a network on chip. An ACAP also has integrated programmable I/O functionality, ranging from integrated hardware programmable memory controllers, SerDes technology and RF-ADC/DACs, to integrated high bandwidth memory.

ACAP is a $1 billion bet by Xilinx and it’s been under development by more than 1,500 engineers for more than four years, according to the company. Customer shipments are scheduled for next year.

Xilinx said Everest is expected to achieve 20x performance improvement on deep neural networks compared to the company's current-generation 16nm Virtex VU9P FPGA.

The idea of a flexible platform that can be adapted remotely to new intelligent devices, at both the software and the hardware level, “is becoming more powerful and I would argue absolutely needed in order to realize its future,” Peng said.

Peng cited the example of the autonomous car that can accommodate over-the-air updates.

“All the automotive OEMs are looking to make sure their future platform – the car is the platform for them – can do over-the-air updates that deliver new driver and passenger experiences even after the car has been driven off the lot,” he said. “They can address problems, such as security flaws, over the air. That’s just an example of the aspect of why you want adaptable systems.”

Peng pointed out that ACAP is not solely focused on AI acceleration, in contrast with many start-ups, he said, who are focused on accelerating AI or a subset of deep neural networks. “That’s not really the whole problem,” said Peng. “You want to accelerate the entire application, some of which can have some portion of ML…”

With an ACAP that can handle dynamic updates “even at the architectural level, without having to do silicon spins, you can innovate not at the cycle time of the silicon tape-out but at the cycle time of simply proving out the idea and then doing updates…”

Peng said that as computing evolves toward adaptive platforms, systems designers (and marketers) increasingly are searching “for inspiration for these new architectures in nature quite often, such as neuromorphic computing.”

He said the ACAP concept has traditionally been called “reconfigurable computing,” but “adaptive” might be the better term “because as you think about nature, the species that is the most adaptable is always the species that has the most resilience and flourishes over the long run. Species may be optimized for a particular environment and set of conditions, but once (those conditions) change, it’s not a viable species any more. So I think, from that perspective, of these things as adaptable platforms on which you can then both overlay specific architectures, along with other changes, without changing out the physical infrastructure.”