Data Dilemma: What to Store, What to Dump?

As storage costs decline on a Moore's Law curve, technology vendors seeking to drive storage capacity and enterprises leveraging that stored data for new applications agree that we have plenty of data available but remain woefully short on wisdom.

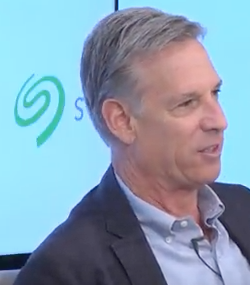

As more connected devices fuel the data explosion, a growing problem for storage and analytics providers is figuring out how to prioritize data as millions and eventually billions of devices come online. "Too much data but not enough knowledge" is the way Steve Luczo, CEO of storage specialist Seagate Technology (NASDAQ: STX), described the dilemma during a company-sponsored panel discussion on Monday (June 5).

Seagate, Cupertino, Calif., also released the results of a report by market analyst IDC that forecasts data creation will soar to 163 zettabytes by 2025.

"From a storage perspective, we have to get ready for [zettabytes] in terms of available data" and deliver platforms "so the priority data can be stored," Luczo added. For users, it often comes down to, "What can you afford to store?"

One measure of the data flood is the number of embedded devices per person feeding into datacenters. IDC reckons that number will jump from less than one to more than four per "connected" person by 2025.

Although consumers are generating more data, the balance is expected to shift over the next decade, with enterprises generating 60 percent of all data, according to the IDC study. Meanwhile, the number of use cases is growing, Luczo noted. "If we don't continue to drive down the cost of technology, then these use cases aren't going to develop."

Among those use cases are consumer applications ranging from autonomous vehicles and other "edge" data platforms to entertainment and personalized medicine. For these applications, developers are attempting to bake in real-time data collection capabilities. "Even though you're not going to process all your data in real time, you have to collect it in real time because you may want to react in close to real time to what consumers are doing," noted Miguel Alvarado, vice president of data and analytics at Vevo, an online music video service.

With all that personal and unstructured data flying around, technology vendors and users alike are struggling to figure out ways to organize it to solve problems while making a few bucks in the process. "Really what we want to do is be predictive," Luczo observed.

As enterprises generate far more data over the next decade, other obligations arise as cloud vendors strive to protect data while extracting value from it. Unlike consumer markets, enterprise data "tends to be more siloed" due to security, privacy and other compliance requirements, noted Kushagra Vaid, general manager of Microsoft's Azure infrastructure unit.

Hence, the steady analytics advances in the consumer sector, which range from sharing data to learning from it, may not apply on the same scale to the enterprise sector, Vaid stressed. "How do we keep parity between the advancements…in the consumer space and the enterprise space?" he asked.

Meanwhile, storage vendors like Seagate are working to increase areal density to pack more storage capacity into smaller devices. Densities are increasing at a 30-40 percent compound annual rate. At the same time, Vaid noted, cloud demand is rising at about 130 percent, creating a divergence: "The demand at which the cloud is growing is four times bigger than the rate at which the underlying storage technology density is growing."

Hence, he added, breakthrough storage technologies will be needed to increase areal density as scaling slows for existing approaches.
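The divergence Vaid describes can be checked with a quick calculation. The sketch below is illustrative only: it uses the 30-40 percent density growth and the roughly 130 percent cloud demand growth quoted above, and it assumes the demand figure is also an annual rate, which the panel remarks do not state explicitly. The function names are hypothetical.

```python
# Figures quoted in the article. Treating cloud demand growth as an
# annual rate is an assumption made for this illustration.
DENSITY_GROWTH_LOW = 0.30    # 30 percent compound annual growth
DENSITY_GROWTH_HIGH = 0.40   # 40 percent compound annual growth
CLOUD_DEMAND_GROWTH = 1.30   # about 130 percent growth


def rate_ratio(demand_rate, density_rate):
    """Ratio of the two growth rates (how many times faster demand grows)."""
    return demand_rate / density_rate


def capacity_gap(demand_rate, density_rate, years):
    """Factor by which demand outpaces density after compounding for `years`."""
    return ((1 + demand_rate) / (1 + density_rate)) ** years


if __name__ == "__main__":
    for density in (DENSITY_GROWTH_LOW, DENSITY_GROWTH_HIGH):
        ratio = rate_ratio(CLOUD_DEMAND_GROWTH, density)
        gap = capacity_gap(CLOUD_DEMAND_GROWTH, density, years=5)
        print(f"density growth {density:.0%}: rate ratio {ratio:.2f}, "
              f"five-year gap factor {gap:.1f}")
```

Under these assumptions the ratio of growth rates falls between roughly 3.25 and 4.33, which brackets the "four times" figure. The compounding function shows why the gap matters more each year: the shortfall multiplies rather than adds.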

Data proliferation, especially "transient data" used mostly for real-time analytics, also raises questions about whether big data is approaching a point of diminishing returns. "We are data rich and knowledge poor," agreed Vevo's Alvarado, who called for industry standards on data collection.

"Nobody cares about video surveillance of an empty parking lot," stressed IDC analyst Dave Reinsel. Advocating a new form of data compression, Reinsel added, "Sure, we can collect a lot of data that's overwhelming but…we'll figure out how much data we'll actually need to make decisions.

"There's a learning curve that we're going to struggle through," the analyst concluded. "We will make it manageable at the end of the day."


George Leopold has written about science and technology for more than 30 years, focusing on electronics and aerospace technology. He previously served as executive editor of Electronic Engineering Times. Leopold is the author of "Calculated Risk: The Supersonic Life and Times of Gus Grissom" (Purdue University Press, 2016).