Wind Turbines and Democratized Data Analytics: Turning Chaos into Order

The energy industry was an early adopter of supercomputing; in fact, energy companies have the most powerful supercomputers in the commercial world. And although HPC in the energy sector is almost exclusively associated with seismic workloads, it also plays a critical role in renewables, reflecting the growing maturity of that vertical.

The largest wind turbine company in the world, Vestas Wind Systems of Denmark (2015 revenues: €8.423 billion; producer of 20 percent of global wind capacity), has worked extensively to harness not only the wind but also its data. The company has amassed 30 years of historical data that, Vestas boasts, is the “biggest wind data asset in the world,” one that grows daily with data collection from more than 50,000 turbines (newer models have up to 1,000 sensors) installed around the world.

For the past 10 years, the company has built an increasingly sophisticated big data analytics infrastructure with a strong element of democratization and self-service, putting data analytics into the hands of front-line soldiers. Analytics underpins operations throughout the company, from optimizing turbine performance to product development, service and maintenance, and even to enabling salespeople to determine a potential contract’s ROI in real time while working with customers.

“We are utilizing data in every step that we take,” said Kim Emil Andersen, director, Vestas Performance and Diagnostics Centre. Speaking at Tibco Software’s recent Houston Energy Forum, he said, “We’re seeing enormous growth in (data), not only because we’re selling more turbines but because we’re selling turbines with more sensors. So we’re seeing an exponential growth in data.”

Data collection from turbines dates back to 1991, but it wasn’t until 2008 that Vestas became an early adopter of big data, when the company acquired its first distributed supercomputing system. Back then, Andersen said, the team he now heads “was hidden in the basement,” looking at data, looking into lost production, trying to apply data to ROI.

By 2012, when Andersen joined the analytics team, Vestas had amassed a large, cumbersome pool of data that “had tons of stuff floating around.” The company had built up huge technology stacks: massive amounts of data storage, plus a mix of Tableau, Matlab, Microsoft Power BI and Excel tools for transforming and analyzing the data, which were “creating a mess in our ecosystem,” according to Sven Jesper Knudsen, a senior data scientist at Vestas. “We had a massive number of applications, and it was very difficult to take the single Tableau dashboard, for example, and ramp up. When we were trying to accommodate added requirements, we often reached a dead end.”

Part of the problem, Andersen said, was lack of governance around how they used the various tools.

“There was no unity, no consistency, no understanding on how we should report on the different activities,” he said. “So if I asked a colleague to help on analysis, and then compared the outcome with something done by our group in Portland, OR, I got different results. So basically I had a lot of different tools, and they could actually provide answers in different ways, even though it was the same question…. We saw a big risk in providing wrong answers.”

Not only were results unreliable, but the process was also slow and riddled with bottlenecks. In a typical sales situation, when a salesperson was trying to work up a contract, an analyst would take in a request, do the analysis and send the results out by email. By the time the email arrived in the salesperson’s inbox, Knudsen said, it was already obsolete because the customer’s requirements had changed. “The sales guy isn’t happy,” Knudsen said, “because he sits there with his million-dollar contract and doesn’t feel we’ve taken him seriously.

“That comes at a cost… we simply couldn’t keep up,” Knudsen said. “The market had matured, and to stay ahead we needed a new platform.”

One of the first steps in coming to grips with Vestas’ sprawling data landscape was to shut down access to data. Andersen said the move sparked “a lot of debate around my decision, a lot of fuss from the VPs in the company, saying ‘You are prohibiting me from working and doing my daily job with my team.’” But it was an important step toward bringing order and governance to the company’s analytics strategy.

Another step was bringing in Tibco, one of the larger business intelligence software companies, and its Spotfire analytics and business intelligence platform, which supports predictive and other complex statistical analysis of data. Conversations with Tibco began in 2013, and last year Vestas implemented 12 proof-of-concept (POC) projects, all of them up and running in less than a month and providing value to “basically every part of the Vestas organization,” Andersen said.

The Spotfire platform takes data from various sources and formats, wrangles it into an assimilated pool, performs analytics and then visualizes outcomes in the form of dashboards.
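
Spotfire’s internals are not described here, so the following is only a generic sketch of that ingest, wrangle, analyze and visualize sequence, written in plain Python rather than against any Tibco API. The two data sources, their field names (`turbine_id`, `wind_kmh` and so on) and the functions `wrangle` and `mean_power_by_turbine` are hypothetical.

```python
import csv
import io
import json
from collections import defaultdict

# Hypothetical source 1: CSV export of 10-minute turbine readings.
SCADA_CSV = """turbine_id,timestamp,power_kw
T01,2016-05-01T00:00,1450
T01,2016-05-01T00:10,1520
T02,2016-05-01T00:00,980
"""

# Hypothetical source 2: JSON feed of wind speeds, with different field names and units.
MET_JSON = """[
  {"id": "T01", "ts": "2016-05-01T00:00", "wind_kmh": 36.0},
  {"id": "T01", "ts": "2016-05-01T00:10", "wind_kmh": 39.6},
  {"id": "T02", "ts": "2016-05-01T00:00", "wind_kmh": 28.8}
]"""


def wrangle(scada_csv: str, met_json: str) -> list:
    """Normalize both sources to one schema and inner-join them on (turbine, timestamp)."""
    pool = {}
    for r in csv.DictReader(io.StringIO(scada_csv)):
        key = (r["turbine_id"], r["timestamp"])
        pool[key] = {"turbine": key[0], "time": key[1], "power_kw": float(r["power_kw"])}
    for r in json.loads(met_json):
        key = (r["id"], r["ts"])
        if key in pool:
            pool[key]["wind_ms"] = r["wind_kmh"] / 3.6  # km/h -> m/s
    # Drop readings that had no matching wind record.
    return [row for row in pool.values() if "wind_ms" in row]


def mean_power_by_turbine(rows: list) -> dict:
    """One simple analysis over the unified pool: average power per turbine."""
    by_turbine = defaultdict(list)
    for row in rows:
        by_turbine[row["turbine"]].append(row["power_kw"])
    return {t: sum(v) / len(v) for t, v in by_turbine.items()}


if __name__ == "__main__":
    # Stand-in for the visualization step: a plain-text "dashboard".
    for turbine, kw in sorted(mean_power_by_turbine(wrangle(SCADA_CSV, MET_JSON)).items()):
        print(f"{turbine}: {kw:.0f} kW average")
```

The join key and unit conversion are where most real wrangling effort goes; a platform like Spotfire hides those steps behind its data-loading interface.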

The result, according to Vestas, is a flexible, interactive analytics capability that, instead of offering preconfigured dashboards, lets users drag and drop and run analyses dynamically, issuing a series of queries against Vestas’ storehouse of data under different conditions and contingencies.

“Our relationship with Tibco began when it became very evident that we needed a partner with the experience to unlock the vast amount of data in our supercomputer,” Knudsen said. “We wanted a single point of access where users could have a familiar experience creating an analysis, maturing it and then deploying it throughout the enterprise. Users want more of a smart app experience, with dropdown menus and interactions with graphs and the system behind them. They want that for advanced analytics, as well.”

Processing is handled by a system built on Lenovo’s NextScale platform, according to Vestas. The system consists of hundreds of nodes, each with two 12-core Intel Xeon E5-2680 v3 processors, for 24 cores per node. Each node has 128GB or 256GB of memory, depending on the workload (simulation or analytics), and the nodes are connected by an FDR InfiniBand interconnect with 2:1 oversubscription, enabling the machine to solve distributed parallel problems that span all nodes. The system has multiple petabytes of storage with more than 100GB/s of bandwidth.
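
Those specifications can be turned into a few derived numbers. The sketch below assumes a hypothetical 300-node configuration (the article says only “hundreds”), 12 cores per Xeon E5-2680 v3 socket, and an FDR 4x InfiniBand link of four 14.0625 Gb/s lanes with 64b/66b encoding; the `NodeSpec` and `cluster_summary` names are illustrative.

```python
from dataclasses import dataclass

# FDR InfiniBand 4x: 4 lanes x 14.0625 Gb/s = 56.25 Gb/s on the wire,
# of which 64/66 carries data after 64b/66b encoding (about 54.5 Gb/s).
FDR_4X_DATA_GBPS = 4 * 14.0625 * 64 / 66


@dataclass
class NodeSpec:
    sockets: int = 2
    cores_per_socket: int = 12  # Xeon E5-2680 v3 is a 12-core part
    memory_gb: int = 128        # the article cites 128GB or 256GB per node


def cluster_summary(nodes: int, node: NodeSpec, oversubscription: float = 2.0) -> dict:
    cores_per_node = node.sockets * node.cores_per_socket
    return {
        "cores_per_node": cores_per_node,
        "total_cores": nodes * cores_per_node,
        "gb_per_core": node.memory_gb / cores_per_node,
        # With 2:1 oversubscription, a leaf switch has half as much uplink as
        # downlink bandwidth, so if every node behind it sends off-switch at
        # once, each gets roughly 1/oversubscription of its link rate.
        "worst_case_offswitch_gbps": FDR_4X_DATA_GBPS / oversubscription,
    }


if __name__ == "__main__":
    for mem in (128, 256):
        print(cluster_summary(300, NodeSpec(memory_gb=mem)))  # 300 nodes is hypothetical
```

At 128GB per node that works out to about 5.3GB per core, or about 10.7GB per core on the 256GB nodes. The 2:1 ratio matters only for traffic that leaves a leaf switch; nodes on the same switch still talk at full link rate.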

As Spotfire has evolved at Vestas, Andersen said, non-specialists have become increasingly adept at using it; the self-service analytics environment now has more than 1,000 licenses in daily use.

“Now, with on-demand analytics, the salesperson does the whole analysis,” said Knudsen. “He can actually sit with the client and answer questions. Suddenly, we’re supporting the process, and actually, what goes on is a very complicated Monte Carlo simulation on 10 years of data, but he doesn’t know that.”
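
Vestas has not published the model behind this on-demand analysis, so the following is only a generic illustration of the technique Knudsen names: a Monte Carlo (bootstrap) estimate of a turbine’s annual energy production from 10 years of hourly wind data. Everything specific is an assumption: the wind history is synthetic Weibull-distributed data standing in for measurements, the power curve (3 m/s cut-in, 12 m/s rated, 25 m/s cut-out, 2,000 kW) and the tariff are hypothetical, and each simulated year is assembled by resampling whole days with replacement.

```python
import random

HOURS_PER_DAY, DAYS_PER_YEAR = 24, 365


def power_kw(v: float, rated_kw: float = 2000.0) -> float:
    """Hypothetical power curve: cut-in 3 m/s, rated at 12 m/s, cut-out 25 m/s."""
    if v < 3.0 or v >= 25.0:
        return 0.0
    if v >= 12.0:
        return rated_kw
    return rated_kw * (v**3 - 3.0**3) / (12.0**3 - 3.0**3)


def synthetic_history(years: int, rng: random.Random) -> list:
    """Stand-in for measured hourly wind speeds (m/s): Weibull, scale 8, shape 2."""
    n = years * DAYS_PER_YEAR * HOURS_PER_DAY
    return [rng.weibullvariate(8.0, 2.0) for _ in range(n)]


def daily_energy_mwh(hourly_wind: list) -> list:
    """Convert the hourly history into one energy total (MWh) per day."""
    hourly_mwh = [power_kw(v) / 1000.0 for v in hourly_wind]  # 1 hour at P kW = P kWh
    return [sum(hourly_mwh[i:i + HOURS_PER_DAY])
            for i in range(0, len(hourly_mwh), HOURS_PER_DAY)]


def simulate_aep(days: list, trials: int, rng: random.Random) -> list:
    """Each trial builds one year from 365 days drawn with replacement; returns sorted AEP (MWh)."""
    return sorted(sum(rng.choices(days, k=DAYS_PER_YEAR)) for _ in range(trials))


def p_exceedance(sorted_aep: list, p: int) -> float:
    """P90 is the annual energy exceeded in 90% of trials (the 10th percentile)."""
    return sorted_aep[int((100 - p) / 100 * (len(sorted_aep) - 1))]


if __name__ == "__main__":
    rng = random.Random(42)
    days = daily_energy_mwh(synthetic_history(10, rng))
    aep = simulate_aep(days, trials=2000, rng=rng)
    price_eur_per_mwh = 50.0  # hypothetical tariff
    for p in (50, 90):
        mwh = p_exceedance(aep, p)
        print(f"P{p}: {mwh:,.0f} MWh/yr, about EUR {mwh * price_eur_per_mwh:,.0f} revenue")
```

Resampling whole days rather than single hours keeps some of the hour-to-hour correlation in real wind data; a production model would also account for seasonality, wake losses and availability.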

As renewables claim an increasingly mainstream share of the total energy picture (in Iowa, for example, wind turbines generate 30 percent of the electricity consumed), customer ROI requirements have increasingly come to the fore.

“We all know that renewables are very nice, but we also know it’s a business case,” Andersen said. “Most people that buy turbines also like to buy a business case.”

Vestas has developed tools that let clients look at individual turbines and wind farms and assess their performance against contractual expectations. If performance falls short, Vestas sets out to determine the cause of the deviation and whether compensation is due to the client.

Andersen cited a customer whose wind farm was underperforming. “We’re going back and looking at what’s happening on the site, looking at the different turbine settings, service track records, all these things,” he said. “Then it turns out that a neighboring wind park had been installed just in front of the main wind farm. So we see less wind at the site, we see less energy at the site, and obviously we see this deviation. So in this case we’re using analytics, using insights to explain to the client why the business case has degraded. We can’t do much about a situation like this.”
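
The diagnostic logic in that example can be sketched as splitting a shortfall into two parts: how much less energy the wind offered than the business case assumed, and how much less the turbines produced than that wind should have yielded. This is an illustration under assumptions, not Vestas’ tool: the `Period` fields, the `diagnose` function and the 2 percent tolerance are hypothetical, and the “wind-expected” energy is assumed to come from applying the turbines’ power curve to the wind actually measured on site.

```python
from dataclasses import dataclass


@dataclass
class Period:
    contracted_mwh: float     # energy the business case assumed for this period
    wind_expected_mwh: float  # energy the turbines should produce given the wind actually measured
    actual_mwh: float         # energy actually produced


def diagnose(p: Period, tolerance: float = 0.02) -> str:
    """Attribute a shortfall against contract to the turbines or to the wind resource."""
    total_gap = (p.contracted_mwh - p.actual_mwh) / p.contracted_mwh
    if total_gap <= tolerance:
        return "on target"
    wind_gap = (p.contracted_mwh - p.wind_expected_mwh) / p.contracted_mwh
    turbine_gap = (p.wind_expected_mwh - p.actual_mwh) / p.contracted_mwh
    # Check the turbines first: a turbine fault is something Vestas can act on.
    if turbine_gap > tolerance:
        return f"turbine underperformance: {turbine_gap:.1%} of contract"
    return f"lower wind resource: {wind_gap:.1%} of contract"


if __name__ == "__main__":
    # A neighboring park reduces the wind; the turbines perform fine for the wind they get.
    print(diagnose(Period(contracted_mwh=1000, wind_expected_mwh=900, actual_mwh=895)))
    # Wind as expected, but output is short: look at the turbines.
    print(diagnose(Period(contracted_mwh=1000, wind_expected_mwh=1000, actual_mwh=950)))
```

The first call prints `lower wind resource: 10.0% of contract`, the situation Andersen describes; the second prints `turbine underperformance: 5.0% of contract`.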

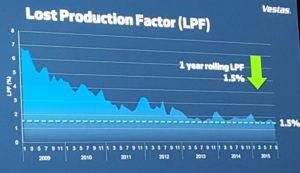

In applying analytics to turbine performance, the company developed a performance indicator called the Lost Production Factor, which measures how much electrical production is lost because turbine assets are not operating optimally. Tracking and measuring performance enables Vestas to improve turbines’ orientation to changing wind strength and direction and to identify key indicators of turbine performance that call for maintenance or replacement. In 2008, Vestas’ Lost Production Factor was 4.4 percent; last year it was 1.5 percent, which, according to Andersen, compares with an industry average of 3.6 percent. For Vestas customers, Andersen said, this translates into savings of €150 million.
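
The article does not give the exact formula behind the Lost Production Factor. One plausible reading, assumed here, is lost energy as a share of the energy the turbines could have produced, with potential output per interval estimated from the power curve at the measured wind. The function names and sample numbers are hypothetical.

```python
def lost_production_factor(potential_mwh: list, actual_mwh: list) -> float:
    """Share of potential energy that was not produced (assumed definition)."""
    if len(potential_mwh) != len(actual_mwh):
        raise ValueError("series must be the same length")
    # Count only shortfalls; intervals where actual exceeds the estimate add no loss.
    lost = sum(max(p - a, 0.0) for p, a in zip(potential_mwh, actual_mwh))
    return lost / sum(potential_mwh)


def recovered_mwh(potential_total_mwh: float, lpf_before: float, lpf_after: float) -> float:
    """Energy recovered when the LPF falls, for the same potential output."""
    return potential_total_mwh * (lpf_before - lpf_after)


if __name__ == "__main__":
    # Four hypothetical intervals, two of them with shortfalls (12 of 400 MWh lost).
    lpf = lost_production_factor([100, 100, 100, 100], [100, 90, 100, 98])
    print(f"LPF = {lpf:.1%}")
    # Moving from 4.4% to 1.5% on a hypothetical 1,000,000 MWh of potential output:
    print(f"{recovered_mwh(1_000_000, 0.044, 0.015):,.0f} MWh recovered")
```

Under this definition, the drop from 4.4 to 1.5 percent means the turbines lose roughly a third as much of their potential output as they did in 2008.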

Lost Production Factor analysis feeds directly into product development. “Being able to understand and analyze our data, we can also make improvements to our products,” Andersen said. “We basically have millions of sensors out there. We have sensors measuring the environment, wind speed, wind direction, it can be different components (of the turbine), like rotational speed, vibrations, temperatures, oil pressure filters, all these things. The most modern turbines have more than 1,000 sensors sending data on a real-time basis.”