CacheBox Takes On Incumbents In Server-Side Flash Cache

A group of file system experts who worked on the Veritas clustered file system, a mainstay of Unix deployments that is now controlled by Symantec, have teamed up to launch a server-side solid state drive caching company called CacheBox. Rather than tie its caching software to a specific type or brand of SSD, the company's new CacheAdvance software will optimize any flash-based storage inside a system.

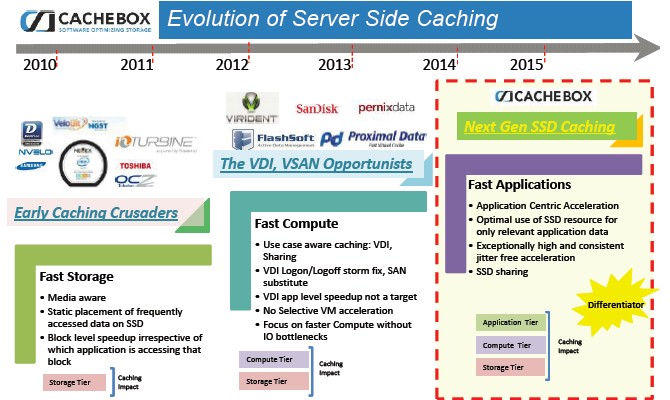

CacheBox is taking on a pretty deep bench of competitors, some of which are also agnostic when it comes to tapping into SSDs and PCI Express flash cards and controlling how data is cached to these devices as applications and databases are running. The HGST flash and disk storage unit of Western Digital has just updated its ServerCache caching software, and now that it owns Fusion-io, SanDisk has both the server-side caching tools it got through its February 2012 acquisition of FlashSoft and Fusion-io's ioTurbine caching software. ServerCache is, in fact, an amalgam of the caching software that HGST got through its Velobit and STEC acquisitions, and it is likely that SanDisk will eventually converge its own caching software, too. CacheBox is also taking on NetApp's FlashAccel, EMC's VFCache, and Toshiba's SANRAD, which is built into its ZD-XL line of flash accelerator cards. There are others, and it is a crowded market.

John Groff, chief operating officer at CacheBox and one of the co-founders of the company, says that what will make CacheBox different is a focus on accelerating particular applications across a wide variety of flash storage inside of server nodes. Groff is joined by CacheBox CEO Lorenzo Salhi, who was vice president of enterprise and OEM sales at STEC, and Murali Nagaraj, vice president of engineering at the startup and formerly the lead developer of the Veritas Cluster File System. Many of the two dozen technical people working at CacheBox are file system experts based in Pune, India, where Veritas had its development lab.

"The gap between server and storage performance is widening, and server virtualization is making it worse," says Groff. This is, in part, due to the I/O blender effect, whereby relatively predictable I/O accesses on a single server get randomized when multiple virtual servers are put atop a hypervisor, which then accesses data in a more random fashion across its virtual machines. Virtual machine density is being driven up as core counts increase on servers, making the I/O blender problem even worse. "That is why we believe that caching is best done closest to the application."

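The I/O blender effect can be illustrated with a toy simulation. The sketch below is only a simplified model, not a description of how any real hypervisor schedules I/O: it assumes four hypothetical virtual machines that each read their own region of a shared disk sequentially, and it assumes the hypervisor services them in strict round-robin order. The function names are invented for this illustration.

```python
from itertools import zip_longest


def sequential_stream(start_block, length):
    """Block addresses for one VM reading its virtual disk sequentially."""
    return list(range(start_block, start_block + length))


def blend(streams):
    """Round-robin interleave of per-VM streams (a simplifying assumption)."""
    merged = []
    for group in zip_longest(*streams):
        merged.extend(block for block in group if block is not None)
    return merged


def sequential_fraction(blocks):
    """Fraction of requests that land on the block right after the previous one."""
    if len(blocks) < 2:
        return 1.0
    hits = sum(1 for prev, cur in zip(blocks, blocks[1:]) if cur == prev + 1)
    return hits / (len(blocks) - 1)


# Four hypothetical VMs, each owning a separate region of the shared disk.
vm_streams = [sequential_stream(vm * 1_000_000, 1000) for vm in range(4)]

single_vm = sequential_fraction(vm_streams[0])
blended = sequential_fraction(blend(vm_streams))

print(f"one VM alone: {single_vm:.2f} sequential")
print(f"four VMs blended: {blended:.2f} sequential")
```

In this model every request from a single VM follows the previous block, while none of the blended requests do, which is the pattern a disk behind the hypervisor would see. Real workloads are burstier and less uniform than this, so the effect is usually less extreme.
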
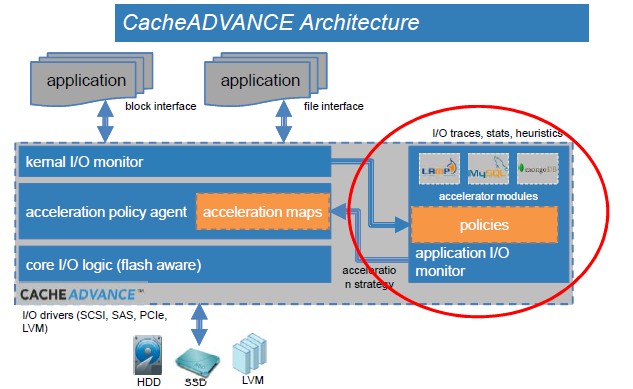

The idea behind CacheAdvance is to create application-specific modules (ASMs) that do heuristic analysis of particular applications and their data access patterns as they run, and use the results to accelerate those applications' reads and writes on flash media. This is in contrast to accelerating at the storage level in the hypervisor or operating system in a general sense. (To be fair, other server-side caching programs have been tuned for specific applications, with MySQL and SQL Server databases being the popular options.) Still, Groff contends that the company's CacheAdvance has finer-grained control to proactively manage caching policies at the block level of the storage hierarchy to get the hottest data closest to the application. That said, the acceleration is not presented to system administrators at the block or LUN level, but rather at the index, table, and file levels that they are used to thinking about.

At the moment, CacheAdvance is available for Linux platforms. It consists of a kernel driver for the Linux kernel (on the left side of the chart below) and an ASM that runs as a userland component in the operating system, where it watches I/O access statistics, does I/O traces, and does heuristic analysis of access patterns to fine tune what data is stored on what tier of flash and disk storage in the system. CacheAdvance sits underneath the file system, and anything that points to a block device can be accelerated. Any application with segmented I/O, such as streaming media, will not be accelerated by CacheBox. Anything with lots of random reads and writes or just lots of writes can be boosted, including object storage, cloud infrastructure, data warehousing, transaction processing, and virtual desktop infrastructure.
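
CacheBox has not published how its ASMs decide what belongs on flash, so the following is only a generic sketch of the kind of access-pattern heuristic described here: count accesses per block from an I/O trace and keep the most frequently touched blocks on the fast tier. The class, its methods, and the sample trace are all hypothetical and are not CacheAdvance APIs.

```python
from collections import Counter


class HotBlockTracker:
    """Toy frequency-based placement; hypothetical, not CacheBox's design."""

    def __init__(self, flash_capacity_blocks):
        self.flash_capacity_blocks = flash_capacity_blocks
        self.access_counts = Counter()

    def record(self, block):
        """Note one access to a block, as an I/O trace would report it."""
        self.access_counts[block] += 1

    def flash_resident(self):
        """Blocks that would be kept on flash: the most frequently accessed."""
        top = self.access_counts.most_common(self.flash_capacity_blocks)
        return {block for block, _ in top}

    def hit_ratio(self, trace):
        """Share of accesses in the trace that the flash tier would serve."""
        if not trace:
            return 0.0
        resident = self.flash_resident()
        return sum(1 for block in trace if block in resident) / len(trace)


# Made-up trace: two hot blocks, one warm block, and a short cold scan.
trace = [7] * 50 + [42] * 30 + [3] * 15 + list(range(100, 105))

tracker = HotBlockTracker(flash_capacity_blocks=2)
for block in trace:
    tracker.record(block)

hot = tracker.flash_resident()
ratio = tracker.hit_ratio(trace)
print(sorted(hot), ratio)
```

With room for only two blocks on flash, the tracker keeps blocks 7 and 42 and serves 80 of the 100 accesses in this trace from the fast tier. A production cache would also need eviction over time, write handling, and consistency guarantees, none of which this sketch attempts.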

Groff says that a server needs around 40 MB of main memory to accelerate block I/O using CacheAdvance, and that it is lightweight in that it consumes only between 3 and 5 percent of CPU capacity as it runs. The software works with bare metal Linuxes at the moment, but there are plans to add support for the Red Hat KVM and VMware ESXi hypervisors. A Windows Server version of the software, which will also support the Hyper-V hypervisor, is in the late beta testing stages right now and will come out in the third quarter.

The first ASMs have been created to accelerate MySQL and MongoDB, which have well-documented performance issues, are open source, and are widely used, which makes them the best of all possible things to focus on for a startup that wants to accelerate applications. CacheBox will open up its APIs to allow companies to write their own ASMs and will also create more ASMs of its own as the market asks for them. An ASM for the Cassandra NoSQL data store is already in development, and one for BerkeleyDB could be next. On the Windows Server platform, a SQL Server ASM will come first, as you might expect. Incidentally, if you want to run CacheAdvance on SSD-backed instances on Amazon Web Services or Rackspace Cloud, that works, too.

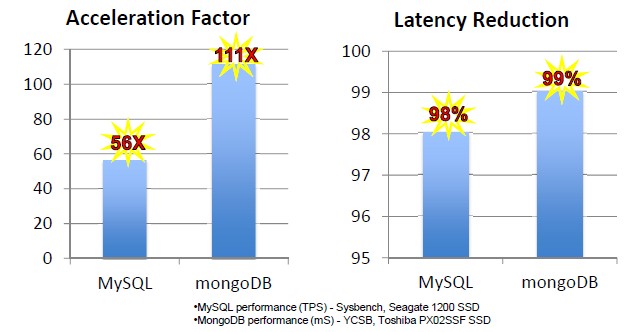

Here is what the performance boost for MySQL and MongoDB looks like on server nodes running CacheAdvance:

The performance shown above compares single server nodes using 7,200 RPM disk drives to run MySQL or MongoDB and then adding in a flash drive and CacheAdvance. The MySQL test used a Seagate 1200 SSD and the MongoDB test used a Toshiba PX02SSF 200 GB drive.

In general, Groff says that on a range of workloads, CacheAdvance can provide a 10X to 100X performance boost over all-disk setups and deliver between 85 percent and 95 percent of the performance of an all-flash array, using only a fraction of the flash capacity to front-end the disk subsystems inside the server or the disk arrays attached to it. This is the speech that all hybrid flash-disk array makers give, although the performance gains and flash capacity numbers obviously change case by case.
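
To see why hit ratio matters so much for claims like these, here is a back-of-the-envelope model. It is not taken from CacheBox: it assumes a single outstanding request, treats performance as the inverse of average latency, and uses illustrative latencies of 0.1 ms for flash and 8 ms for a 7,200 RPM disk. Those two figures and the function names are assumptions chosen for the example.

```python
FLASH_MS = 0.1  # assumed flash read latency, illustrative only
DISK_MS = 8.0   # assumed 7,200 RPM disk access latency, illustrative only


def average_latency_ms(hit_ratio, flash_ms=FLASH_MS, disk_ms=DISK_MS):
    """Average latency when hit_ratio of requests are served from flash."""
    return hit_ratio * flash_ms + (1.0 - hit_ratio) * disk_ms


def required_hit_ratio(target_fraction, flash_ms=FLASH_MS, disk_ms=DISK_MS):
    """Hit ratio at which average latency equals flash_ms / target_fraction."""
    allowed_ms = flash_ms / target_fraction
    return (disk_ms - allowed_ms) / (disk_ms - flash_ms)


for target in (0.85, 0.95):
    h = required_hit_ratio(target)
    speedup_vs_disk = DISK_MS / average_latency_ms(h)
    print(f"{target:.0%} of flash speed needs hit ratio {h:.4f}, "
          f"about {speedup_vs_disk:.0f}X faster than disk alone")
```

Under these assumptions, the cache has to serve well over 99 percent of requests from flash to land in the 85 percent to 95 percent range. That is why identifying the hottest data accurately matters more than the raw amount of flash installed.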

CacheAdvance is available now and has a list price of $999 per node for an annual software subscription. The price is the same regardless of the size of the server or the type or capacity of the flash storage in the system.