Dell Forges Custom G5 Racks For Hyperscale Shops


As funny as it sounds, the standard computing rack is a problem in the datacenter. The physical layout of datacenters is pegged to the dimensions, weight, power draw, and cooling capacity needs of a rack. Hyperscale datacenter operators have been asking their partners not just for custom servers and storage to fit their needs, but for custom racks that do a better job of cramming more iron into the same space.

To that end, the Data Center Solutions unit of Dell, which makes customized machinery for some of the largest hyperscale operators in the world, has crafted a new rack, called G5, and some server and storage trays that slide into it. The G5 racks were in beta testing in December and are now shipping out to customers.

While a number of mainframes, supercomputing systems, and clusters have come to market in non-standard racks over the years, hyperscale datacenter operators have generally stuck with the standard 42U rack because it rolls easily into co-location facilities and their private rack cages. But in a world where density matters because space is expensive and constrained, hyperscale datacenter operators have been completely rethinking the rack. Rackable Systems, now part of SGI, based its business on an innovative rack design that put banks of half-depth servers on both the front and the back of the rack and created a chimney in the middle to get the heat out. Facebook has created its own triple-tower and single-tower Open Rack designs. Alibaba, China Telecom, Baidu, and Tencent, working with Intel, have created their own rack design, called Project Scorpio, which addresses specific needs of the Chinese market and is similar in some respects to the OCP single-tower Open Rack and the Dell G5 rack. And, as EnterpriseTech reported last month, Fidelity Investments has done a substantial amount of engineering to create what it calls the Open Bridge Rack, a 48U rack that can support standard 19-inch server and storage enclosures or the 21-inch enclosures used in OCP gear by flipping around the rails on the inside of the rack.

Greg Gibby, product marketing manager for the Data Center Solutions group at Dell, showed off the new G5 rack to EnterpriseTech. This one is pretty tall at 52U, and like the Scorpio rack, it can accept 21-inch wide enclosures for servers and storage and yet still be no wider than a typical 42U enterprise rack, which is in the neighborhood of 30 inches, depending on the vendor.

The G5 rack can have multiple power bays, depending on the needs of the customer, and supports up to ten 1,600-watt power supplies per bay. Gibby says that the design would allow up to 35 kilowatts of power to be available in a single rack, but that the typical DCS customer will draw something on the order of 14 to 16 kilowatts. For heavy compute workloads, customers could push as high as 25 to 30 kilowatts, and for storage workloads, the constraint is not power but weight. Anything above 3,000 pounds per rack causes problems on raised floors or when moving the racks around and into place in the datacenter. (This is one reason why Facebook created a single-tower version of the Open Rack: for storage, a triple-tower rack weighed more than 5,000 pounds and was just too heavy and dangerous to move around.)
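
Those power and weight figures lend themselves to a quick back-of-the-envelope check. The sketch below is hypothetical, not Dell's sizing method: it assumes every one of the ten 1,600-watt supplies in a bay delivers its full rating with no redundant spares or derating (the article does not say how Dell counts redundancy), and the `bays_needed` and `weight_ok` helpers are illustrative names.

```python
import math

# Figures from the article.
SUPPLIES_PER_BAY = 10      # up to ten supplies per power bay
WATTS_PER_SUPPLY = 1_600   # 1,600-watt supplies
WEIGHT_LIMIT_LB = 3_000    # practical per-rack weight ceiling

# Assumption: every supply contributes its full rating (no N+1 spares,
# no derating). Reserving spares would lower the usable capacity per bay.
BAY_CAPACITY_W = SUPPLIES_PER_BAY * WATTS_PER_SUPPLY  # 16,000 W


def bays_needed(rack_load_w: float) -> int:
    """Smallest whole number of power bays that covers a rack load."""
    return math.ceil(rack_load_w / BAY_CAPACITY_W)


def weight_ok(rack_weight_lb: float) -> bool:
    """True if a loaded rack stays at or under the ~3,000 lb rule of thumb."""
    return rack_weight_lb <= WEIGHT_LIMIT_LB


if __name__ == "__main__":
    for label, load_kw in [("typical", 16), ("heavy compute", 30), ("ceiling", 35)]:
        print(f"{label}: {load_kw} kW -> {bays_needed(load_kw * 1_000)} bay(s)")
    print("5,000 lb triple rack OK?", weight_ok(5_000))
```

Under that no-redundancy assumption, a single 16-kilowatt bay covers the typical 14 to 16 kilowatt draw, 30 kilowatts takes two bays, and the 35-kilowatt ceiling takes three; holding supplies in reserve for redundancy would raise those counts.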

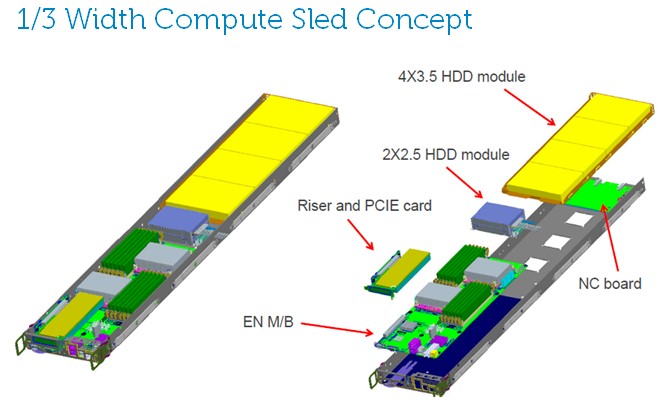

In an all-server configuration, the G5 rack can hold 120 servers using one-third-width server nodes. Customers can also deploy more heavily configured half-width servers, or full-width trays for storage. When loaded up with storage enclosures, the G5 rack can hold 480 disks and their storage controllers, and with the new 6 TB disk drives from Seagate, due in April, a G5 rack will be able to hold up to 2.88 PB of raw capacity (480 drives × 6 TB).
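
The capacity figure is simple multiplication. The illustrative sketch below also expresses the same bytes in binary pebibytes and, as an assumption for illustration only, shows what would be left after HDFS's default three-way replication, since Hadoop is one of the workloads these customers run. Real usable capacity depends on the file system, RAID or erasure coding, and spare space, none of which the article specifies.

```python
# Figures from the article: 480 disks per G5 rack, 6 TB per drive.
DISKS_PER_RACK = 480
TB_PER_DISK = 6  # decimal terabytes (10**12 bytes), as drive makers rate them

# Assumption for illustration: HDFS's default replication factor of 3.
REPLICATION_FACTOR = 3

raw_tb = DISKS_PER_RACK * TB_PER_DISK     # 2,880 TB
raw_pb = raw_tb / 1_000                   # decimal petabytes
raw_pib = raw_tb * 10**12 / 2**50         # same bytes in binary pebibytes
usable_pb = raw_pb / REPLICATION_FACTOR   # capacity left after 3x replication

print(f"raw: {raw_tb} TB = {raw_pb:.2f} PB = {raw_pib:.2f} PiB")
print(f"usable with {REPLICATION_FACTOR}x replication: {usable_pb:.2f} PB")
```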

Right now, a custom server code-named "Odin" based on Intel's "Romley" Xeon E5 platform is the only server option for this G5 rack. Interestingly, the Odin server uses Intel's "Sandy Bridge-EN" Xeon E5-2400 processor, the one with lower clock speeds, lower core counts, and lower prices than the Xeon E5-2600 processors that are commonly deployed at enterprises. Most of the customers using the G5 racks and the Odin servers are deploying them to run Hadoop, search indexing, or raw compute for clouds, so they don't need heavy compute.

Intel has not launched an Ivy Bridge-EN variant of the Xeon E5 yet, and it just rolled out the Xeon E5-2600 v2 processors last September. But DCS has not made a system for the G5 racks with the E5-2600 v2 processors. The company is looking at the "Haswell-EP" Xeon E5-2600 v3 chips, which are slated to be available later this year, as a possibility based on customer demand, of course. DCS is a business that designs and builds to order, so it will do what customers want.

The 12-volt power rails that the server and storage enclosures plug into have a different mechanical connection than the ones used in the OCP effort, and Gibby says Dell is examining how to make the power rails in the G5 rack compatible with OCP machines.

An interesting feature of the G5 rack is a set of software called Infrastructure Manager, which can be used either with or without a baseboard management controller (BMC) in the system nodes. This software, which has not yet been commercialized by Dell, gathers up all of the telemetry from the systems – inlet air, outlet air, CPU, and memory temperature as well as various utilization rates on components, to name a few – and presents it at a system and rack level. Managing at the rack level is key to hyperscale datacenter operators, and in fact, to a certain extent, the rack is the new system.
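
Dell has not published an interface for Infrastructure Manager, so the following is only a hypothetical sketch of what rolling node telemetry up into a rack-level view might look like. The `NodeTelemetry` fields and the `rack_summary` function are invented for illustration and are not part of any Dell product.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass
class NodeTelemetry:
    """Hypothetical per-node sample; field names are illustrative only."""
    node_id: str
    inlet_c: float    # inlet air temperature
    outlet_c: float   # outlet air temperature
    cpu_c: float      # CPU temperature
    mem_c: float      # memory temperature
    cpu_util: float   # CPU utilization, 0.0 to 1.0
    power_w: float    # node power draw


def rack_summary(samples: list[NodeTelemetry]) -> dict:
    """Roll per-node samples up into a single rack-level view."""
    return {
        "nodes": len(samples),
        "total_power_w": sum(s.power_w for s in samples),
        "max_inlet_c": max(s.inlet_c for s in samples),
        "max_outlet_c": max(s.outlet_c for s in samples),
        "max_mem_c": max(s.mem_c for s in samples),
        "hottest_cpu_node": max(samples, key=lambda s: s.cpu_c).node_id,
        "mean_cpu_util": mean(s.cpu_util for s in samples),
        # Average air temperature rise across the nodes in the rack.
        "mean_delta_t_c": mean(s.outlet_c - s.inlet_c for s in samples),
    }


if __name__ == "__main__":
    rack = [
        NodeTelemetry("node-01", 22.0, 38.0, 70.0, 45.0, 0.90, 350.0),
        NodeTelemetry("node-02", 23.0, 35.0, 55.0, 40.0, 0.30, 200.0),
    ]
    print(rack_summary(rack))
```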

"What customers tell us is that when they have a server working real hard, the last thing they want to do is put a power cap on it," says Gibby. "They want to keep that server busy, but cap somewhere else with Infrastructure Manager."
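
One way to read Gibby's point is as a rack-level power budget that takes watts away from lightly loaded nodes before it touches busy ones. The sketch below is a hypothetical policy, not a description of how Infrastructure Manager actually works; `assign_caps`, the per-node floor, and the utilization-ordered trimming are all assumptions made for illustration.

```python
from __future__ import annotations


def assign_caps(demand_w: dict[str, float], util: dict[str, float],
                rack_budget_w: float, floor_w: float) -> dict[str, float]:
    """Hypothetical rack-level power capping that spares the busiest nodes.

    Every node starts at the power it is asking for. If the rack total is
    over budget, the excess is trimmed from nodes in order of increasing
    utilization, and no cut takes a node below floor_w.
    """
    caps = dict(demand_w)
    excess = sum(caps.values()) - rack_budget_w
    for node in sorted(caps, key=lambda n: util[n]):  # least busy first
        if excess <= 0:
            break
        cut = min(excess, max(caps[node] - floor_w, 0.0))
        caps[node] -= cut
        excess -= cut
    if excess > 0:
        raise ValueError("rack budget cannot be met without cutting below floor_w")
    return caps


if __name__ == "__main__":
    caps = assign_caps(
        demand_w={"busy": 400.0, "idle1": 250.0, "idle2": 250.0},
        util={"busy": 0.95, "idle1": 0.10, "idle2": 0.20},
        rack_budget_w=750.0,
        floor_w=150.0,
    )
    print(caps)  # {'busy': 400.0, 'idle1': 150.0, 'idle2': 200.0}
```

In that example, the busy node keeps its full 400 watts while the two lightly loaded nodes absorb the 150-watt overage between them.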

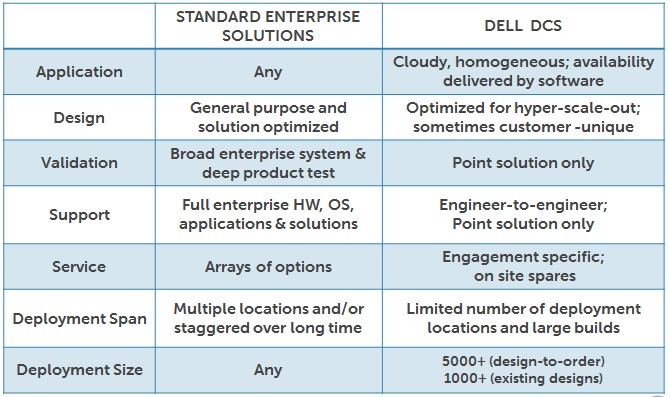

While Dell DCS is currently focused on the top 30 or so hyperscale datacenter operators, large enterprises can engage with DCS if their orders are big enough and their computing needs are special enough. Here are the differences between the regular Dell development and sales channel and DCS:

The question now is this: Will large enterprises build their own system support teams and be more like Google, Facebook, Rackspace Hosting, Amazon, and others, or will they continue to rely on tier-one server makers like Hewlett-Packard, Dell, IBM, and Cisco Systems? The jury is still out on that one, and EnterpriseTech is here to tell you all about it.

Gibby, for one, cautions that DCS is not set up to make and deploy machines on the scale of hundreds of nodes. "I will never say never, but our business model is not set up that way," he says. Hyperscale customers place big orders in lots of 5,000 or 10,000 machines many months in advance; large corporations place orders for hundreds or thousands of units and they want them in six weeks or less.

A big question, then, is whether large enterprises who have many thousands of nodes need all of the extra goodies and services they have become accustomed to as part of the systems they buy from the tier ones. Will they realize they don't need a lot of this stuff?

"Selfishly, I certainly hope so," says Gibby. "I want our DCS business to take off and have more scale. But I have not seen that trend take off yet. And in fact, we created the PowerEdge-C to create that interim step between somebody who is taking a customized solution and somebody who is not there yet but kinda headed there."