Tilera Rescues CPU Cycles with Network Coprocessors

Should an X86 processor be running network functions, or should those jobs be offloaded to a coprocessor? Tilera thinks it is time to get that work off the CPU and onto chips designed specifically to do this work.

A few years back, Tilera took a direct run at server workloads with its TILE-Gx chips and had some limited success. The company had better traction selling its many-cored RISC processors in various networking equipment. With its new TILEncore coprocessors, Tilera is making an oblique attack on servers that is probably going to resonate better with companies that have a combination of heavy compute and heavy network processing in their applications.

Hyperscale datacenter operators that control their own application and operating system software stacks – think Google, Facebook, Amazon, and so on – are the initial targets for the TILEncore coprocessors. But over time Tilera expects that enterprise customers will want to accelerate their network functions, too, particularly for private clouds where the virtualization overhead can be extreme. It remains to be seen if the tier one server makers will pick up the TILEncore coprocessors and add them to their systems, but it is a possibility if the idea takes off.

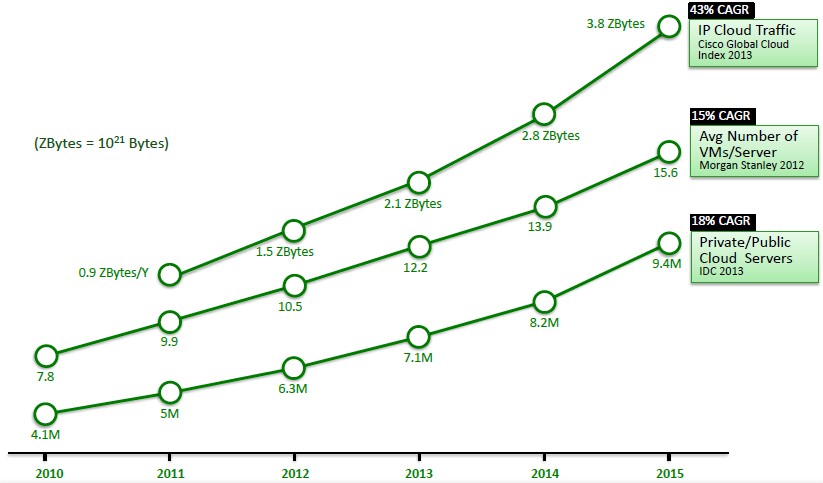

"There are a couple of big problems here," explains Bob Doud, director of marketing at Tilera, who shared this chart showing projections for bandwidth capacity, virtual machine count, and server count in datacenters over the next several years:

"If you look at single-socket compute, it is not keeping up," Doud says." We have moved from eight to twelve cores over a period of three years, but clock frequencies have pretty much hit the wall and are not likely to go much higher. Sure, microarchitectural improvements are happening, but you are not getting the kind of massive growth in computing that tracks the growth in the datacenter in terms of traffic or the number of virtual machines. It is a pain point, and that pain is now being solved by racking up more and more servers."

In doing that, companies are increasing both their capital costs as they have to buy more servers and their operating costs as the datacenter has to power and cool all of that extra iron.

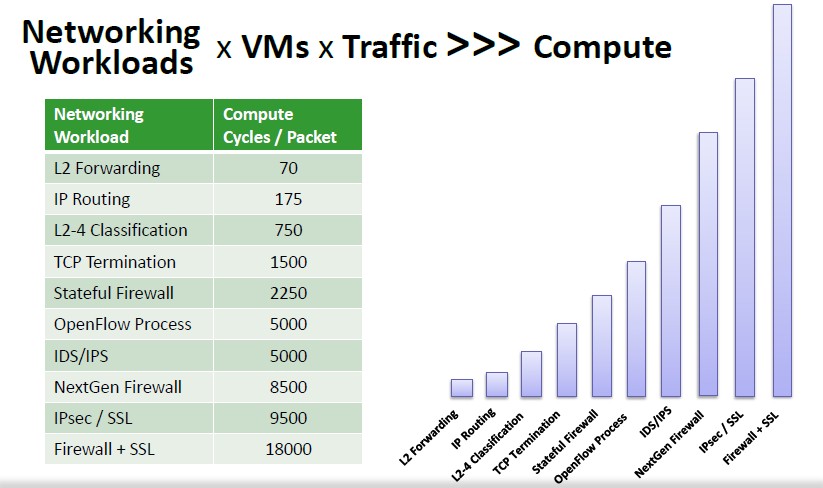

But this is not the only problem. There is another trend that is putting pressure on servers, and that is software-defined networking. With SDN, the idea is to bring network functions onto the servers and get them out of dedicated appliances that are costly and less agile. This is great for bringing flexibility and scalability to the network inside of datacenters, but there is a price to be paid. Running these network functions on generic X86 processors consumes a lot of computing capacity – clock cycles that might otherwise be dedicated to running actual applications. Here is an interesting set of data that Tilera has gathered up to show how many clock cycles it takes to process a packet of data for various network functions:

"This is not the best use of the machines," says Doud. "X86 chips were not designed for networking workloads."

The TILE-Gx chips, however, were. And now they are available in a family of coprocessors that can be tucked inside servers and with a little programming work can take on a lot of the processing associated with the data plane portion of the network stack. The workloads the new TILEncore coprocessors can run include deep packet inspection, firewalls, encryption, routing, and Layer 2 forwarding, among other things.

To give an idea of how much overhead networking places on servers, Doud gives an example of a virtualized server running VMware's ESXi hypervisor. About 70 percent of the processing capacity of the server processors is dedicated to these network data plane functions, with only 30 percent left over for running the virtual machine and its operating system and applications. But by offloading most of these functions to a TILEncore coprocessor inside of the server, these data plane operations take up only about 18 percent of the server processor capacity. That frees up about half of the server capacity, roughly 52 percentage points, and leaves about 82 percent to actually run the VM and applications. To put that in plain English, there is 2.7 times as much server compute (82 percent versus 30 percent) that can be allocated to applications by offloading some of the network functions.
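The arithmetic behind the 2.7X figure can be checked in a few lines. This is a minimal sketch using only the 70 percent and 18 percent shares quoted by Doud; the function name is hypothetical.

```python
def app_capacity_gain(net_share_before, net_share_after):
    """Return (app share before, app share after, ratio of the two).

    Each argument is the fraction of host CPU capacity consumed by
    network data plane functions.
    """
    app_before = 1.0 - net_share_before
    app_after = 1.0 - net_share_after
    return app_before, app_after, app_after / app_before


if __name__ == "__main__":
    before, after, gain = app_capacity_gain(0.70, 0.18)
    print(f"apps get {before:.0%} before offload, {after:.0%} after: {gain:.1f}x")
```

The gain is sensitive to the starting point: the less capacity the applications had to begin with, the larger the multiplier for the same number of cycles freed.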

The idea of using coprocessors is certainly not a new one, and the success or failure of the approach always comes down to the economics of a hybrid solution and the ease of programming to do the offloading. Offloading network functions into Ethernet or InfiniBand adapter cards was relatively easy, but still required that drivers be written for operating systems so that the offload functions could be invoked by applications. (TCP/IP offload is a popular one for network adapters.) For GPU coprocessors, which are mostly used for floating point calculations, compilers had to be tweaked to understand how to dispatch parallel portions of code to the GPUs. Coprocessing is never easy, or transparent. But sometimes, taking a hybrid approach makes the best sense in terms of the cost, density, and thermal envelope of a system.

Making the programming job easier for a coprocessor is probably the most important aspect of any hybrid setup, and the fact that the TILE-Gx processors run Linux and support the GNU C and C++ compilers as well as Java helps a lot. Tilera also supports the KVM hypervisor for carving up secure partitions on the multicore TILE-Gx chips, and Tilera has its own hypervisor as well if you want to use an even thinner abstraction layer to isolate workloads. The company is also working with third party software developers to port key network elements to the TILEncore coprocessors, including a TCP/IP stack, an Open vSwitch virtual switch, deep packet inspection, intrusion detection and prevention, a software-defined network interface card, and network analytics.

The Linux stack above is familiar to the hyperscale datacenter operators and companies that might want to build hybrid X86/TILEncore systems themselves. Plenty of high-end enterprise customers are also deeply familiar with these tools, and so are the telecommunications, service provider, and financial services firms that are expected to be early adopters of the TILEncore coprocessors.

In financial services, the early adopters will probably come in high frequency trading.

"HFT is a hot spot for us," says Doud. "We get a lot lower latency than plugging any sort of network interface card into an X86 machine. And in fact a lot of the transactions can be executed right on the Tilera chip and never even hit the host. These guys are very sophisticated, and in many cases they are running very complex algorithms on a quad-socket beast. But by adding a TILEncore, you got it all: You have low-latency I/O, you can do some transactions right on the card, and you can run those big algorithms on the fast threads of an X86. It is really about bringing the right kind of compute to bear on different aspects of the application."

One early adopter of the TILEncore coprocessors that can be publicly cited is French hosting provider OVH, which has 150,000 servers installed. The French service provider is using the TILEncore coprocessors in its network to protect its customers against distributed denial of service attacks; the company is looking to offload intrusion detection functions to the coprocessors at some point in the future.

There are four different TILEncore coprocessor cards, which have a varying number of cores, network ports, memory capacity, and other features, as shown below:

The top-end TILE-Gx72 processor is used in the heftiest configuration of the TILEncore coprocessors, and the cards are given the name TILEncore to keep them distinct from the Tilera chips aimed at servers. This TILE-Gx72 chip has 72 RISC cores that run at 1.2 GHz, all linked together by a coherent mesh network, with 18 MB of L3 cache and another 5 MB of L1 and L2 cache embedded in those cores. The chip is implemented in a 40 nanometer process, is etched by Taiwan Semiconductor Manufacturing Co, and has a thermal design point of 75 watts. It has four DDR3 memory controllers that can use 1.87 GHz memory and has a sustained memory bandwidth of 60 GB/sec. The chip has configurable XAUI ports that can deliver 32 Ethernet ports at 1 Gb/sec or eight ports running at 10 Gb/sec.
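A few derived numbers make the TILE-Gx72 feeds and speeds easier to compare. The sketch below uses only the figures quoted above; the constant and function names are hypothetical, and the per-core memory bandwidth is a simple average rather than a measured value.

```python
# Figures quoted in the article for the TILE-Gx72.
CORES = 72
L3_MB = 18
L1_L2_MB = 5
MEM_BW_GB_PER_SEC = 60  # sustained

# The two Ethernet port configurations: (port count, Gb/sec per port).
PORT_CONFIGS = {"1GbE": (32, 1), "10GbE": (8, 10)}


def aggregate_gbps(ports, gbps_per_port):
    """Total Ethernet bandwidth for one port configuration, in Gb/sec."""
    return ports * gbps_per_port


total_cache_mb = L3_MB + L1_L2_MB
mem_bw_per_core = MEM_BW_GB_PER_SEC / CORES  # GB/sec, averaged over all cores

if __name__ == "__main__":
    for name, (ports, speed) in PORT_CONFIGS.items():
        print(f"{name}: {ports} ports, {aggregate_gbps(ports, speed)} Gb/sec aggregate")
    print(f"on-chip cache: {total_cache_mb} MB")
    print(f"memory bandwidth per core: {mem_bw_per_core:.2f} GB/sec")
```

The 10 Gb/sec configuration offers two and a half times the aggregate Ethernet bandwidth of the 1 Gb/sec one, even though it exposes a quarter as many ports.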

The TILEncore coprocessors are available now. Tilera is not divulging its pricing for the coprocessors, but obviously the dollars have to make sense. If a reasonably configured server costs $5,000 to $6,000 and a Tilera coprocessor can give half of its cycles back, then a TILEncore card should cost something on the order of $2,500 to $3,000. This is about the same as the street price for an Nvidia Tesla GPU coprocessor, by the way.
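The break-even reasoning can be written down directly. This is a rough sketch that assumes, as the paragraph above does, that recovered host cycles are worth the same share of the server's purchase price; it ignores power, rack space, and software costs, and the function name is hypothetical.

```python
def breakeven_card_price(server_cost, fraction_recovered):
    """Price at which a card costs the same as the server capacity it frees up."""
    return server_cost * fraction_recovered


if __name__ == "__main__":
    for cost in (5000, 6000):
        price = breakeven_card_price(cost, 0.5)
        print(f"${cost:,} server -> card worth up to ${price:,.0f}")
```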