Materials Upstage the Human Genome Project

As research in Kevlar, lithium-ion batteries and graphene has shown us, new materials drive new technologies, both in how they are manufactured and in how they are applied. But such so-called “game-changing” materials are few and far between, and a new material takes an average of 18 years to reach the market.

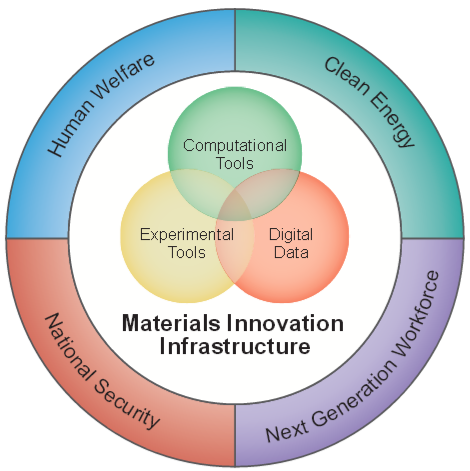

Aiming to shorten that timeline is the Materials Genome Initiative, a younger sibling of the Human Genome Project, which ranks among the best-known scientific research projects of the twenty-first century.

According to the project leaders, this work could upstage the Human Genome Project: it is already closer to application than the Human Genome Project was in 2001, and it could have a profound impact on the U.S. manufacturing sector.

Now, two years after the President launched the Materials Genome Initiative, the Obama Administration has announced additional commitments that could help the initiative to make that difference even sooner.

The commitments range from new research institutes to online courses, but one public-private effort has stepped into the spotlight. In it, the DOE’s National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory and MIT have joined forces with Intermolecular, Inc., to deliver an open-platform web tool that will serve as an encyclopedia for researchers looking to predict material behavior. Called the Materials Project, the effort so far represents the leading edge of materials research.

When asked about the 18-year incubation period for new materials, Gerbrand Ceder, leader of the Materials Project, said, “We should do better.” Ceder, a professor of materials science and engineering at MIT, is best known for developing speedy lithium batteries, but he has now turned to a project that could help materials researchers regardless of their areas of interest. Through the Materials Project, he aims to cut materials development time and perhaps fundamentally alter U.S. manufacturing.

As it stands, conceptualizing and commercializing a new material takes so long largely because of the time required to identify all of the material’s properties. These efforts have been built around individual experiments, each designed to isolate one beneficial or potentially dangerous property of the material: effectively, trial and error.

Ceder’s project aims to take the guesswork out of the development cycle with a materials database that will house the properties of hundreds of thousands of compounds. By making a material’s properties evident before testing even begins, the database could save countless hours of lab work.

With the support of supercomputers at NERSC, the Berkeley Lab Lawrencium cluster, and systems at the University of Kentucky, the researchers are taking a genomics approach to characterizing and cataloging each property of a new material. With this high-throughput capacity, Ceder’s group expects to run approximately 200 calculations at a time and to fully screen as many as 100 materials per day. At that rate, analyzing the properties of the nearly 100,000 inorganic compounds that scientists already know of becomes an achievable goal.
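To put the quoted rate in perspective, the short calculation below divides the roughly 100,000 known inorganic compounds by 100 fully screened materials per day. It is a back-of-the-envelope sketch that assumes the rate holds every day with no downtime; the constant and function names are hypothetical.

```python
# Rough screening timeline from the rates quoted in this article.
# Assumption: the 100-materials-per-day rate holds every day, with no downtime.

MATERIALS_PER_DAY = 100    # quoted full-screening rate
KNOWN_COMPOUNDS = 100_000  # approximate number of known inorganic compounds


def days_to_screen(n_compounds: int, per_day: int) -> int:
    """Whole days needed to screen n_compounds at per_day materials per day."""
    # Ceiling division: a partially used final day still counts as a day.
    return -(-n_compounds // per_day)


days = days_to_screen(KNOWN_COMPOUNDS, MATERIALS_PER_DAY)
years = days / 365
print(f"{days} days, or about {years:.1f} years")  # 1000 days, or about 2.7 years
```

Under those assumptions, the known inorganic compounds could be covered in under three years of continuous screening.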

Making use of this computing power are computational chemistry algorithms that use the equations of quantum mechanics to predict how a material will behave. Each calculation is based on density functional theory, a quantum-mechanical method for computing a material’s electronic structure. For a crystal, the calculation treats the material as identical unit cells repeated in a defined pattern, so only one cell has to be modeled explicitly. Most desktop computers could easily handle a material composed of two-atom cells, but when you get up to 1,000 atoms, it’s time to call in the big guns.
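The idea of a repeating cell, and why larger cells need supercomputers, can be sketched in a few lines. The example below tiles a hypothetical two-atom cubic cell (4.0-angstrom edge, CsCl-like arrangement) into a larger block. It then estimates relative cost assuming that work grows roughly as the cube of the number of atoms, a common rule of thumb for conventional density functional theory (DFT) codes rather than a figure from the Materials Project. All names and values are illustrative.

```python
# Sketch: describe a crystal by one repeating unit cell, then tile it.
# The two-atom cubic cell and its 4.0-angstrom edge are hypothetical values.
import itertools

# Lattice vectors of a hypothetical simple cubic cell (angstroms), one per row.
LATTICE = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
# Two atoms per cell, in fractional coordinates (a CsCl-like arrangement).
BASIS = [(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)]


def build_supercell(lattice, basis, reps):
    """Repeat the unit cell reps[0] x reps[1] x reps[2] times; return Cartesian positions."""
    positions = []
    for shift in itertools.product(*(range(r) for r in reps)):
        for frac in basis:
            # Fractional coordinate of this atom in the shifted copy of the cell.
            f = [frac[i] + shift[i] for i in range(3)]
            # Cartesian position = sum_i f_i * a_i, where a_i is the i-th lattice vector.
            positions.append([sum(f[i] * lattice[i][j] for i in range(3)) for j in range(3)])
    return positions


def relative_dft_cost(n_atoms, n_ref, exponent=3):
    """Cost of an n_atoms cell relative to an n_ref cell, assuming cost ~ N**exponent."""
    return (n_atoms / n_ref) ** exponent


supercell = build_supercell(LATTICE, BASIS, (2, 2, 2))
print(len(supercell))              # 16 atoms: 8 copies of a 2-atom cell
print(relative_dft_cost(1000, 2))  # 125000000.0
```

If the cubic rule of thumb holds, a 1,000-atom cell costs on the order of a hundred million times more than a two-atom cell, which is why the larger calculations move from desktops to supercomputers.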

Ceder is putting this to work by simulating properties such as voltage or band gap, and determining which materials are best suited for a battery or a solar cell before any of them is fabricated.
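Once predicted properties are stored, screening for an application amounts to filtering records against target ranges. The sketch below uses made-up placeholder materials. Its thresholds are assumptions chosen only for illustration: a 1.1 to 1.7 eV band-gap window, roughly bracketing the gap theory favors for a single-junction solar cell, and a minimum average voltage of 3.5 V for a battery cathode. It does not reflect the Materials Project's own data model.

```python
# Sketch: screen predicted properties for a given application.
# All material names, values, and thresholds below are hypothetical.
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class PredictedMaterial:
    name: str
    band_gap_ev: float          # predicted band gap, in eV
    voltage_v: Optional[float]  # predicted average voltage vs. Li/Li+, in V (None if not computed)


CANDIDATES = [
    PredictedMaterial("material_A", 1.4, None),
    PredictedMaterial("material_B", 3.2, 4.1),
    PredictedMaterial("material_C", 0.0, None),
    PredictedMaterial("material_D", 2.1, 3.4),
]


def screen_for_solar(materials: List[PredictedMaterial], low: float = 1.1, high: float = 1.7) -> List[str]:
    """Names whose predicted band gap falls inside [low, high] eV."""
    return [m.name for m in materials if low <= m.band_gap_ev <= high]


def screen_for_cathode(materials: List[PredictedMaterial], min_voltage: float = 3.5) -> List[str]:
    """Names with a computed voltage of at least min_voltage volts."""
    return [m.name for m in materials if m.voltage_v is not None and m.voltage_v >= min_voltage]


print(screen_for_solar(CANDIDATES))    # ['material_A']
print(screen_for_cathode(CANDIDATES))  # ['material_B']
```

Keeping the thresholds as parameters lets the same predicted data be reused for different applications without recomputing anything.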

Intermolecular has stepped in to contribute experimental data that can help build a comprehensive database even more quickly. The San Jose, California-based company has used proprietary high-throughput combinatorial experimentation for some time, and that capability will now be put to use in labs at MIT and Berkeley Lab.

“Access to high-quality experimental data is absolutely essential to benchmark high-throughput computational predictions for any application,” said Berkeley Lab scientist Kristin Persson, co-founder of the Materials Project. “We begin every materials discovery project with a comparison to existing data before we venture into the space of undiscovered compounds. This is the first effort to integrate private sector experimental data into the Materials Project, and could form the basis of a general methodology for integrating experimental data inputs from a wide range of scientific and industrial sources.”

Persson explained that for researchers throughout the public and private sectors, having experimental data in the Materials Project can help in two ways. First, they can use the data as a reference point against which to measure and adjust their methods, so as to get the most accurate information possible.

Second, it allows for direct comparison between experimental and predicted values. “It builds confidence in our predictive models for our users if they can see the values agree,” she said.
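Both uses Persson describes, measuring a method against reference data and then adjusting it, can be illustrated with a simple benchmark. The sketch below computes the mean absolute error and mean signed error between hypothetical predicted and experimental band gaps. It lists which compounds agree within an assumed tolerance of 0.3 eV, then applies a constant offset, one of the simplest corrections for a systematic bias. The data, tolerance, and function names are all hypothetical; this is not the Materials Project's actual procedure.

```python
# Sketch: benchmark predictions against experiment, then apply a constant offset.
# All names and values are hypothetical band gaps in eV: (predicted, experimental).
from statistics import mean

REFERENCE = {
    "material_A": (1.0, 1.5),
    "material_B": (2.0, 2.25),
    "material_C": (3.0, 3.75),
}


def benchmark(pairs, tolerance):
    """Return (mean absolute error, mean signed error, names agreeing within tolerance)."""
    errors = {name: exp - pred for name, (pred, exp) in pairs.items()}
    mae = mean(abs(e) for e in errors.values())
    bias = mean(errors.values())  # positive means predictions run low
    agree = sorted(name for name, e in errors.items() if abs(e) <= tolerance)
    return mae, bias, agree


def apply_offset(pairs, offset):
    """Shift every prediction by a constant offset; experimental values are untouched."""
    return {name: (pred + offset, exp) for name, (pred, exp) in pairs.items()}


mae, bias, agree = benchmark(REFERENCE, tolerance=0.3)
print(mae, bias, agree)  # 0.5 0.5 ['material_B']

corrected = apply_offset(REFERENCE, bias)
print(benchmark(corrected, tolerance=0.3)[2])  # ['material_A', 'material_B', 'material_C']
```

Here the offset is fitted and checked on the same three compounds for brevity. A fairer test would fit it on one set of reference data and then check agreement on compounds it has not seen.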