The Evolution of Green Data Center Optimization

Over the last few weeks, Green Computing Report has shone the spotlight on various optimization methods, among them Google’s research into improving its air cooling system and research out of Singapore that sings the praises of a database management system called ReinDB.

What is rarely mentioned is the journey that led to these optimization solutions. After all, the focus on optimizing data centers for their environmental impact is relatively new, so the related body of research is still thin.

A research team out of Arizona State University (ASU) is trying to change that with a paper on how evolutionary algorithms (EAs) could and should shape the future of green computing. According to the team, “Evolutionary Algorithms are efficient, nature-inspired planning and optimization methods based on the principal of natural evolution and genetics.”

Data centers are, to a certain extent, inefficient. If everyone were happy with how their data centers ran, from both an energy-efficiency and an environmental perspective, there would be no such thing as Green Computing Report.

Alas, GCR exists, and so do inefficient data centers. To reduce that inefficiency, the team makes the case for EAs. “These techniques,” the paper explains, “are well-known for their ability to find satisfactory solutions within a reasonable amount of time. This makes them especially suited for solving the complex problems of green computing.”

Before tackling what constitutes the ‘complex problems of green computing’ and how evolutionary algorithms can address them, it is worth exploring the principle behind EAs in general. Per the ASU research, EAs are essentially algorithms that mimic the natural selection and ‘survival of the fittest’ principles that have guided biological evolution for billions of years. “Given a population of individuals,” the research noted, “a guided random selection causes natural selection and this causes a rise in the fitness of population to achieve the desired goal.”
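To make that loop concrete, here is a minimal sketch of a generic evolutionary algorithm in Python. It is not taken from the ASU paper: the function name `evolve`, the choice of tournament selection, one-point crossover and elitism, and the toy "OneMax" problem (maximize the number of 1 bits in a string) are all illustrative assumptions standing in for a real data center objective.

```python
import random
from typing import Callable, List, TypeVar

T = TypeVar("T")

def evolve(
    fitness: Callable[[T], float],
    random_individual: Callable[[random.Random], T],
    mutate: Callable[[T, random.Random], T],
    crossover: Callable[[T, T, random.Random], T],
    pop_size: int = 30,
    generations: int = 50,
    seed: int = 0,
) -> T:
    """Hypothetical generic EA loop; higher fitness is better."""
    rng = random.Random(seed)
    population: List[T] = [random_individual(rng) for _ in range(pop_size)]

    def tournament() -> T:
        # Guided random selection: sample 3 individuals, keep the fittest.
        return max(rng.sample(population, 3), key=fitness)

    for _ in range(generations):
        # Elitism: the current best always survives into the next generation.
        next_gen = [max(population, key=fitness)]
        while len(next_gen) < pop_size:
            child = crossover(tournament(), tournament(), rng)
            next_gen.append(mutate(child, rng))
        population = next_gen
    return max(population, key=fitness)

# Toy problem (an assumption, not from the paper): maximize the number of 1 bits.
N = 20

def random_bits(rng: random.Random) -> List[int]:
    return [rng.randint(0, 1) for _ in range(N)]

def flip_bits(bits: List[int], rng: random.Random) -> List[int]:
    # Flip each bit independently with probability 1/N.
    return [b ^ 1 if rng.random() < 1 / N else b for b in bits]

def one_point_crossover(a: List[int], b: List[int], rng: random.Random) -> List[int]:
    cut = rng.randrange(1, N)
    return a[:cut] + b[cut:]

best = evolve(sum, random_bits, flip_bits, one_point_crossover)
print(sum(best))
```

Because the best individual is always carried forward, the population's top fitness can never decrease from one generation to the next; selection plus random variation does the rest.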

The team identifies three specific areas where EAs can be deployed to yield more optimized results: data centers, wireless sensor networks, and body sensor networks. The focus here is on data centers, but the other two applications are important as well.

Evolutionary algorithms are best suited to complex optimization problems, such as finding the best data center setup, because they are more likely to turn up unusual solutions that people have yet to consider. An EA typically begins with a randomly generated population of candidate solutions, sometimes seeded with candidates that make intuitive sense.
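As a rough illustration of seeding, the sketch below builds an initial population for a hypothetical job-placement problem, where each candidate lists which server runs each job. The encoding, the names `round_robin` and `initial_population`, and the use of round-robin as the "intuitive" seed are assumptions made for this example only.

```python
import random
from typing import List, Sequence

# Hypothetical encoding: placement[j] is the index of the server that runs job j.
Placement = List[int]

def round_robin(num_jobs: int, num_servers: int) -> Placement:
    """An 'intuitive' seed: spread jobs evenly across the servers."""
    return [j % num_servers for j in range(num_jobs)]

def initial_population(
    num_jobs: int,
    num_servers: int,
    pop_size: int,
    rng: random.Random,
    seeds: Sequence[Placement] = (),
) -> List[Placement]:
    """Start from any hand-picked seeds, then fill the rest at random."""
    population = [list(s) for s in seeds[:pop_size]]
    while len(population) < pop_size:
        population.append([rng.randrange(num_servers) for _ in range(num_jobs)])
    return population

rng = random.Random(42)
pop = initial_population(8, 4, pop_size=10, rng=rng, seeds=[round_robin(8, 4)])
print(pop[0])  # [0, 1, 2, 3, 0, 1, 2, 3]
```

Keeping most of the population random preserves diversity, so the search is nudged by the seed without being confined to it.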

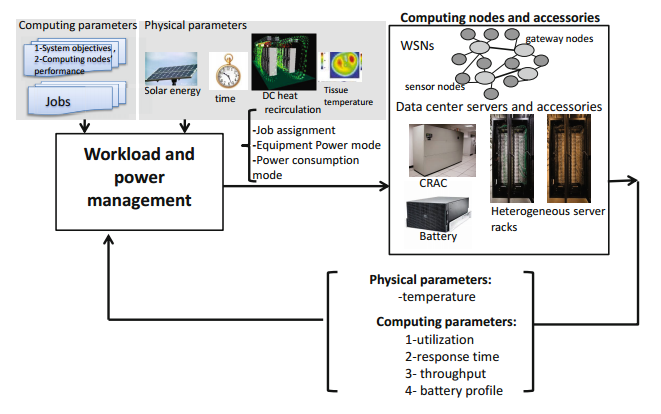

They are also designed to account for the numerous trade-offs involved in optimizing a facility to be green. Below is a diagram of the individual sets of variables that evolutionary algorithms (or any algorithms, for that matter) would have to take into account.

As noted in previous GCR articles, an institution sometimes has to sacrifice a modicum of performance for efficiency. The research corroborates that notion, saying that “software schemes exist that address green computing in DCPS [data center cyber-physical systems] by taking into account only the cyber behavior while ignoring the physical behavior. Such schemes usually trade energy for other system performance objectives such as the jobs response time.”

As such, energy-saving measures often affect latency and throughput in ways that are poorly understood, because they have mostly been studied in theory. Theory versus practice (or the lack of practice) turned out to be a recurring theme, as most papers on the subject have so far centered on simulations and have yet to translate into real-world applications.

Either way, any such application would have to handle the trade-off among energy, latency, and throughput. The team delved into these details, noting that “the performance objectives of a distributed system are either jobs’ perceived performance (i.e. latency of a job) or the cumulative performance of all jobs (e.g., the average latency of all jobs and throughput of the system) or both. These objectives are not usually independent.”
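Because these objectives pull against each other, multi-objective EAs (NSGA-II is a well-known example) often rank candidates by Pareto dominance rather than by a single score. The article does not say which technique the ASU paper favors, so treat the sketch below as one plausible approach; the `Objectives` fields and all numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Objectives:
    name: str
    energy_kwh: float      # lower is better
    avg_latency_ms: float  # lower is better
    throughput_jps: float  # jobs per second; higher is better

def dominates(a: Objectives, b: Objectives) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = (
        a.energy_kwh <= b.energy_kwh
        and a.avg_latency_ms <= b.avg_latency_ms
        and a.throughput_jps >= b.throughput_jps
    )
    strictly_better = (
        a.energy_kwh < b.energy_kwh
        or a.avg_latency_ms < b.avg_latency_ms
        or a.throughput_jps > b.throughput_jps
    )
    return no_worse and strictly_better

def pareto_front(candidates: List[Objectives]) -> List[Objectives]:
    """Keep every candidate that no other candidate dominates."""
    return [c for c in candidates if not any(dominates(o, c) for o in candidates)]

# Made-up candidate configurations, for illustration only.
candidates = [
    Objectives("consolidate", energy_kwh=10.0, avg_latency_ms=50.0, throughput_jps=100.0),
    Objectives("spread", energy_kwh=12.0, avg_latency_ms=40.0, throughput_jps=100.0),
    Objectives("naive", energy_kwh=12.0, avg_latency_ms=55.0, throughput_jps=90.0),
]
print([c.name for c in pareto_front(candidates)])  # ['consolidate', 'spread']
```

Neither "consolidate" nor "spread" beats the other outright (one saves energy, the other cuts latency), so both survive; choosing between them is exactly the energy-versus-performance decision described above.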

Efficiently reducing a facility’s hot spots (areas where the heat expelled by the equipment peaks) is a good example of where EAs can be useful. An example of such an application is shown below.

One factor the team identified as useful in EA-based optimization was a server’s idle time. More specifically, how long a machine has been idle since it last ran tasks turned out to matter for the build-up of hot spots in a facility. “In data centers, an idle node that has been idle for a long period of time will heat up more slowly than an idle node that only recently stopped running tasks. Thermal safety aware workload placement requires minimizing the worst case (hot spots).”
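A minimal sketch of thermal-aware placement under stated assumptions: each node carries residual heat that decays exponentially with idle time, each placed job adds a fixed temperature rise, and the objective is the worst-case (hottest) node. The thermal model, every constant, and the (mu + lambda) mutation-only search are hypothetical choices for illustration, not the model from the ASU paper.

```python
import math
import random
from typing import List, Sequence

# All constants below are hypothetical, chosen only to make the example concrete.
AMBIENT_C = 22.0       # inlet air temperature (deg C)
RESIDUAL_C = 15.0      # extra heat a node holds right after it stops running tasks
TAU_S = 600.0          # time constant for that residual heat to dissipate (seconds)
HEAT_PER_JOB_C = 4.0   # temperature rise caused by each job placed on a node

def current_temp(idle_seconds: float) -> float:
    """Assumed model: residual heat decays exponentially with idle time."""
    return AMBIENT_C + RESIDUAL_C * math.exp(-idle_seconds / TAU_S)

def worst_case_temp(placement: Sequence[int], idle: Sequence[float]) -> float:
    """Objective to minimize: the hottest node once the jobs are placed."""
    temps = [current_temp(t) for t in idle]
    for node in placement:
        temps[node] += HEAT_PER_JOB_C
    return max(temps)

def place_jobs(num_jobs: int, idle: Sequence[float], pop_size: int = 20,
               generations: int = 200, seed: int = 1) -> List[int]:
    """(mu + lambda) evolutionary search over job-to-node placements."""
    rng = random.Random(seed)
    num_nodes = len(idle)

    def cost(p: List[int]) -> float:
        return worst_case_temp(p, idle)

    population = [[rng.randrange(num_nodes) for _ in range(num_jobs)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        offspring = []
        for parent in population:
            child = list(parent)
            child[rng.randrange(num_jobs)] = rng.randrange(num_nodes)  # move one job
            offspring.append(child)
        # Survival of the fittest: keep the pop_size coolest placements overall.
        population = sorted(population + offspring, key=cost)[:pop_size]
    return population[0]

# Seconds since each node last ran a task: node 0 just stopped, node 3 has idled an hour.
idle = [0.0, 30.0, 600.0, 3600.0]
best = place_jobs(num_jobs=4, idle=idle)
print(best, round(worst_case_temp(best, idle), 2))
```

Under these assumptions the search should steer all four jobs onto the two long-idle nodes, leaving the recently active node 0 as the hottest at 37 °C. A greedy rule would also solve a model this simple; an EA becomes more attractive when nodes interact thermally, for instance through hot-air recirculation.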

There are, however, a few limitations to using EAs, chief among them determining which solutions are actually best. The definition of an ‘optimal solution,’ or a solution that survives the evolutionary process, is loose at best, since relatively few practical tests have been run.

“To ensure the performance of EA schemes in practice, a comprehensive simulation/experimental study is required which examine the performance of solutions over various type of input data. Most of proposed solutions are not properly evaluated,” the research noted in its conclusion.

Another limitation is the amount of time it takes for an evolutionary algorithm to produce a ‘winner,’ or workable near-optimal solution. “Such problems turn out to be NP-hard, where the optimal solution cannot be calculated in a time efficient way. Therefore, literatures develop heuristic and meta-heuristic solutions using EA.”
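To see why exhaustive search breaks down, consider a hypothetical placement problem (not one taken from the paper): assigning $m$ jobs to $n$ servers, where any job may go to any server. The number of candidate placements grows exponentially in $m$:

$$
|\mathcal{S}| = n^{m}, \qquad n = 20,\; m = 10 \;\Rightarrow\; 20^{10} = 2^{10}\cdot 10^{10} \approx 1.02 \times 10^{13}.
$$

Even at a million evaluations per second, checking every placement would take roughly $10^{7}$ seconds, or about four months. A large search space alone does not make a problem NP-hard, but it illustrates why heuristic and meta-heuristic searches such as EAs, which sample only a small fraction of the space, are used instead.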

That said, EA-found optimizations generally deliver encouraging returns. “Generally, EA based solutions are shown to have higher performance than their heuristic counterpart, but they may take longer time to complete.”
