Floating All Boats: A Conversation with NCSA’s Merle Giles

If you're simulating the universe, it helps to have a machine like NASA's Pleiades supercomputer, with its 112,540 cores and a peak speed of 1.33 petaflops. Or perhaps you want to explore what happens in a core-collapse supernova. You can begin your simulation with a modest 4,000 cores on Oak Ridge National Laboratory's 1.75-petaflop Cray supercomputer and then scale up to 64,000 cores to produce a highly realistic view of the violent magnetic activity inside the exploding star.

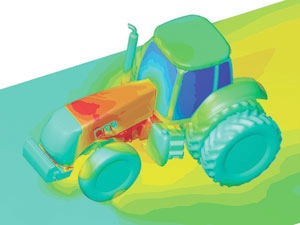

But if you want to use a supercomputer to design a better aircraft wing or a state-of-the-art farm tractor, you have a different set of problems to contend with – and all those cores and all that speed may be more of a hindrance than a help.

Engineering vs. Scientific Discovery

Since being appointed director of the Private Sector Program (PSP) at the National Center for Supercomputing Applications (NCSA), University of Illinois, in 2008, Merle Giles has learned a lot about this dichotomy. The charter of his organization is to help business and industry solve real-world problems using the center's high-performance computers, high-tech innovations and infrastructure, and expert staff.

NCSA has been in the news lately with the announcement of one of those high-tech innovations: the NSF-funded Extreme Science and Engineering Discovery Environment (XSEDE), which expands and replaces the TeraGrid project. NCSA's John Towns, who heads up the project, said that this integrated collection of advanced digital resources and services is a "distributed cyber infrastructure in which researchers can establish private, secure environments that have all the resources, services, and collaboration support they need to be productive."

For Giles, XSEDE is a grand undertaking, but it is just one element in a larger vision of working with industrial users, both large and small. Although XSEDE will help some of these companies, the project is primarily a government-funded response to the needs of the academic science community. And academics and engineers take very different views of the world.

Says Giles, "Recently I heard a researcher from a federal lab speaking about scale and what he focused on was core count. He implied that if you're looking for a government grant, scale is everything. If you're not running at least 30,000 cores at a time, don't bother applying."

Engineering, he claims, is different – a claim based on working with companies like Boeing, BP, Caterpillar, John Deere, GE and Procter & Gamble, and with organizations like the Washington, DC-based Council on Competitiveness.

"We have observed that the science behind the HPC challenge for engineering is not well understood," he says. "As a result, we have this myth that more cores are better."

Giles points out that at NCSA, the Partner Program includes a number of power users who apply HPC to engineering at core counts that would cause members of the academic community to turn up their noses. But, he says, a lot of excellent work is being done with relatively few cores running very sophisticated codes.

Navigating the Speed Bumps

So if access to machines with staggering core counts is not a major barrier to leveraging HPC for engineering projects, what is? High on the list of Giles' stumbling blocks is access to the software itself – and that means licensing.

Earl Dodd, executive director of the Rocky Mountain Supercomputer Center, wrote in HPC in the Cloud earlier this year, "As techies, we get excited about the latest processor, newest chips and fancy hardware, but it's easy to forget that software is one of the most critical aspects of our business. Whether delivered as a service in the cloud or as a seat in an enterprise site license, software applications bring value to the end user. Unfortunately…the traditional licensing models that govern most software packages do not adapt well in the cloud." The same holds in more traditional settings, from desktop workstations to dedicated HPC clusters.

One of Giles' smaller manufacturing customers is typical. The company is running code from a large, well-known ISV on an NCSA cluster, but all it can afford is a license to run on a single node. The result is a very coarse-grained model that does not allow much useful work. Licensing of this kind also does little to motivate small- to medium-sized manufacturers (SMMs) to make HPC modeling and simulation an integral part of their product lifecycle management (PLM) processes.

Giles is working closely with the Council on Competitiveness and its NDEMC (National Digital Engineering and Manufacturing Consortium) initiative. NDEMC is initially focused on setting up pilot projects in the Midwest to bring the benefits of advanced digital manufacturing techniques to the SMMs. ISVs participating in the consortium are expected to relax many of their licensing restrictions, thus lowering or eliminating that barrier.

However, licensing is not the only hurdle. In many cases, the user interface, especially for the uninitiated, is daunting – "It's like the old days of the DOS command line," says Giles. "If you don't get your syntax right, it's the end of the game."

He also points out that many of the ISVs serving the CAE and CAD community have their roots in writing code for PCs and desktop workstations; HPC and parallelization are not part of their skill set. Rather than trying to teach them numerical analysis and the ins and outs of HPC, Giles proposes an end-to-end solution that takes the ISVs' PC heritage into account and leverages visualization, pre- and post-processing and other techniques designed to move them up the on-ramp to advanced digital manufacturing tools.

Know Your Science

But all the tools in the world will not help OEMs, SMMs and ISVs make full use of the technology NCSA offers if they lack the necessary domain knowledge and an understanding of the physics involved. For example, the tendency is to regard CFD (computational fluid dynamics) as one broad category. But when Giles surveyed several industrial partners a few years ago, he noticed that two companies running CFD simulations had wildly different resource utilization for jobs that seemed relatively similar. The answer lay in the physics, specifically the classes of fluids involved. Given the complexity of the physics, it is far easier to parallelize software for compressible fluids such as air than for incompressible fluids like oil. For this reason, two manufacturers, both employing CFD, can find that their scaling requirements are very different: a few cores vs. thousands of cores.

As part of providing the best, most accessible and cost-effective HPC services to the center's OEM, SMM and ISV partners, NCSA scientists and engineers constantly fine-tune the center's clusters and supercomputers to optimize commercial codes such as Simulia's Abaqus and Ansys Fluent.

Recently the center worked with a power user to optimize engineering code running inside a single node. The NCSA Partner Program team applied a mix of general scientific and specific domain knowledge, added a dash of HPC and parallelization expertise, and topped it off with consulting capabilities to, as Giles puts it, "tame this beast."

The upshot? What was a 160-hour job was reduced to three hours.

"At NCSA our goal is to lift all boats – the OEMs, SMMs and ISVs," Giles says. "We are working with all three communities to remove the barriers to their use of HPC. In particular, the impact on the SMM community will be huge if we can get past the licensing hump and adapt the most-used codes to run on high-end workstations. We are approaching this not by stressing core count but by concentrating on ease of use, a thorough understanding of the scientific and engineering aspects of the jobs, and fine-tuning our hardware and software to achieve optimum performance."

The video below features Merle Giles describing the NCSA Private Sector Program.